Running WordCount in Pseudo-Distributed Mode from Eclipse

Developing Hadoop 0.20 programs with Eclipse (original article: http://trac.nchc.org.tw/cloud/wiki/waue/2009/0617)

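0. Preface

- Hadoop programming relies on many object-oriented constructs, including inheritance and interfaces, and it needs the correct classpath to be imported. Without that, writing a Hadoop program is little more than a typing exercise...

- Handling such programs in vim quickly becomes tedious and error-prone. Using Eclipse together with the MapReduce plugin makes the work far easier.

- Back in the Hadoop 0.16 to 0.19 releases, I tried the plugin for each version, and every one had problems large and small. For example, the Hadoop plugin could not be used properly, or "Run as MapReduce" did not work. Hadoop 0.16 with IBM's hadoop_plugin offered complete functionality, but old soldiers never die, they just fade away...

- As Confucius said, "Time flows on like this river, never ceasing day or night." The earlier documents are now out of date. To keep up, this article focuses on using Eclipse 3.4.2 to develop Hadoop 0.20 programs and on running those programs on the Hadoop platform.

- What follows is my own approach. If you have a better one, or spot something that needs correcting, please let me know.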

Author: Wei-Yu Chen, National Center for High-performance Computing (NCHC), Grid Technology Division. Mail: waue @ nchc.org.tw

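0.0 Info Update

- Last Update: 2010/01/22

The latest Eclipse 3.5, used with Ubuntu 9.04 and hadoop-eclipse-plugin 0.20.1, passes initial testing: the basic functions work normally.

On Ubuntu 9.10, however, every Eclipse version appears to have a GTK GUI bug. Setting GDK_NATIVE_WINDOWS=1 is reported to work around it, but in my initial tests it did not seem to help.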

0.1 Environment

- Ubuntu 8.10

- sun-java-6

- eclipse 3.4.2

- hadoop 0.20.0

0.2 Directories

- User: waue

- User home directory: /home/waue

- Project directory: /home/waue/workspace

- Hadoop directory: /opt/hadoop

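1. Installation

You do not need to follow the installation steps exactly; they are given for reference only. All that matters is that Java, Hadoop and Eclipse are installed and that you know where each one lives.

1.1. Install Java

First, install the basic Java packages: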

$ sudo apt-get install java-common sun-java6-bin sun-java6-jdk sun-java6-jre

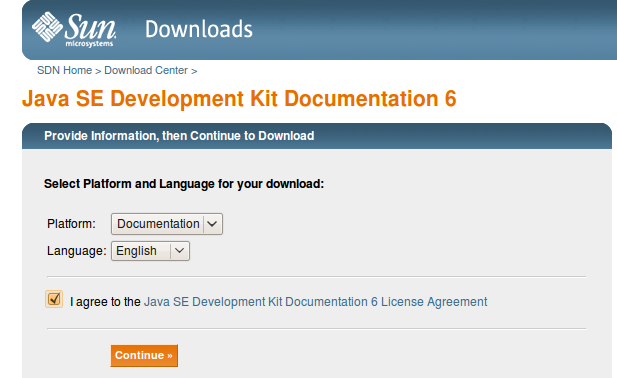

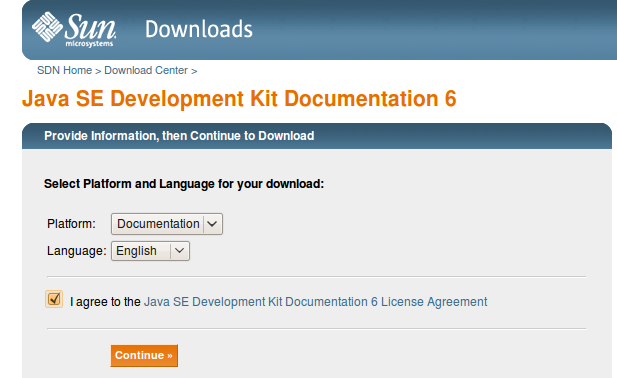

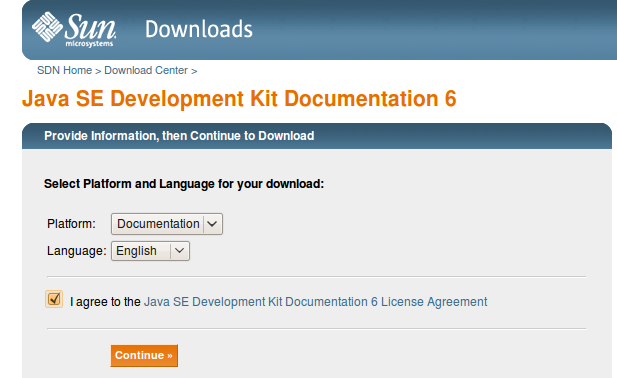

1.1.1. Install sun-java6-doc

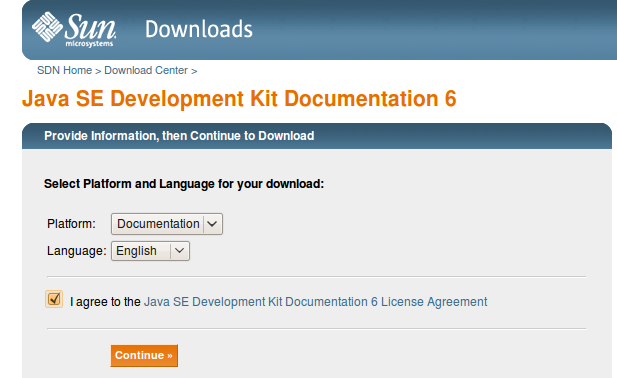

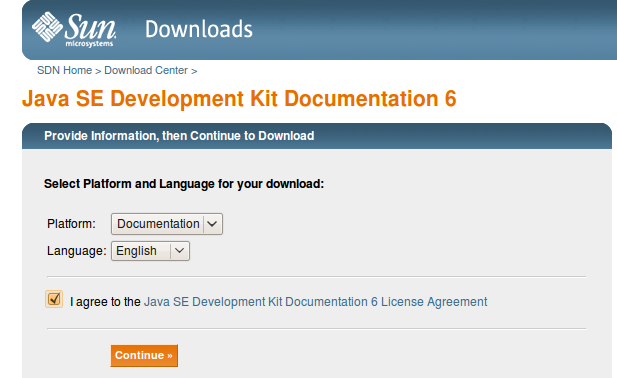

1. Download the javadoc archive (jdk-6u10-docs.zip).

2. When the download finishes, put the file in /tmp/.

3. Run:

$ sudo apt-get install sun-java6-doc

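1.2. Install and configure SSH

    $ sudo apt-get install ssh
    $ ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa
    $ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys
    $ ssh localhost

If ssh localhost logs in without asking for a password, the setup is complete.

1.3. Install Hadoop

Install Hadoop 0.20 under /opt/ and make it available as /opt/hadoop: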

    $ cd ~
    $ wget http://apache.ntu.edu.tw/hadoop/core/hadoop-0.20.0/hadoop-0.20.0.tar.gz
    $ tar zxvf hadoop-0.20.0.tar.gz
    $ sudo mv hadoop-0.20.0 /opt/
    $ sudo chown -R waue:waue /opt/hadoop-0.20.0
    $ sudo ln -sf /opt/hadoop-0.20.0 /opt/hadoop

- /opt/hadoop/conf/hadoop-env.sh

      export JAVA_HOME=/usr/lib/jvm/java-6-sun
      export HADOOP_HOME=/opt/hadoop
      export PATH=$PATH:/opt/hadoop/bin

- /opt/hadoop/conf/core-site.xml

      <configuration>
        <property>
          <name>fs.default.name</name>
          <value>hdfs://localhost:9000</value>
        </property>
        <property>
          <name>hadoop.tmp.dir</name>
          <value>/tmp/hadoop/hadoop-${user.name}</value>
        </property>
      </configuration>

- /opt/hadoop/conf/hdfs-site.xml

      <configuration>
        <property>
          <name>dfs.replication</name>
          <value>1</value>
        </property>
      </configuration>

- /opt/hadoop/conf/mapred-site.xml

      <configuration>
        <property>
          <name>mapred.job.tracker</name>
          <value>localhost:9001</value>
        </property>
      </configuration>
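All three site files share the same `<configuration>/<property>/<name>/<value>` layout, so a typo in a property name is easy to miss. The sketch below lets you check a site file before you start the daemons. It uses only the JDK's DOM parser, and `SiteXmlCheck` is a hypothetical helper, not part of Hadoop. It deliberately leaves variables such as `${user.name}` unexpanded, whereas Hadoop itself would substitute them.

```java
import java.io.ByteArrayInputStream;
import java.io.File;
import java.util.LinkedHashMap;
import java.util.Map;

import javax.xml.parsers.DocumentBuilder;
import javax.xml.parsers.DocumentBuilderFactory;

import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical helper (not part of Hadoop): lists the <name>/<value> pairs of a *-site.xml file.
// Written without Java 7+ features so it also compiles on the sun-java6 JDK used in this article.
class SiteXmlCheck {

    static Map<String, String> parse(Document doc) {
        Map<String, String> props = new LinkedHashMap<String, String>(); // keeps file order
        NodeList list = doc.getElementsByTagName("property");
        for (int i = 0; i < list.getLength(); i++) {
            Element p = (Element) list.item(i);
            String name = p.getElementsByTagName("name").item(0).getTextContent().trim();
            String value = p.getElementsByTagName("value").item(0).getTextContent().trim();
            props.put(name, value); // ${...} placeholders are kept literally, not expanded
        }
        return props;
    }

    static Map<String, String> parseString(String xml) throws Exception {
        DocumentBuilder builder = DocumentBuilderFactory.newInstance().newDocumentBuilder();
        return parse(builder.parse(new ByteArrayInputStream(xml.getBytes("UTF-8"))));
    }

    public static void main(String[] args) throws Exception {
        // Defaults to the core-site.xml path used in this article; pass another path to override.
        String path = args.length > 0 ? args[0] : "/opt/hadoop/conf/core-site.xml";
        DocumentBuilder builder = DocumentBuilderFactory.newInstance().newDocumentBuilder();
        for (Map.Entry<String, String> e : parse(builder.parse(new File(path))).entrySet()) {
            System.out.println(e.getKey() + " = " + e.getValue());
        }
    }
}
```

For example, `java SiteXmlCheck /opt/hadoop/conf/mapred-site.xml` should print `mapred.job.tracker = localhost:9001`.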

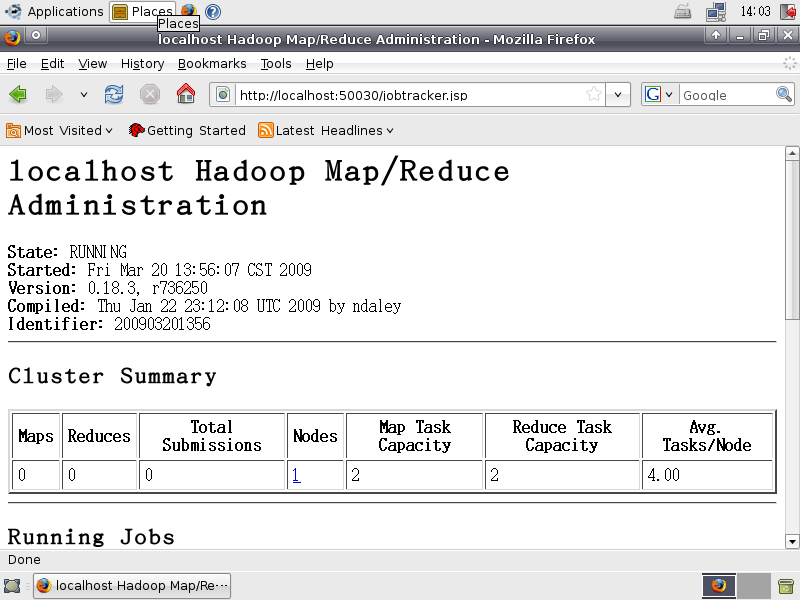

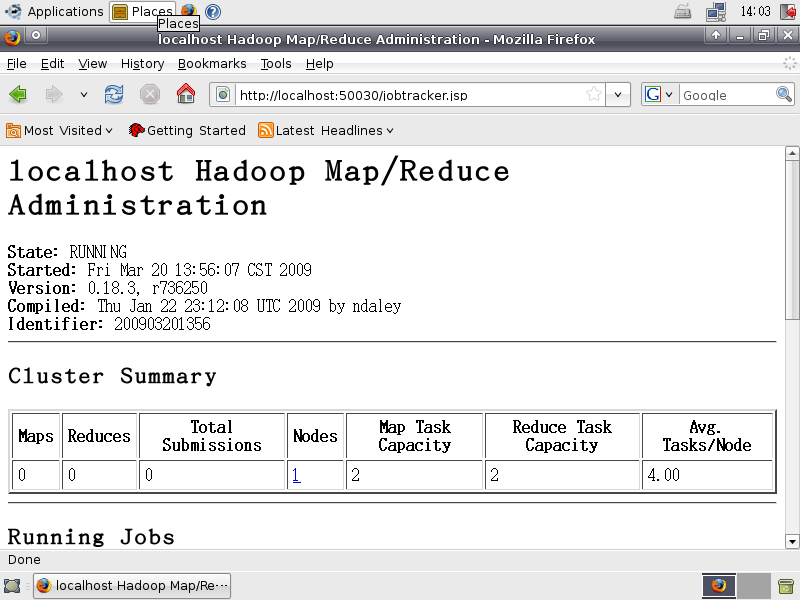

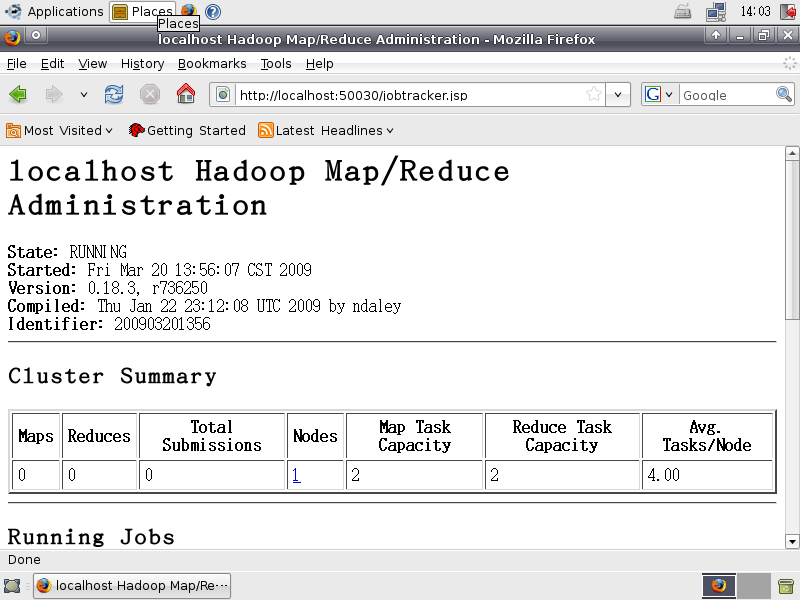

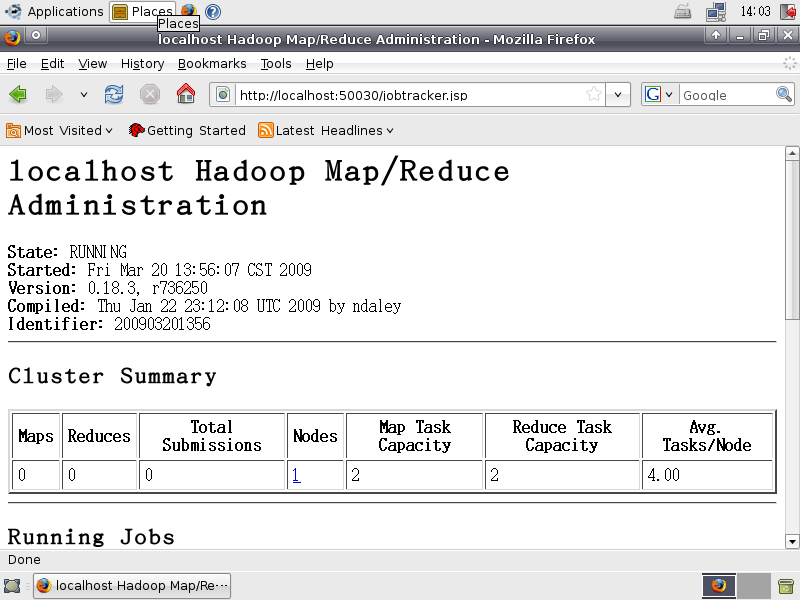

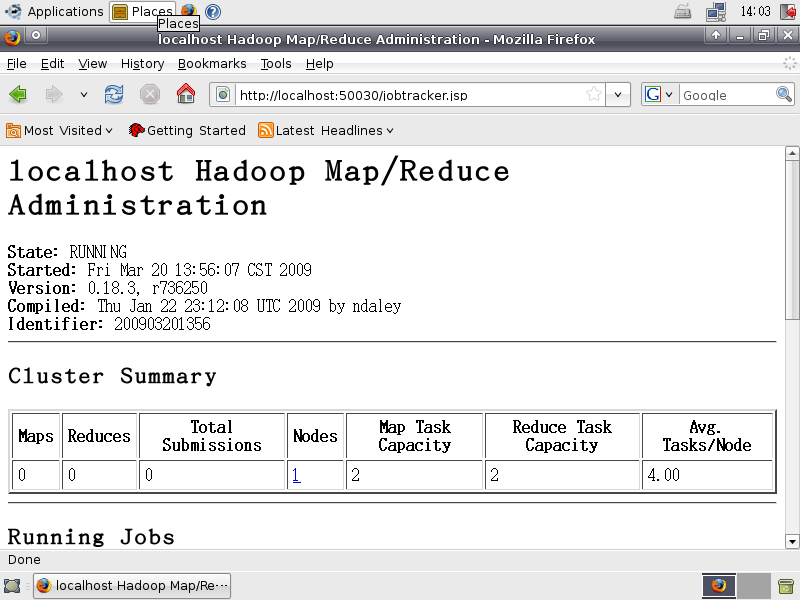

    $ cd /opt/hadoop
    $ source /opt/hadoop/conf/hadoop-env.sh
    $ hadoop namenode -format
    $ start-all.sh
    $ hadoop fs -put conf input
    $ hadoop fs -ls

- If the last command lists the input directory, Hadoop is installed and running.
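After start-all.sh, the NameNode should accept connections on port 9000 (fs.default.name) and the JobTracker on port 9001 (mapred.job.tracker). If `hadoop fs -ls` hangs or fails, a quick reachability check helps narrow down the cause. The sketch below uses plain `java.net.Socket`, and `PortCheck` is a hypothetical class. A successful connect only shows that *something* is listening on the port; it does not prove that the process is the Hadoop daemon.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical helper: reports whether a TCP port accepts connections.
class PortCheck {

    static boolean isListening(String host, int port, int timeoutMs) {
        Socket s = new Socket();
        try {
            s.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;  // connection accepted
        } catch (IOException e) {
            return false; // refused, timed out, or host unresolvable
        } finally {
            try {
                s.close();
            } catch (IOException ignored) {
                // nothing useful to do if close fails
            }
        }
    }

    public static void main(String[] args) {
        // Ports taken from core-site.xml (fs.default.name) and mapred-site.xml (mapred.job.tracker).
        int[] ports = {9000, 9001};
        String[] roles = {"NameNode (HDFS)", "JobTracker"};
        for (int i = 0; i < ports.length; i++) {
            boolean up = isListening("localhost", ports[i], 1000);
            System.out.println(roles[i] + " on localhost:" + ports[i] + " -> "
                    + (up ? "listening" : "NOT reachable"));
        }
    }
}
```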

1.4. Install Eclipse

- There are two ways to download the archive:

- Method 1: download Eclipse SDK 3.4.2 Classic and put the archive in your home directory.

- Method 2: download it with the following commands:

      $ cd ~
      $ wget http://ftp.cs.pu.edu.tw/pub/eclipse/eclipse/downloads/drops/R-3.4.2-200902111700/eclipse-SDK-3.4.2-linux-gtk.tar.gz

- Once Eclipse is in your home directory, run:

      $ cd ~
      $ tar -zxvf eclipse-SDK-3.4.2-linux-gtk.tar.gz
      $ sudo mv eclipse /opt
      $ sudo ln -sf /opt/eclipse/eclipse /usr/local/bin/

2. Creating the project

2.1 Install the Hadoop Eclipse plugin

- Import the Hadoop 0.20.0 Eclipse plugin:

      $ cd /opt/hadoop
      $ sudo cp /opt/hadoop/contrib/eclipse-plugin/hadoop-0.20.0-eclipse-plugin.jar /opt/eclipse/plugins

$ sudo vim /opt/eclipse/eclipse.ini

- For reference, eclipse.ini can be adjusted as follows (optional):

      -startup
      plugins/org.eclipse.equinox.launcher_1.0.101.R34x_v20081125.jar
      --launcher.library
      plugins/org.eclipse.equinox.launcher.gtk.linux.x86_1.0.101.R34x_v20080805
      -showsplash
      org.eclipse.platform
      --launcher.XXMaxPermSize
      512m
      -vmargs
      -Xms40m
      -Xmx512m

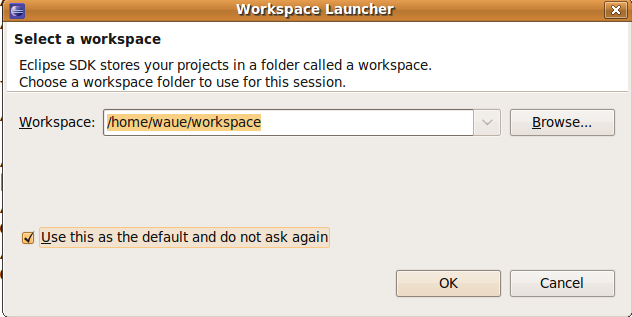

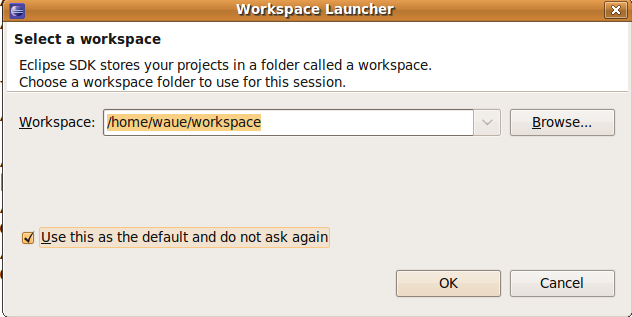

2.2 Launch Eclipse

- Start Eclipse:

$ eclipse &

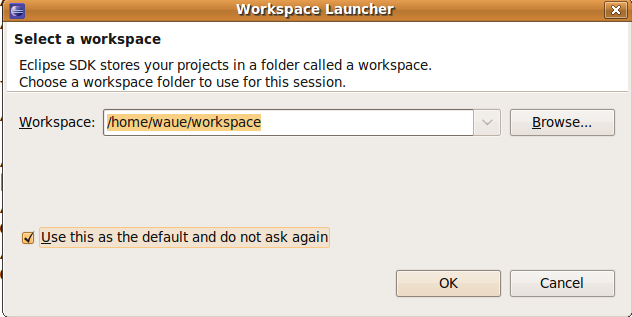

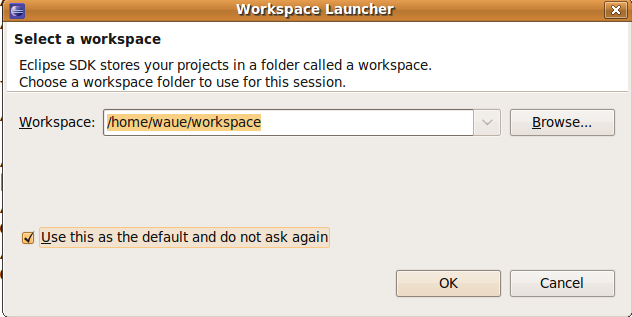

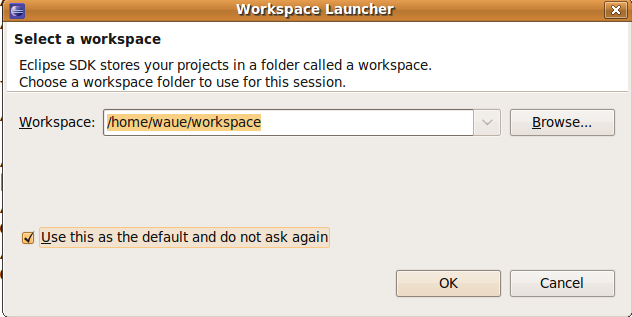

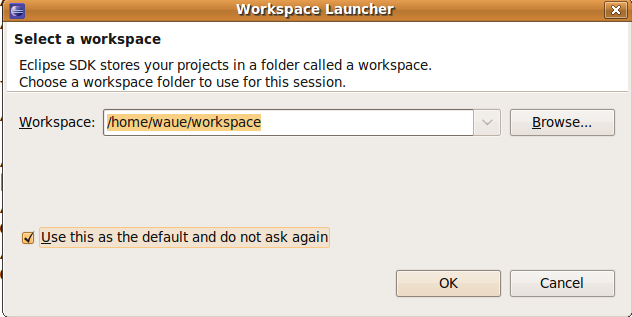

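On first launch, Eclipse asks where to put the workspace. Here I use the default.

PS: All of the following steps are done in the Eclipse GUI.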

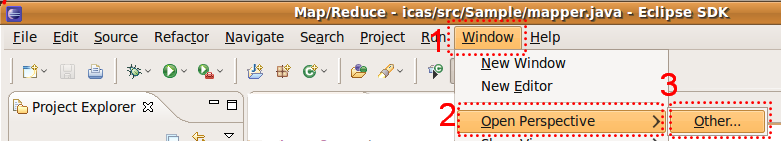

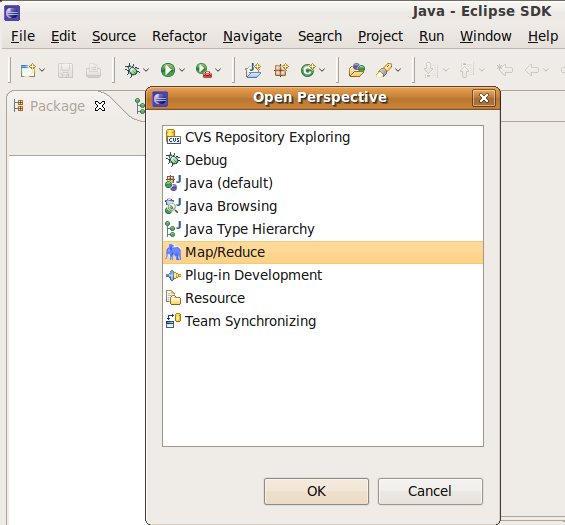

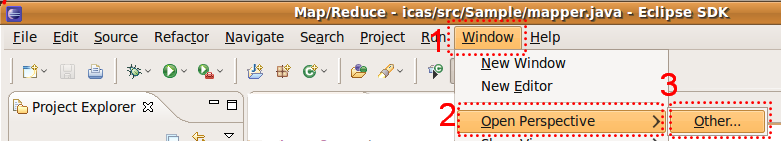

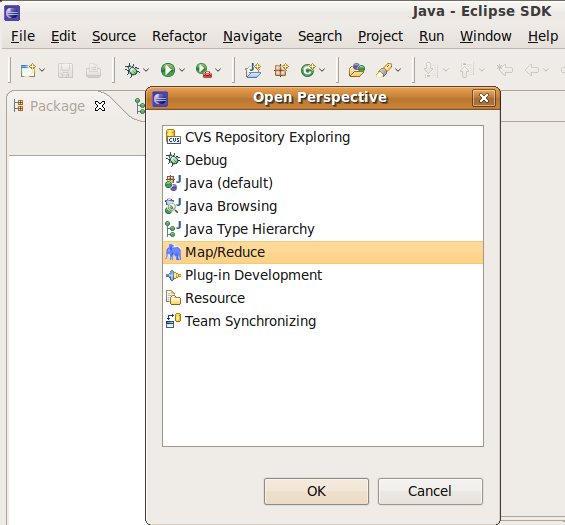

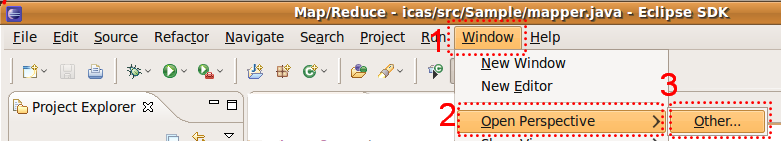

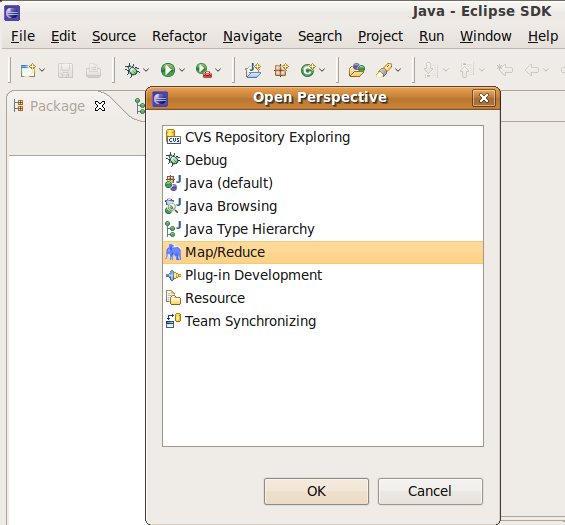

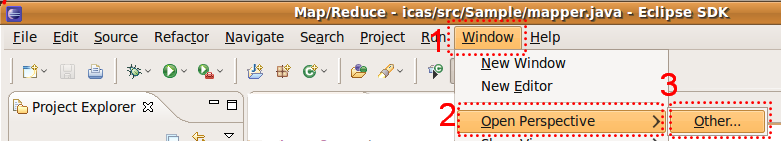

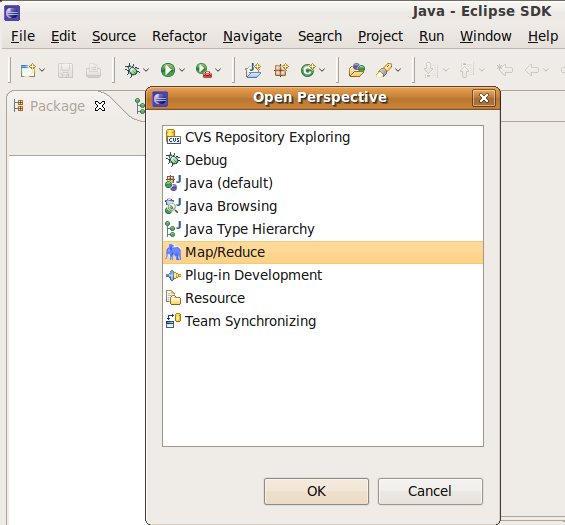

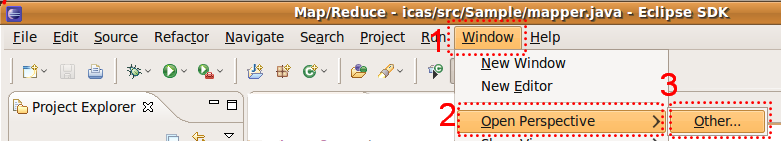

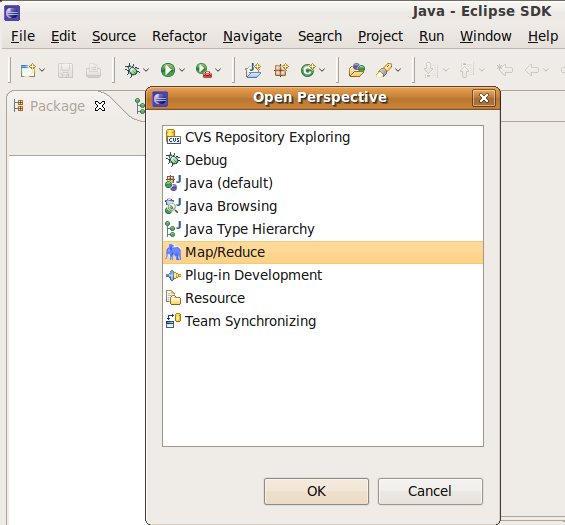

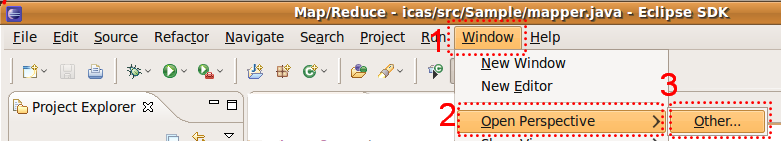

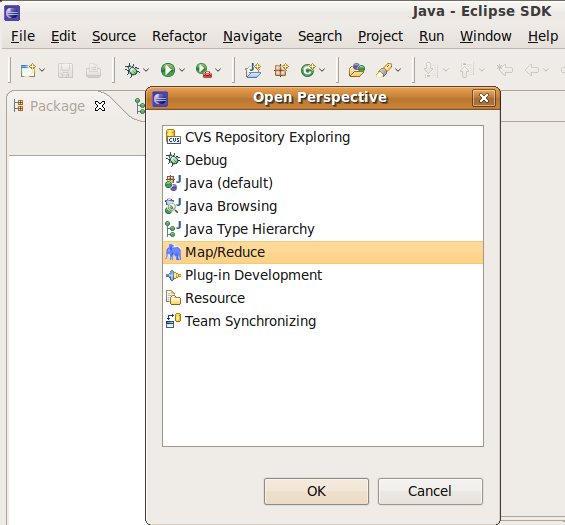

2.3 Choose the perspective

window -> open pers.. -> other.. -> map/reduce

Set Eclipse to use the Map/Reduce perspective.

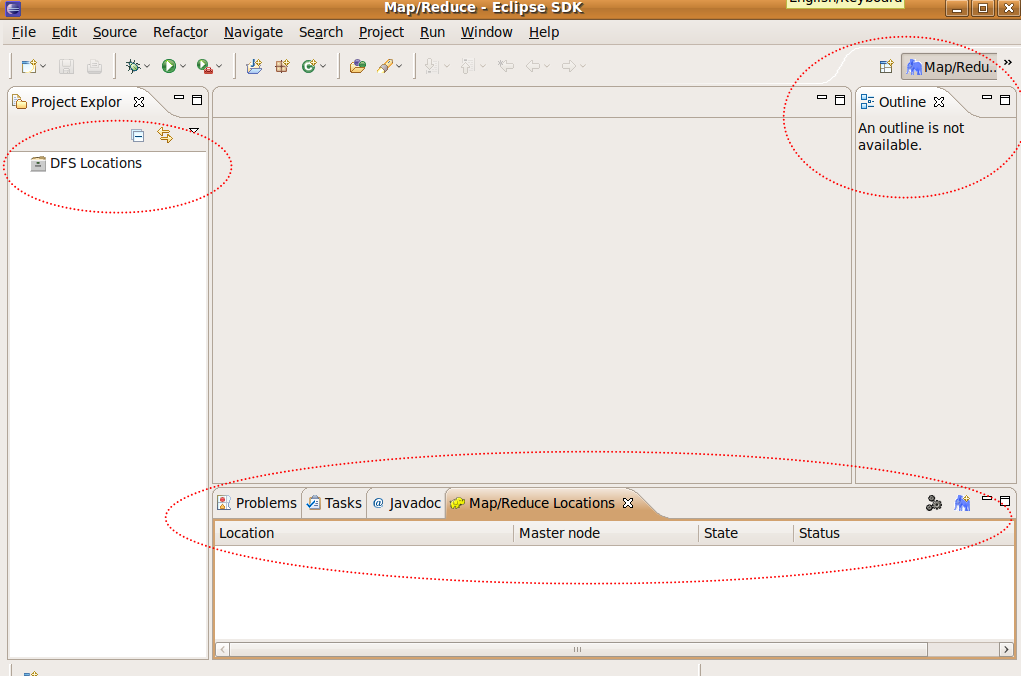

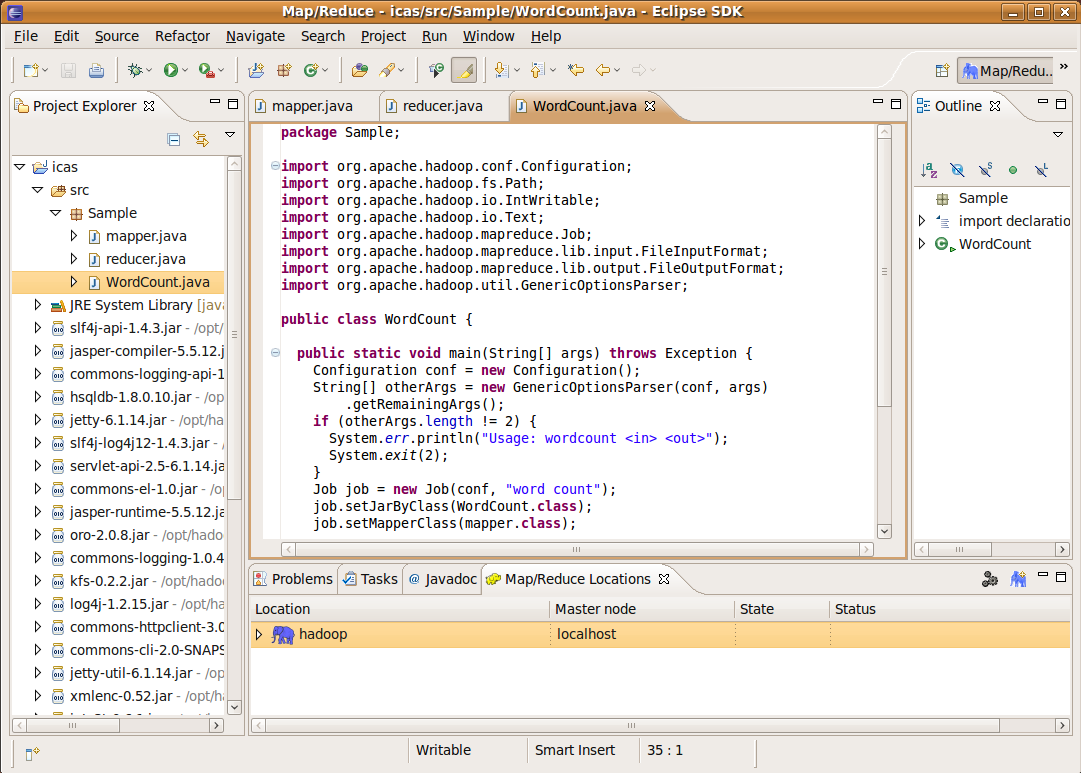

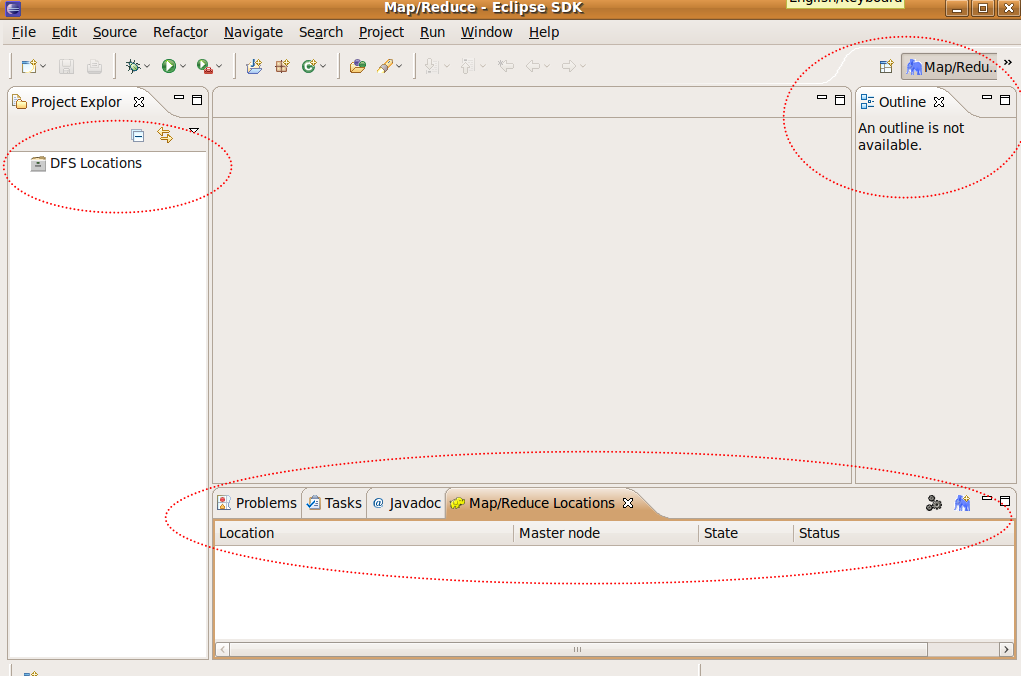

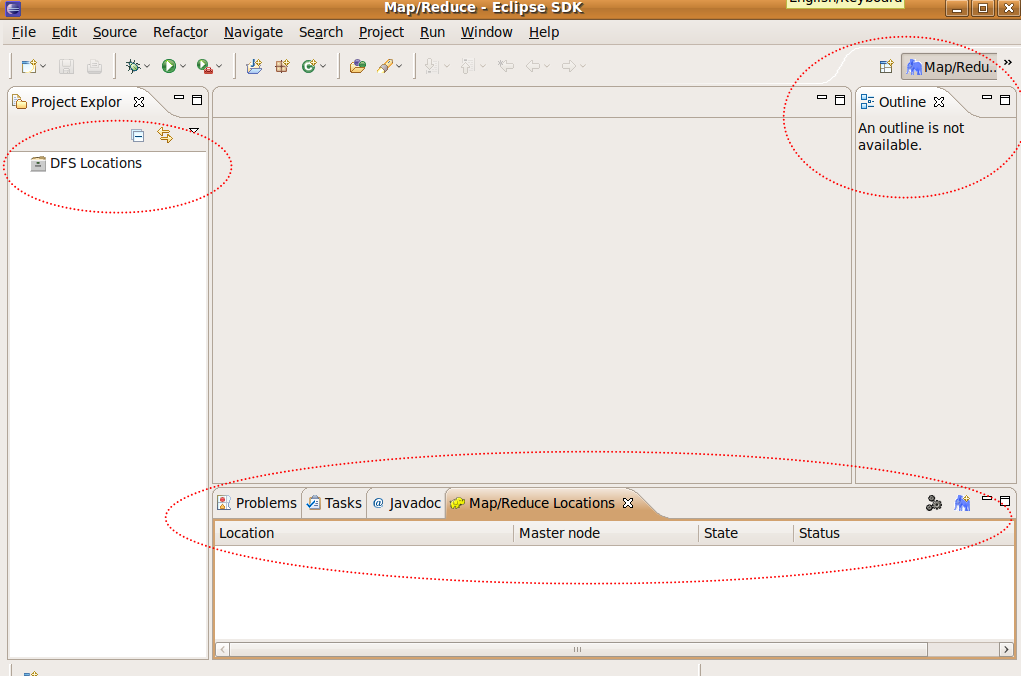

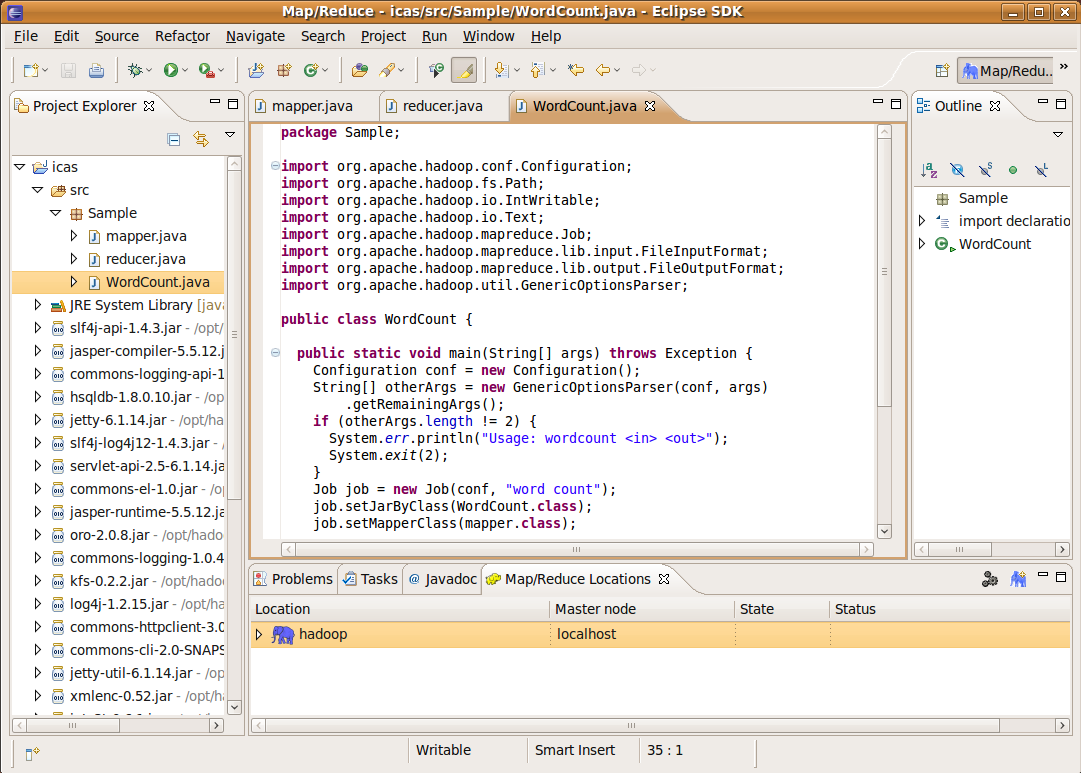

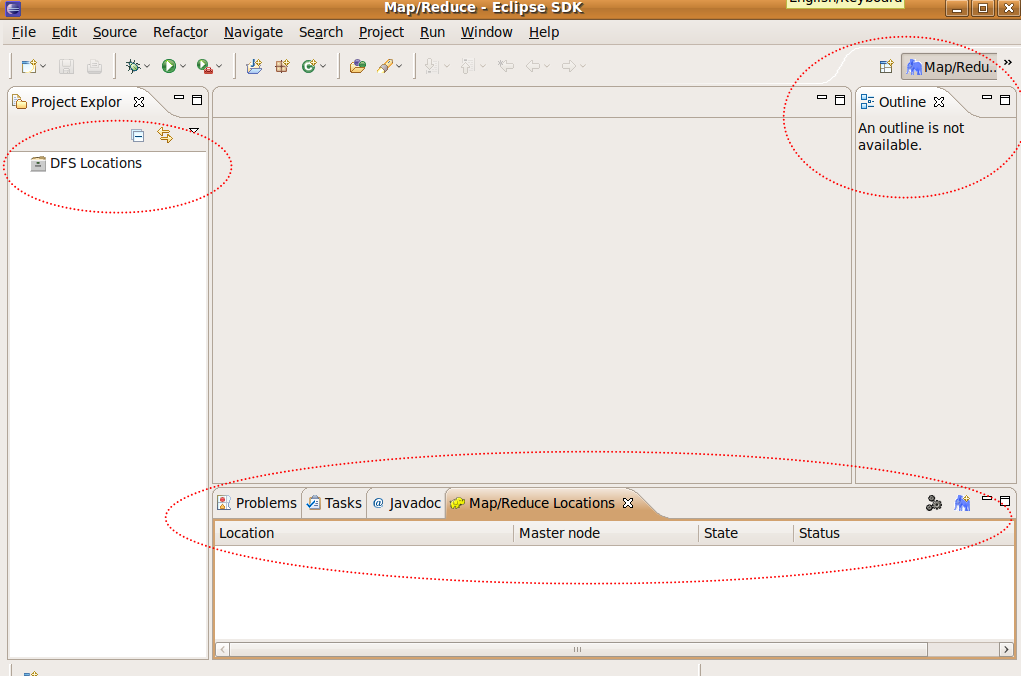

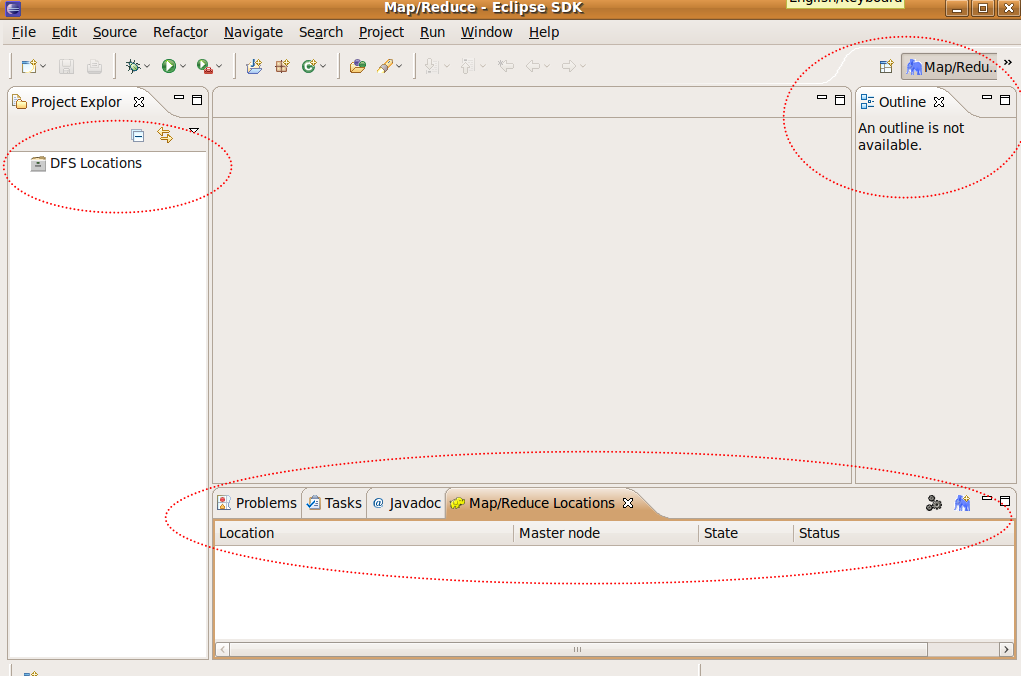

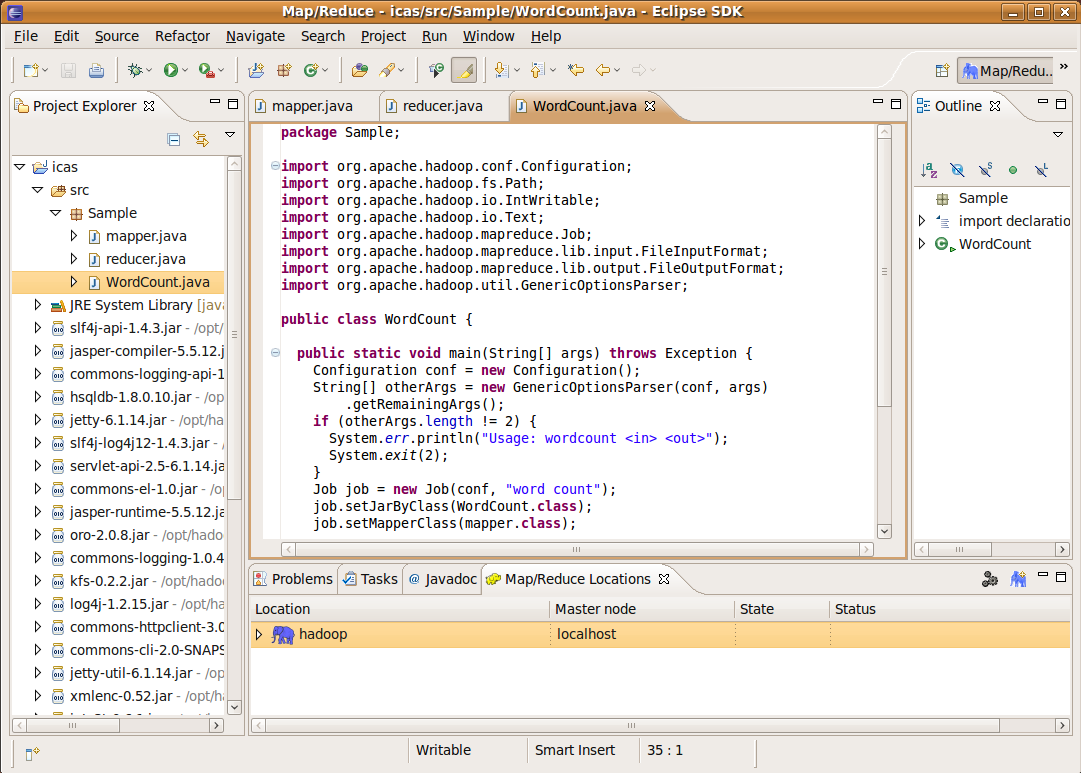

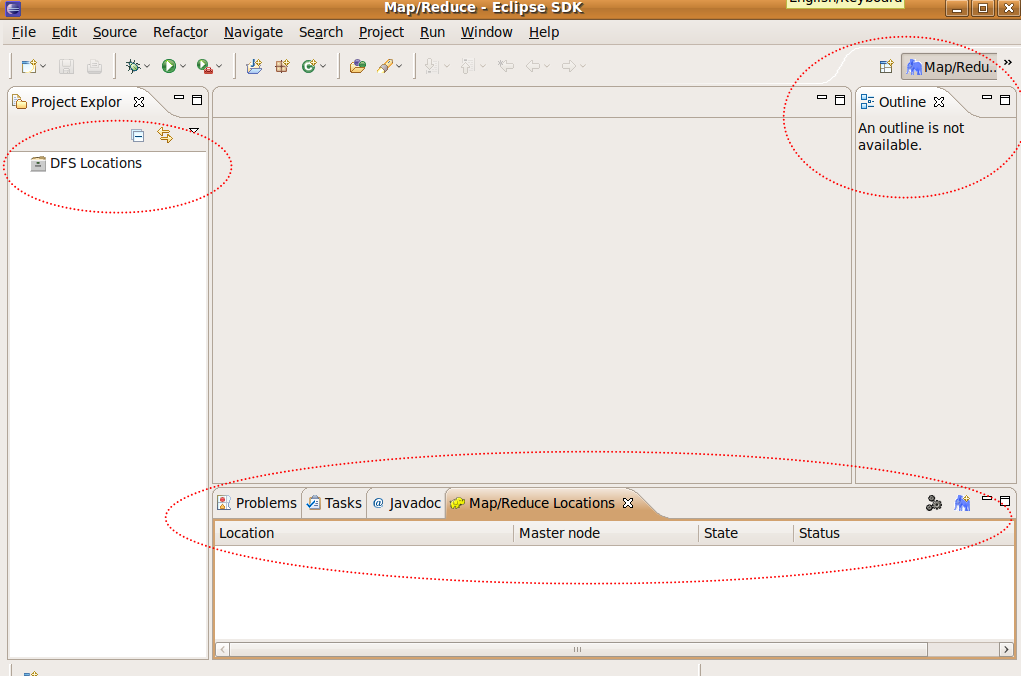

The interface after switching to the Map/Reduce perspective:

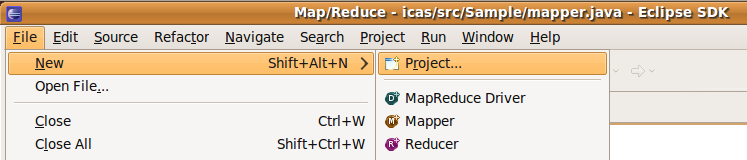

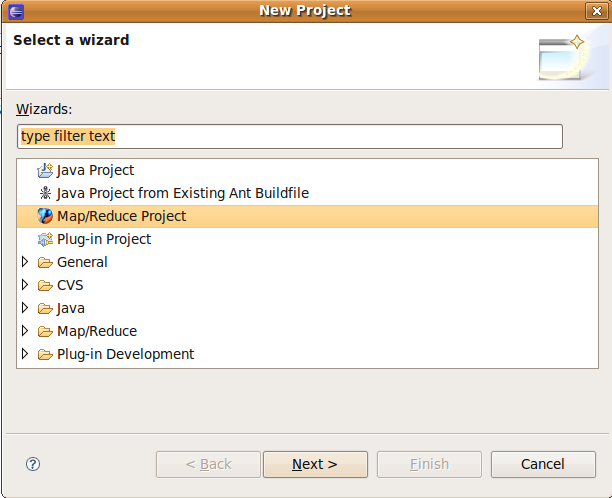

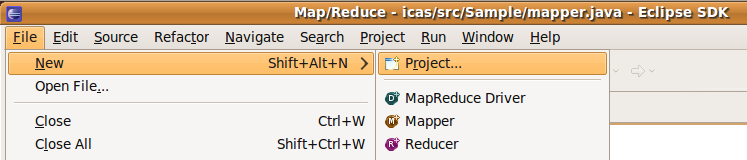

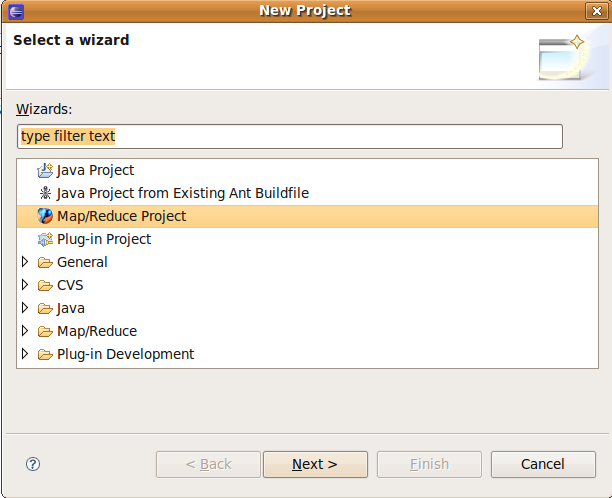

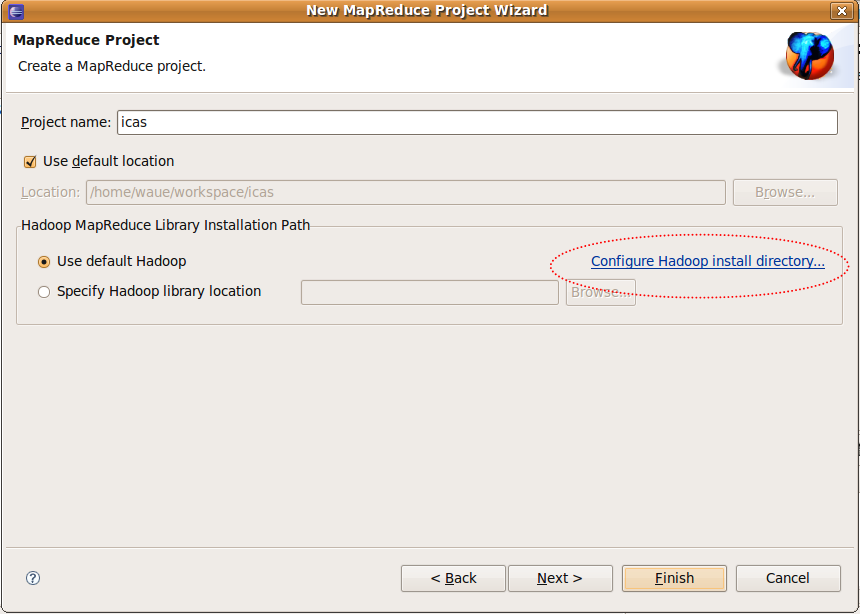

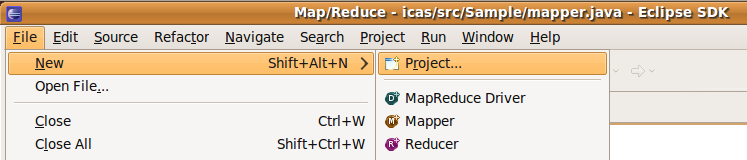

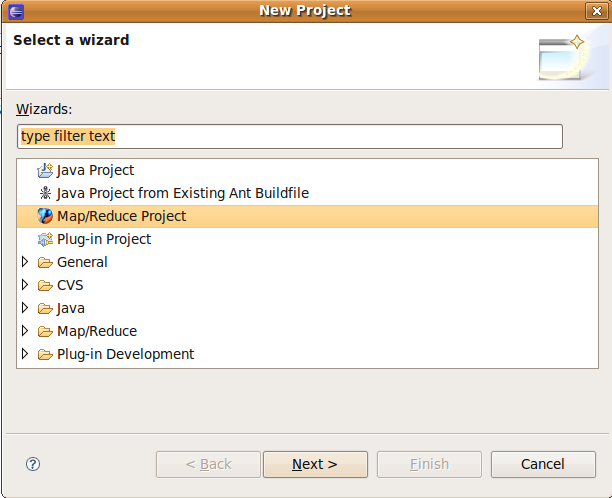

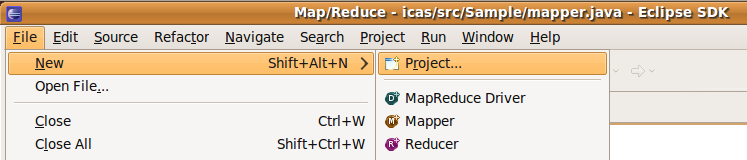

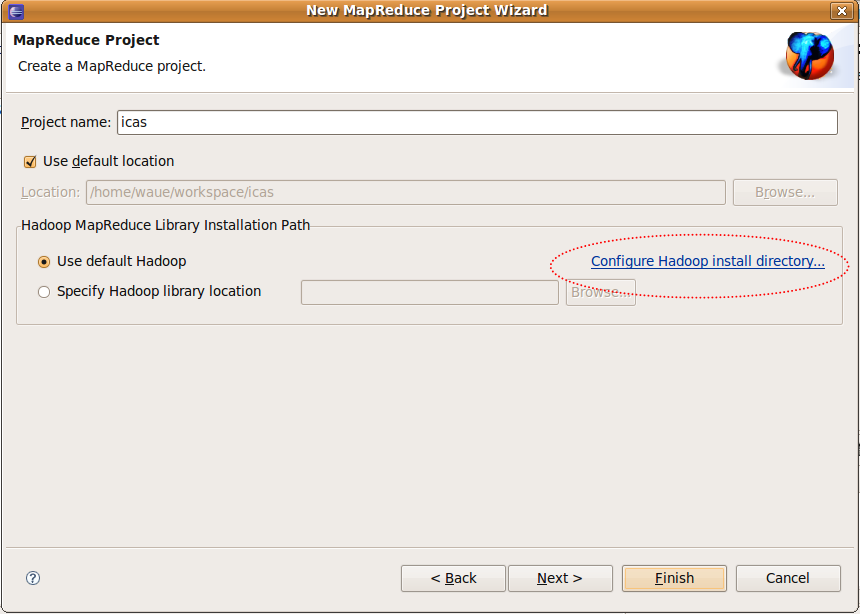

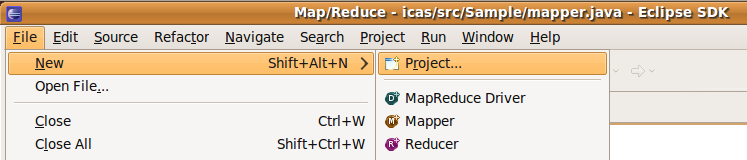

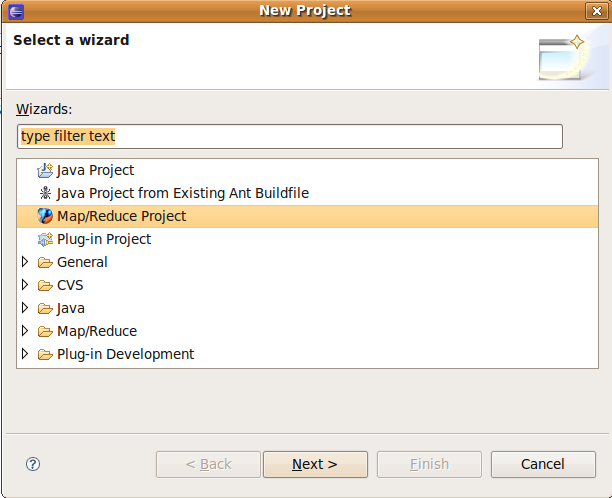

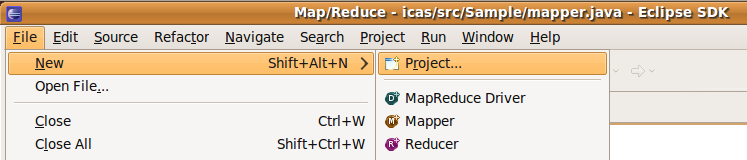

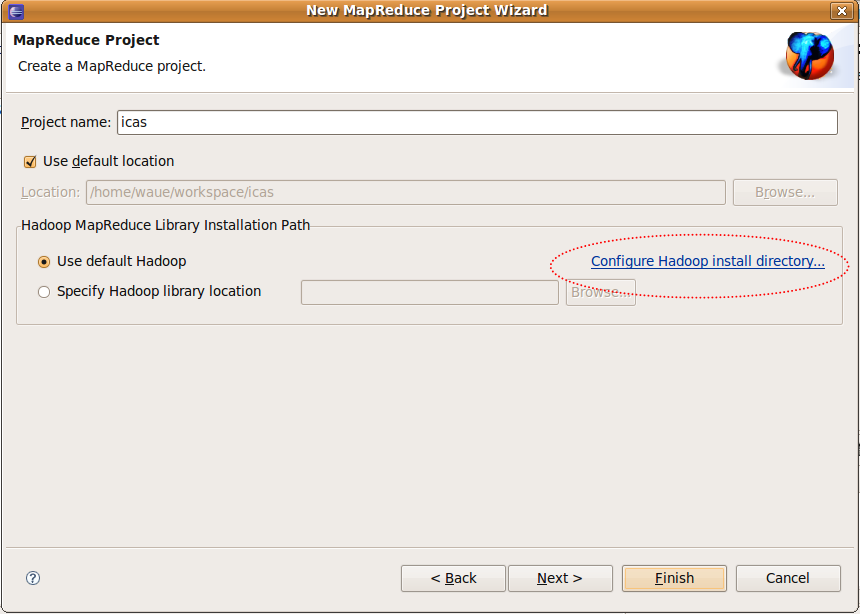

2.4 Create the project

file -> new -> project -> Map/Reduce -> Map/Reduce Project -> next

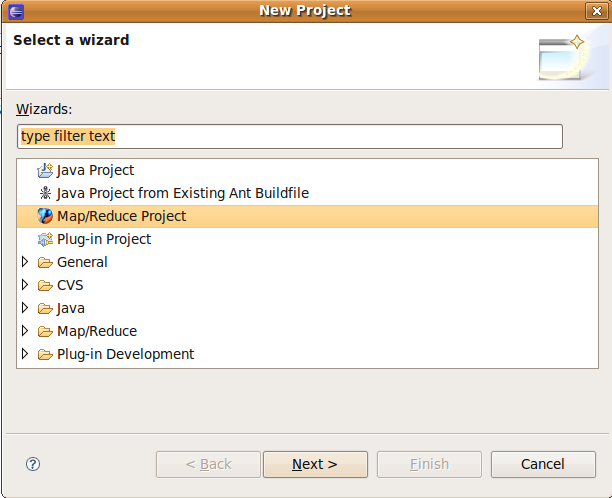

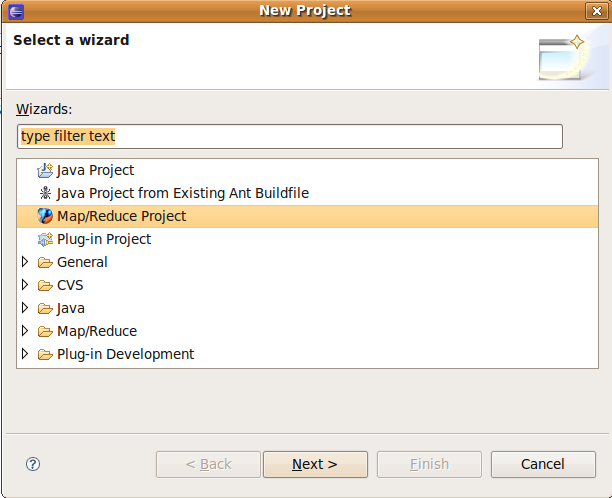

Creating the MapReduce project (1)

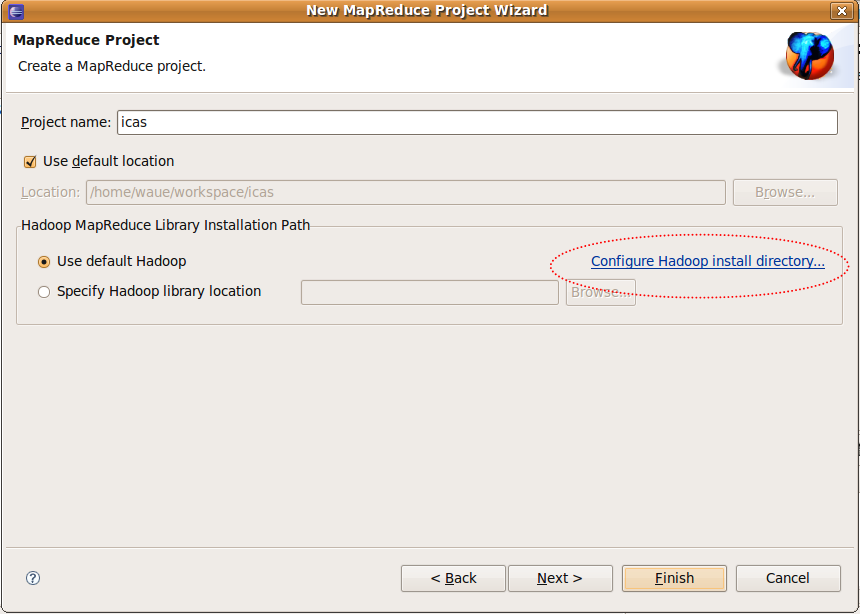

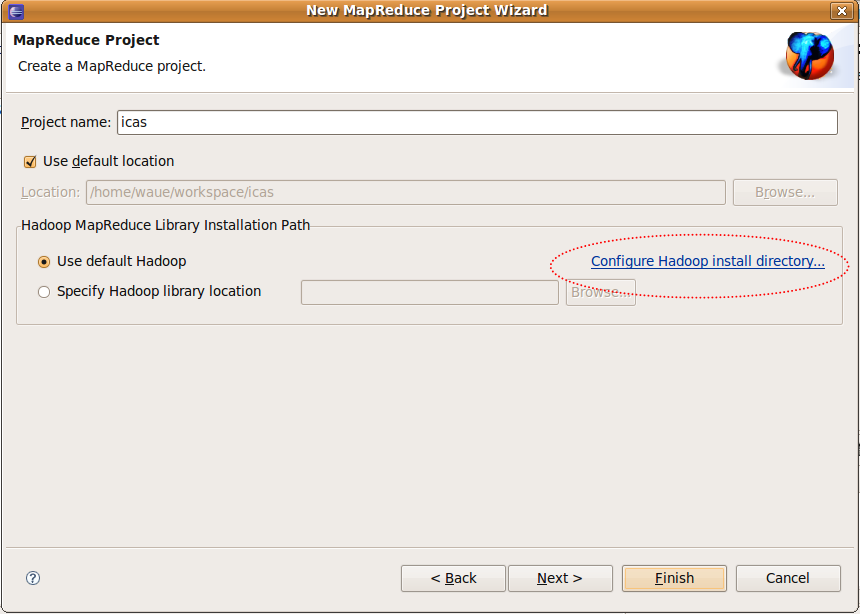

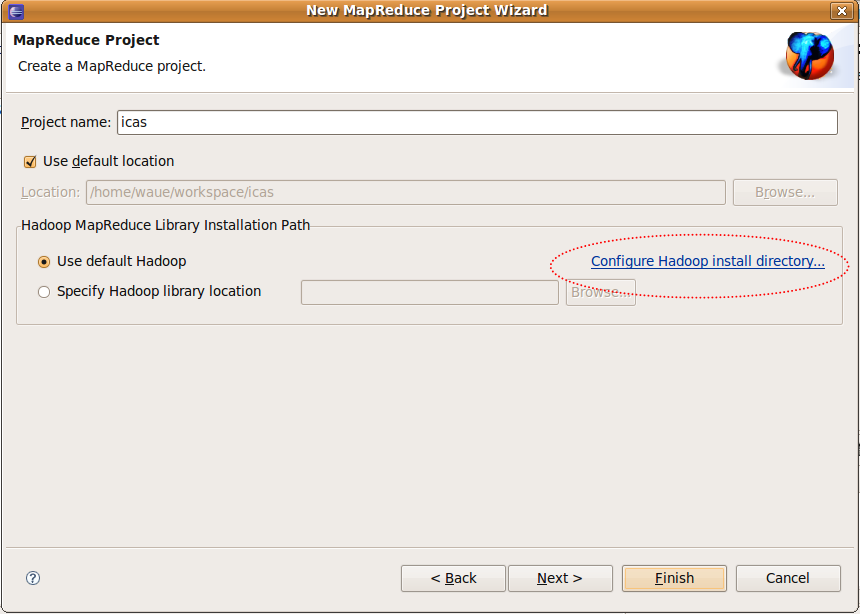

Creating the MapReduce project (2)

- project name -> enter: icas (any name will do)

- use default hadoop -> Configure Hadoop install... -> enter: "/opt/hadoop" -> ok

- Finish

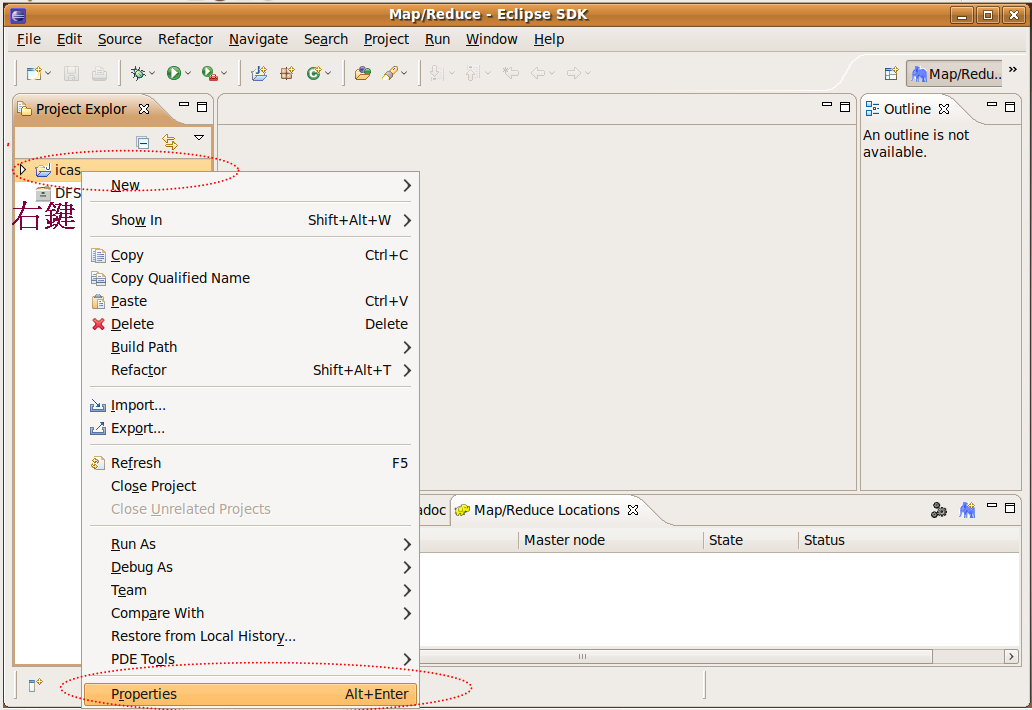

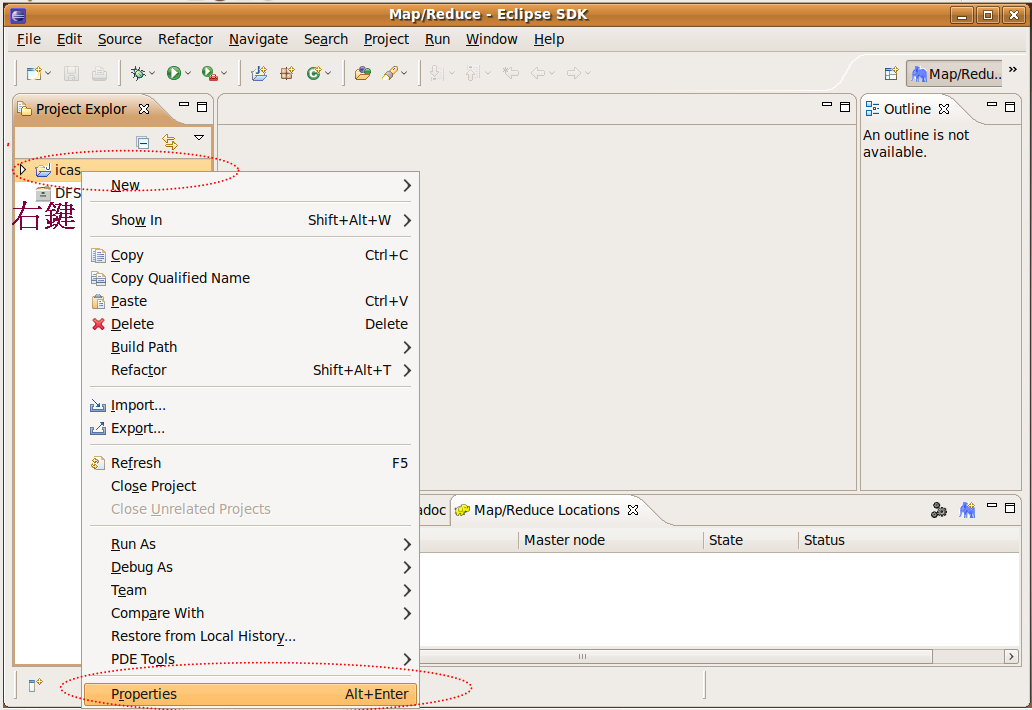

2.5 Configure the project

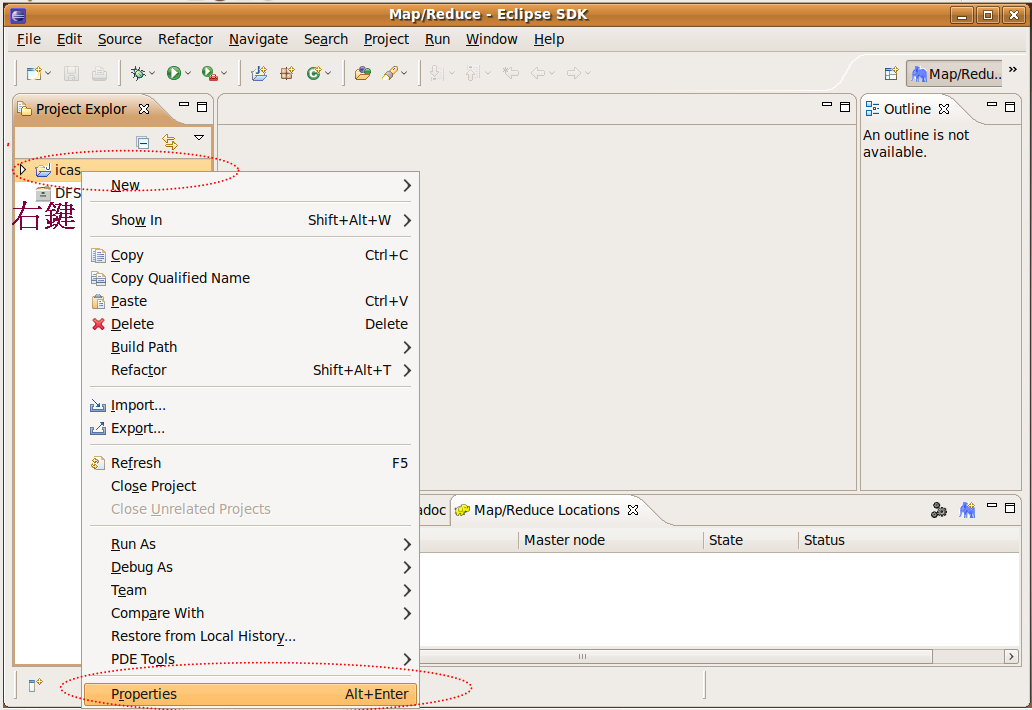

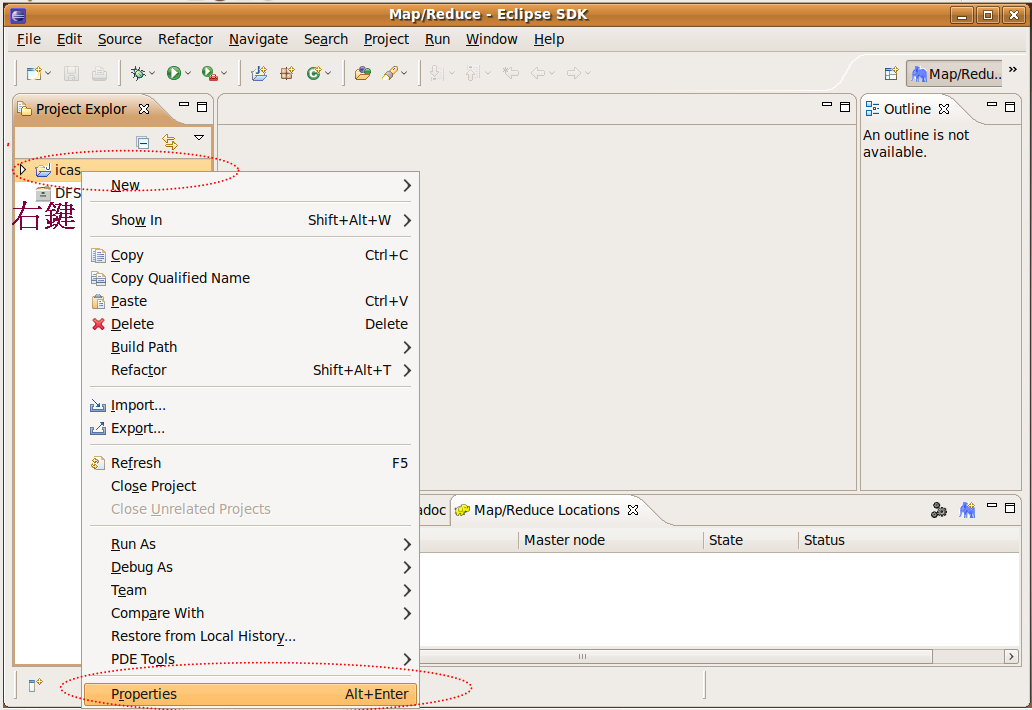

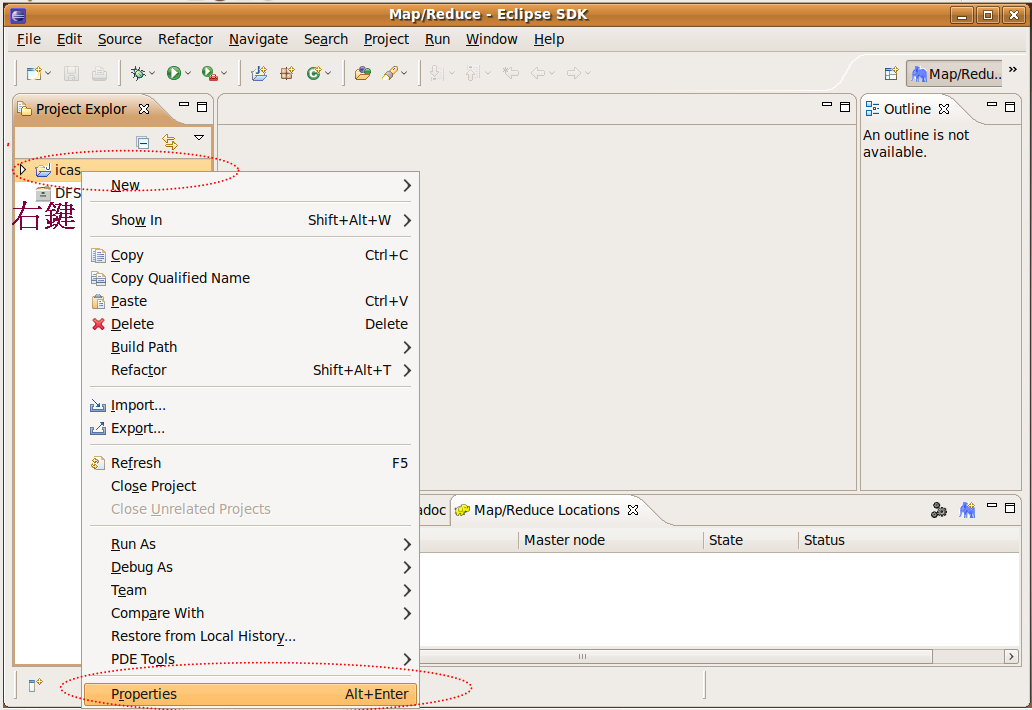

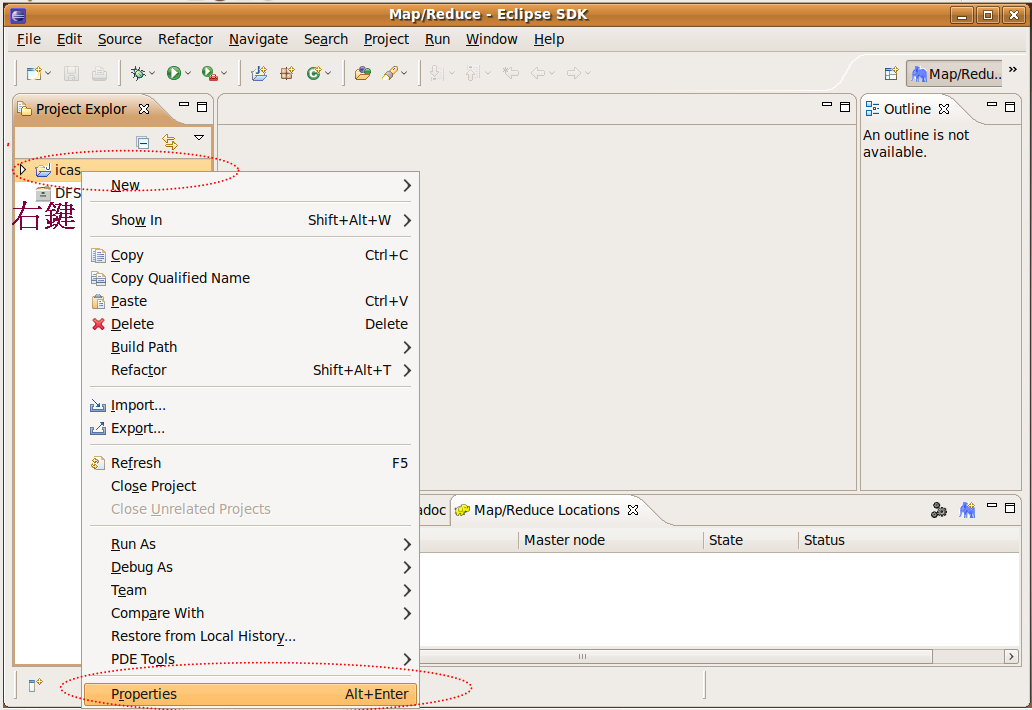

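Because we just created the icas project, Eclipse now shows it in the left-hand pane. Right-click the project folder and choose Properties.

Step1. Right-click the project and open Properties to configure the details.

Step2. Enter the project's detailed settings.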

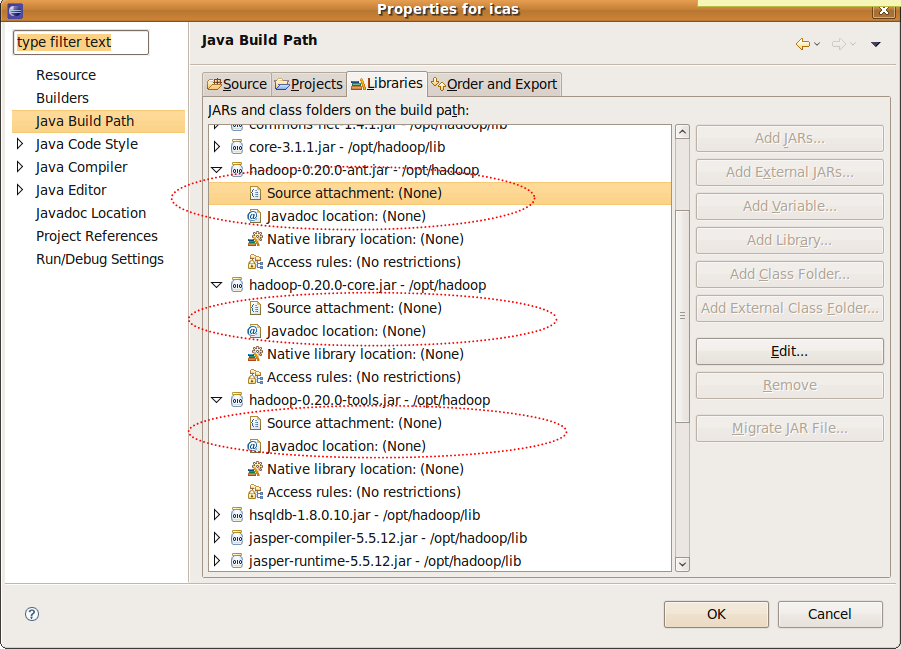

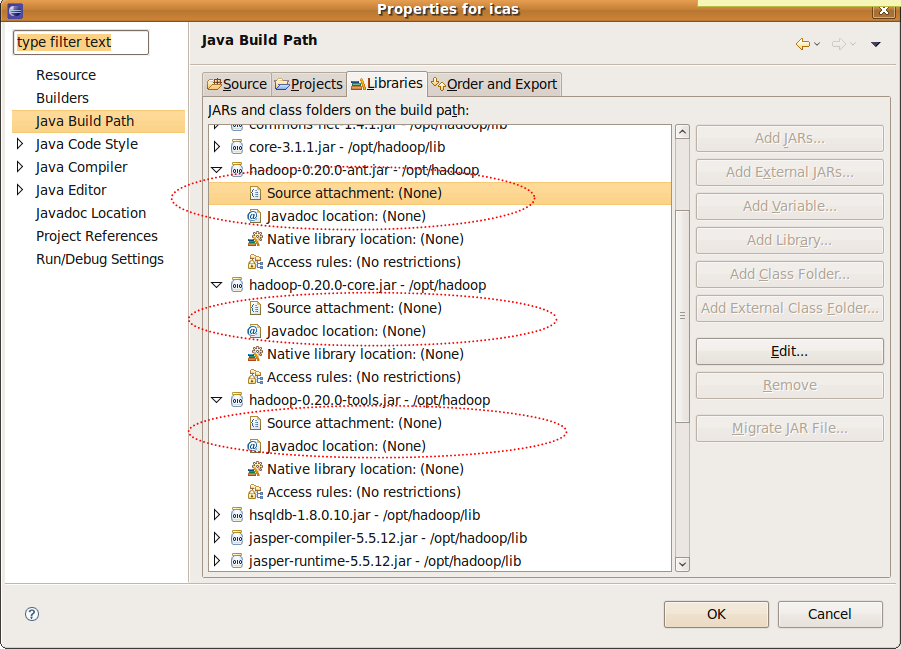

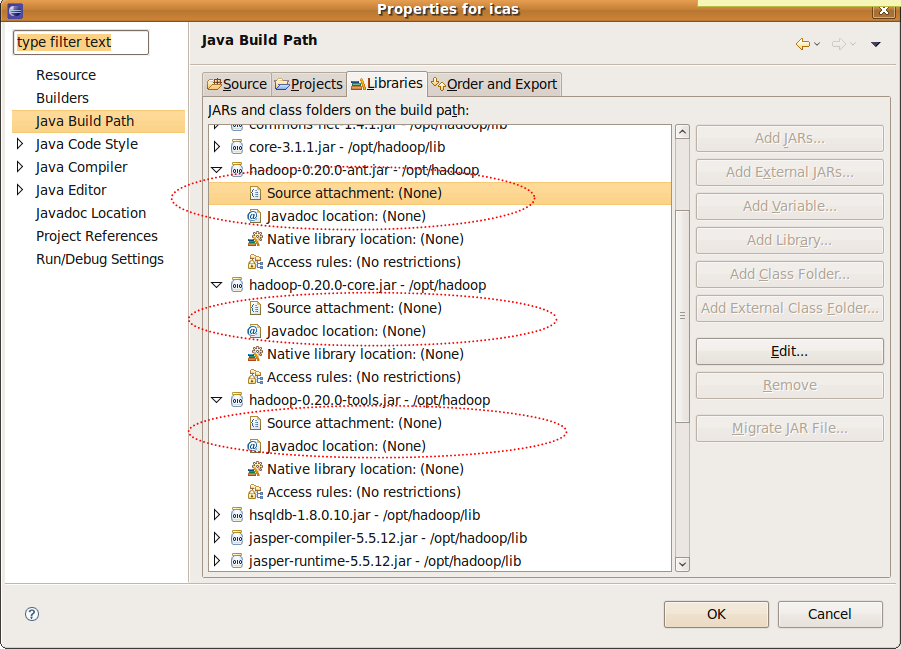

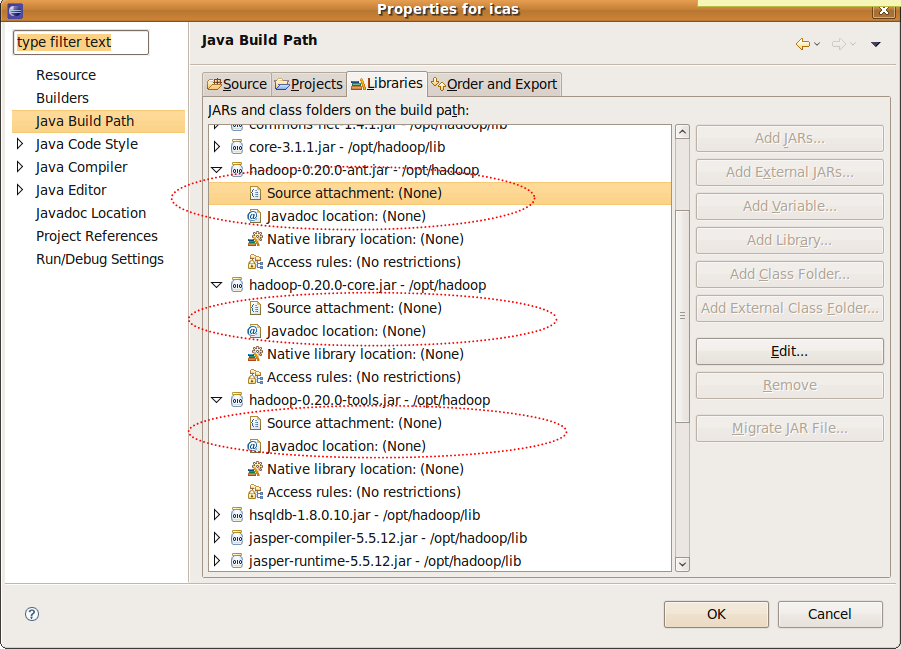

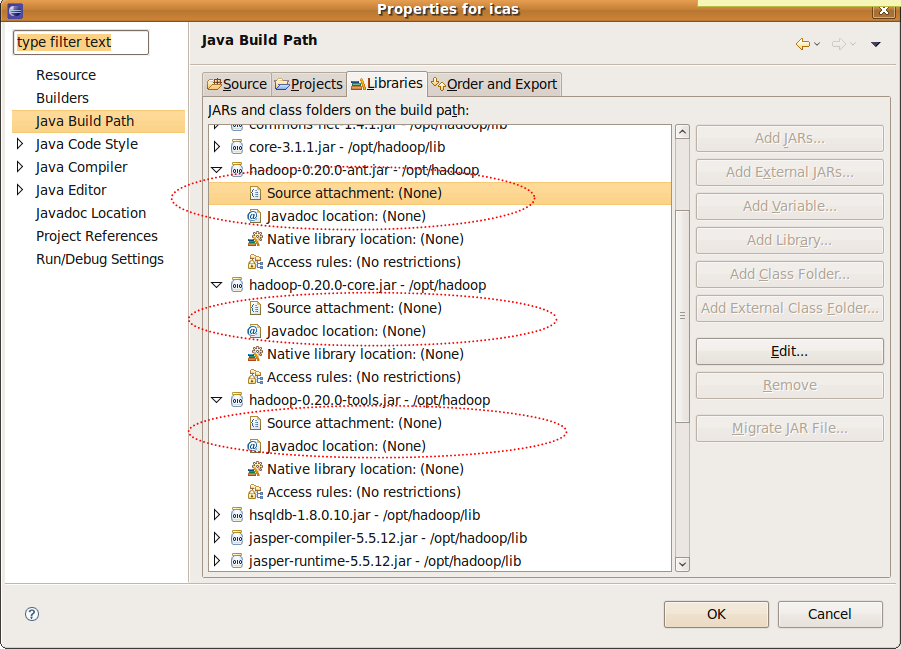

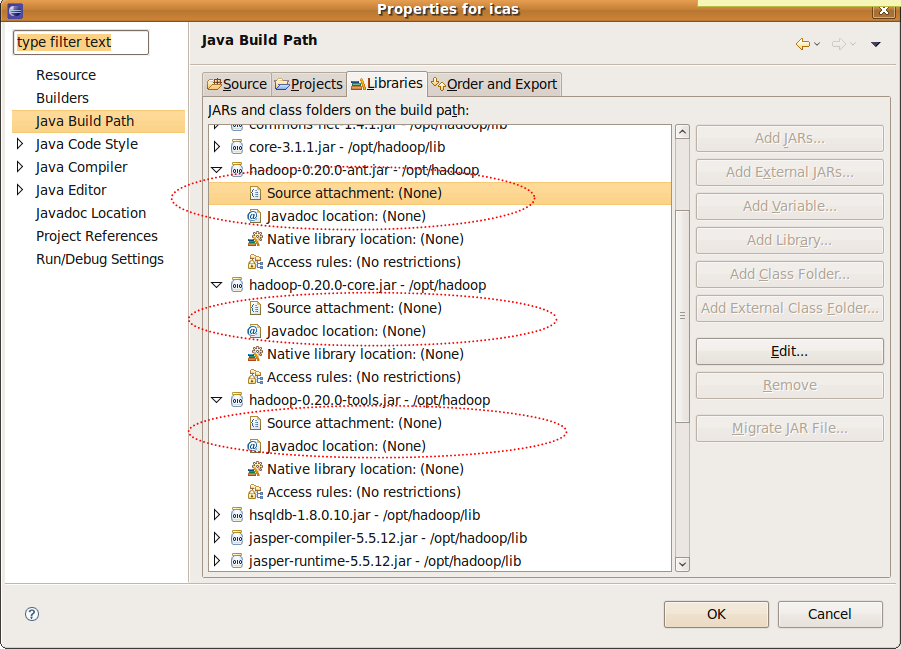

Hadoop javadoc settings (1)

- java Build Path -> Libraries -> hadoop-0.20.0-ant.jar

- java Build Path -> Libraries -> hadoop-0.20.0-core.jar

- java Build Path -> Libraries -> hadoop-0.20.0-tools.jar

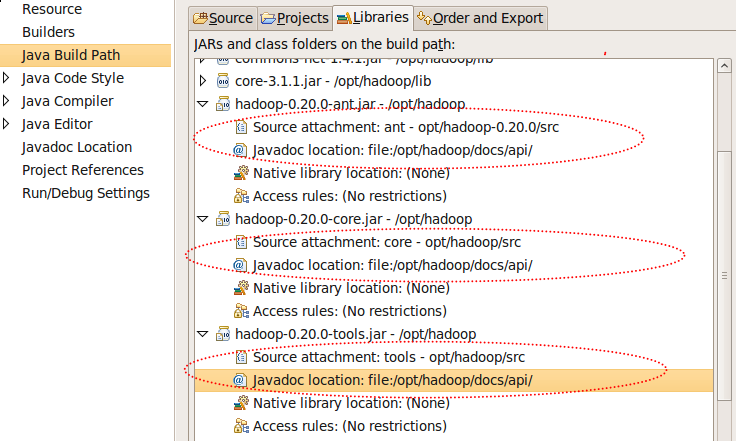

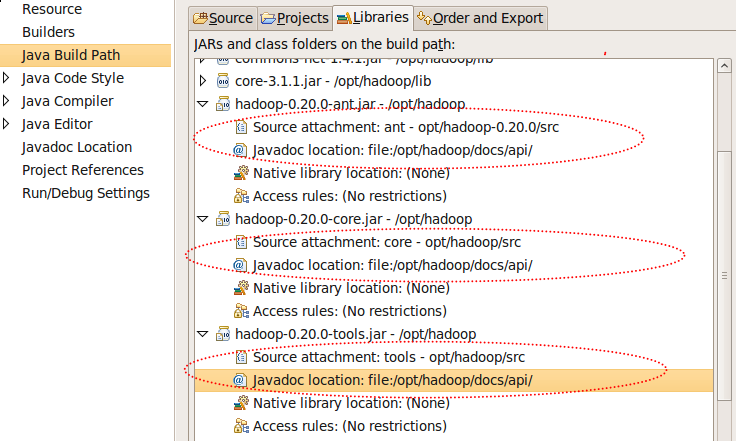

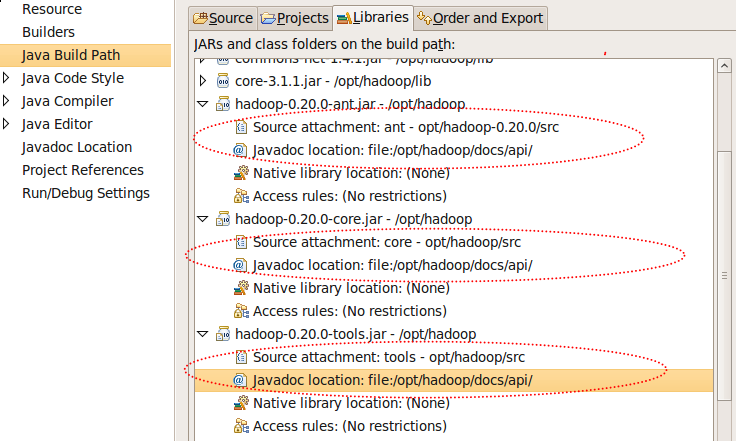

- The settings for hadoop-0.20.0-core.jar are shown below. Configure the other jars the same way.

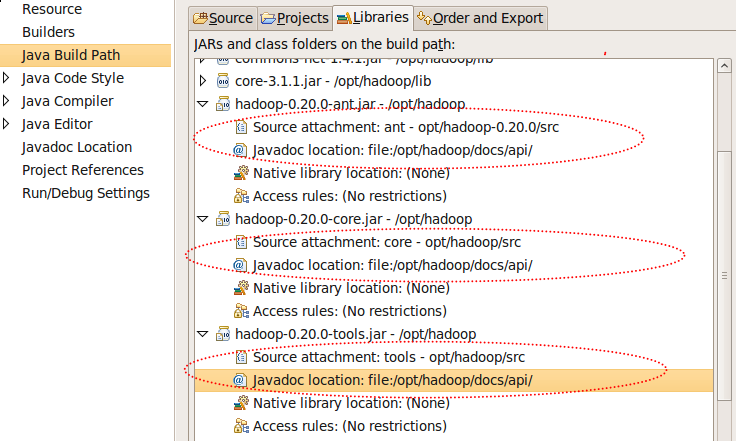

- source ... -> enter: /opt/hadoop-0.20.0/src

- javadoc ... -> enter: file:/opt/hadoop/docs/api/

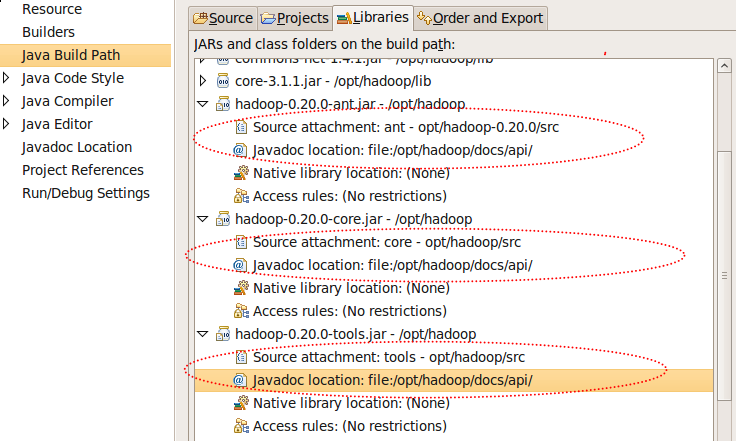

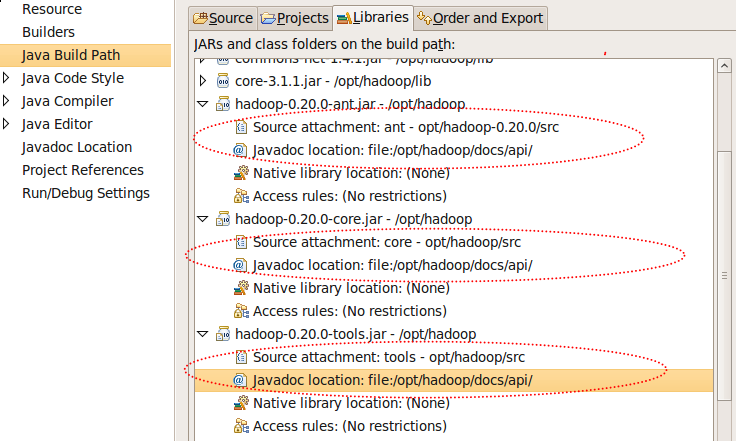

Step3. The completed Hadoop javadoc settings (2)

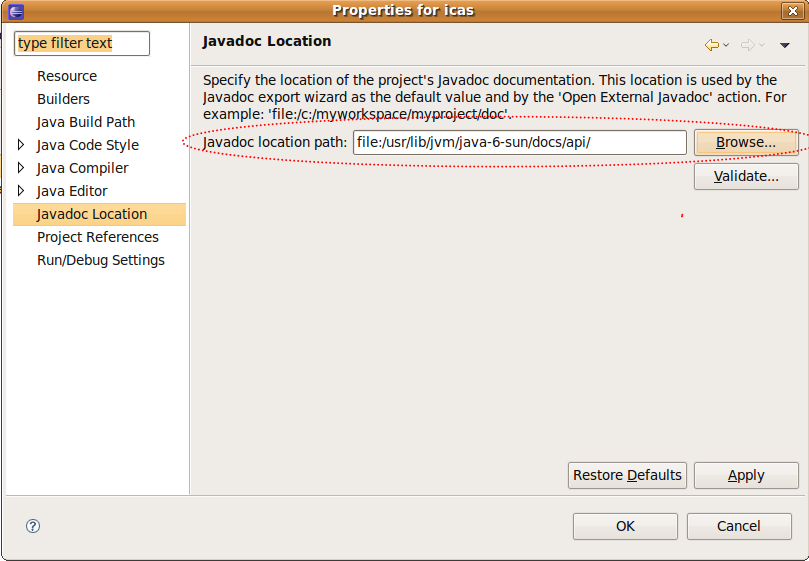

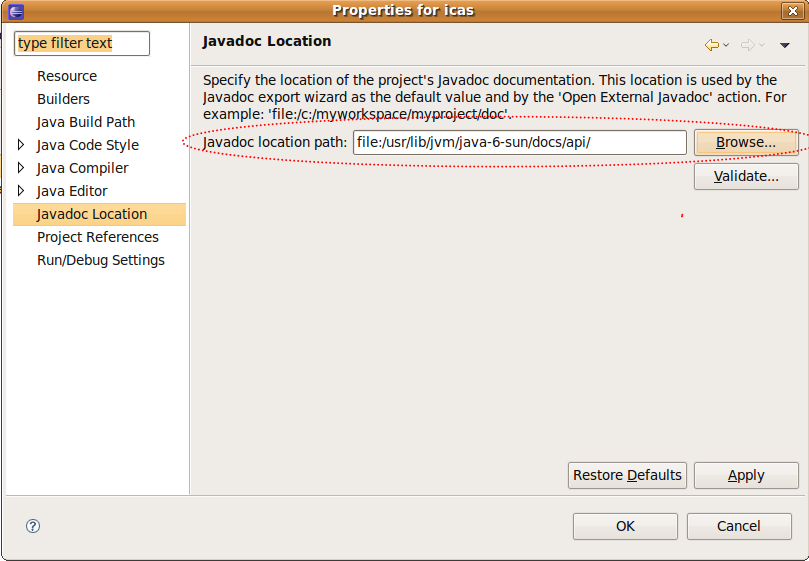

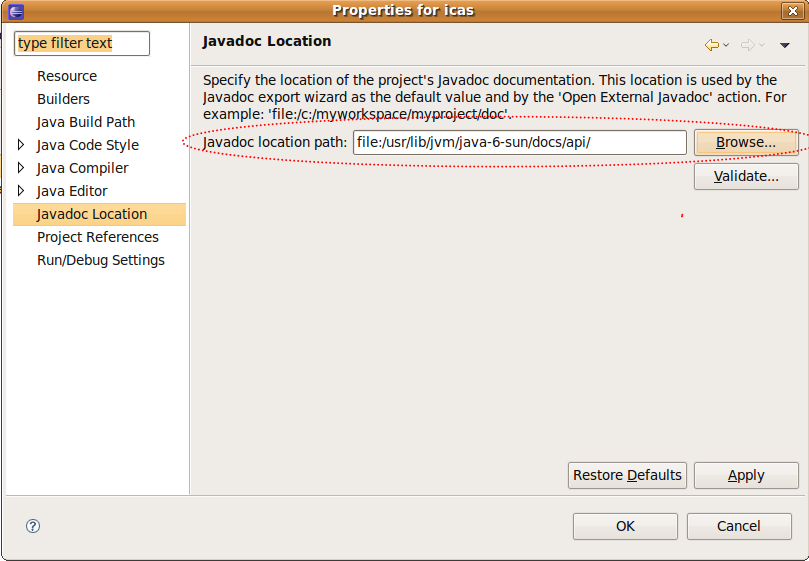

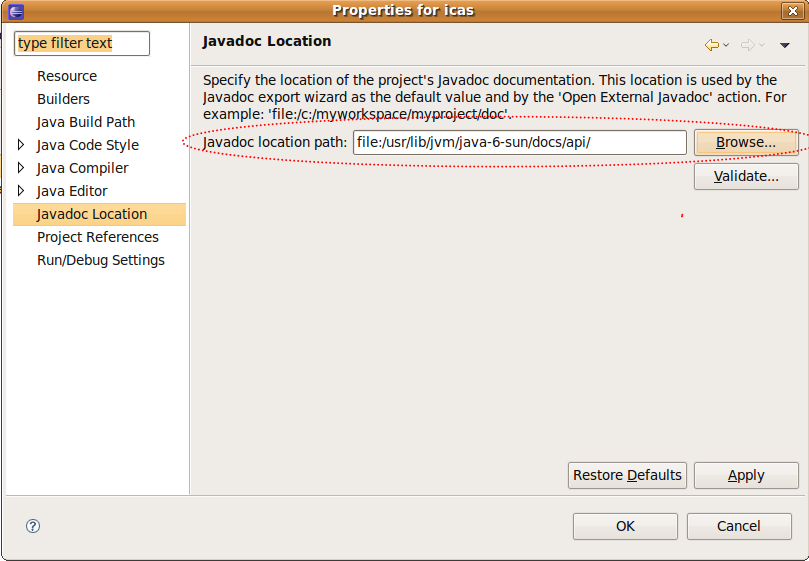

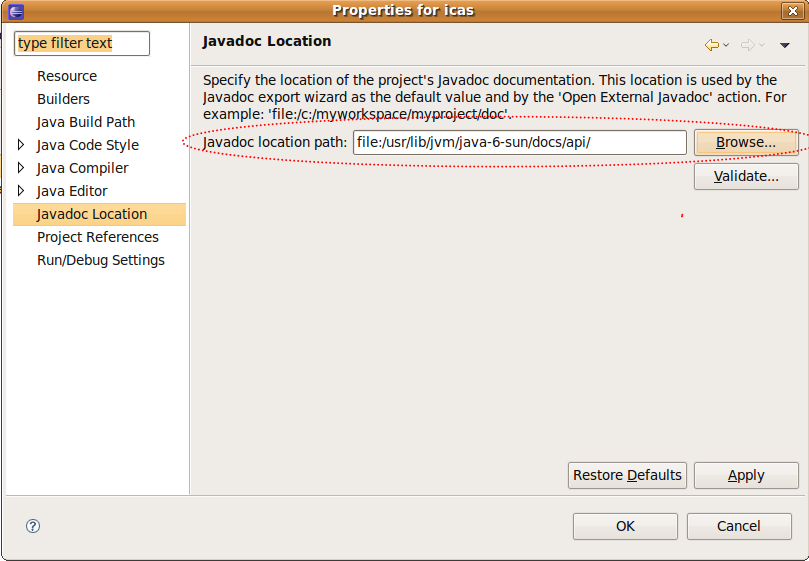

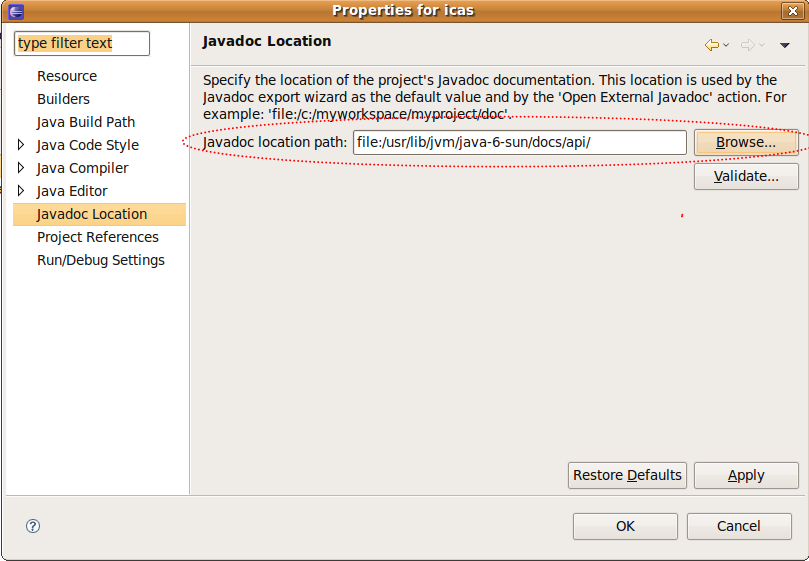

Step4. Javadoc settings for Java itself (3)

- javadoc location -> enter: file:/usr/lib/jvm/java-6-sun/docs/api/

When you are done, return to the Eclipse main window.

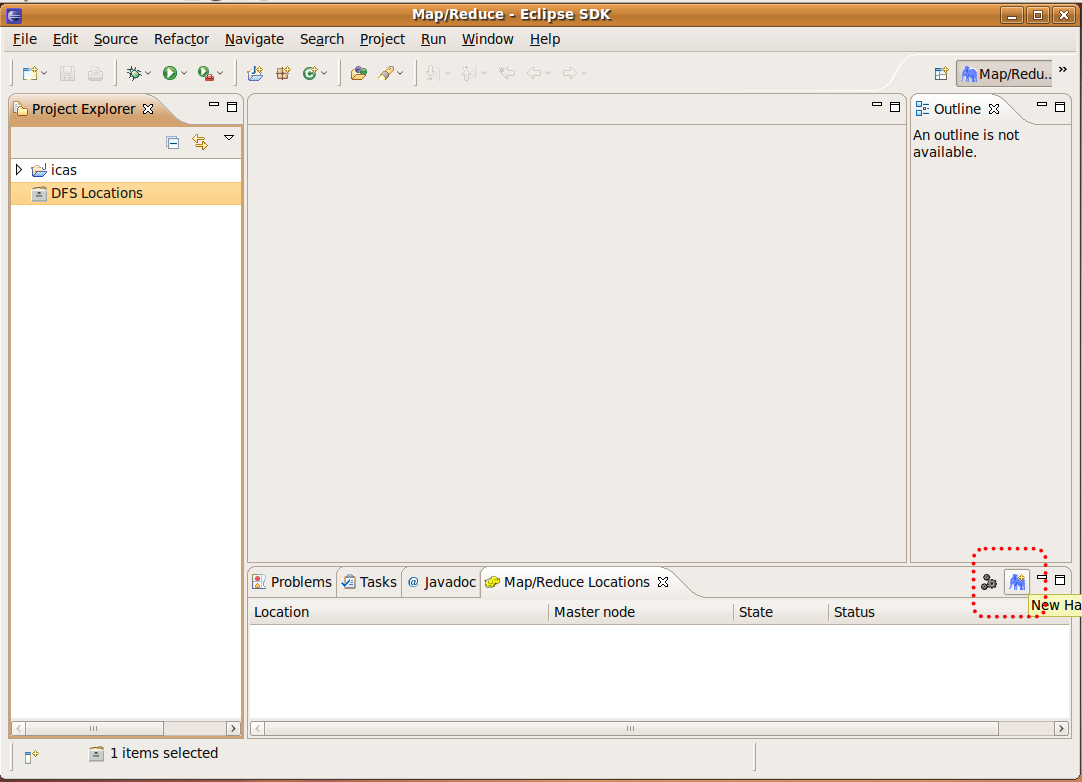

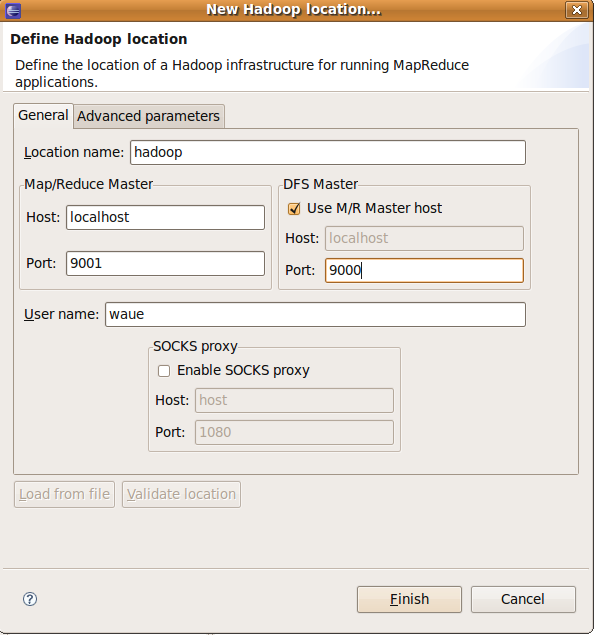

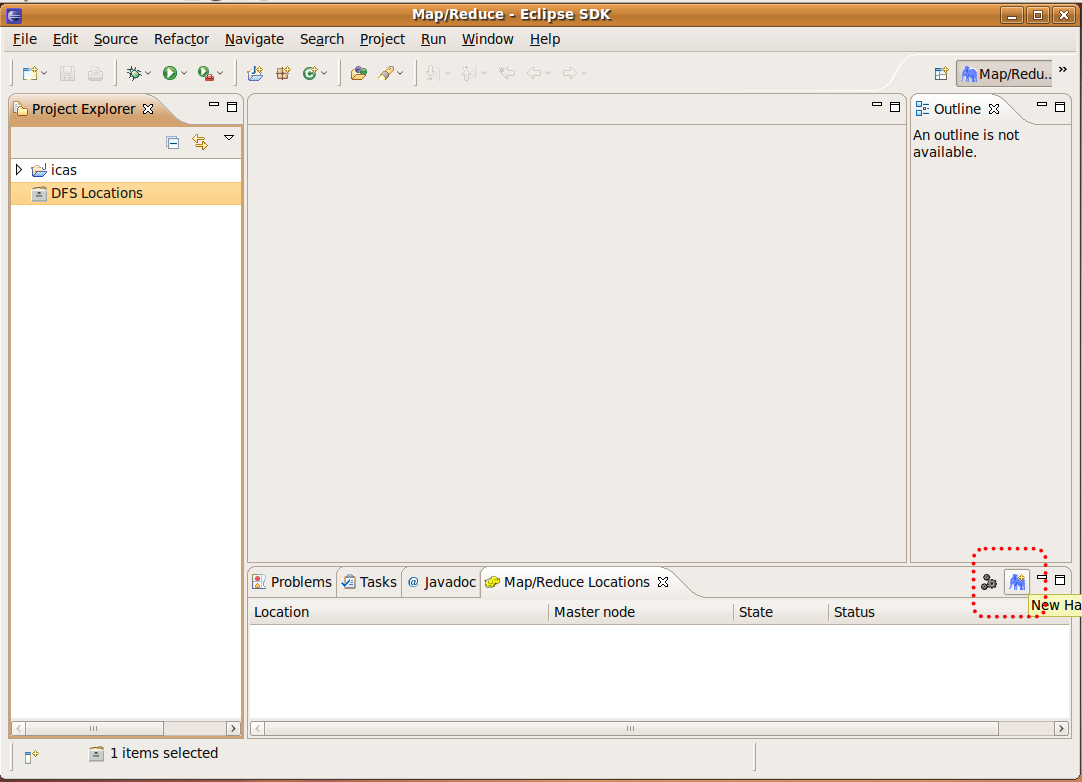

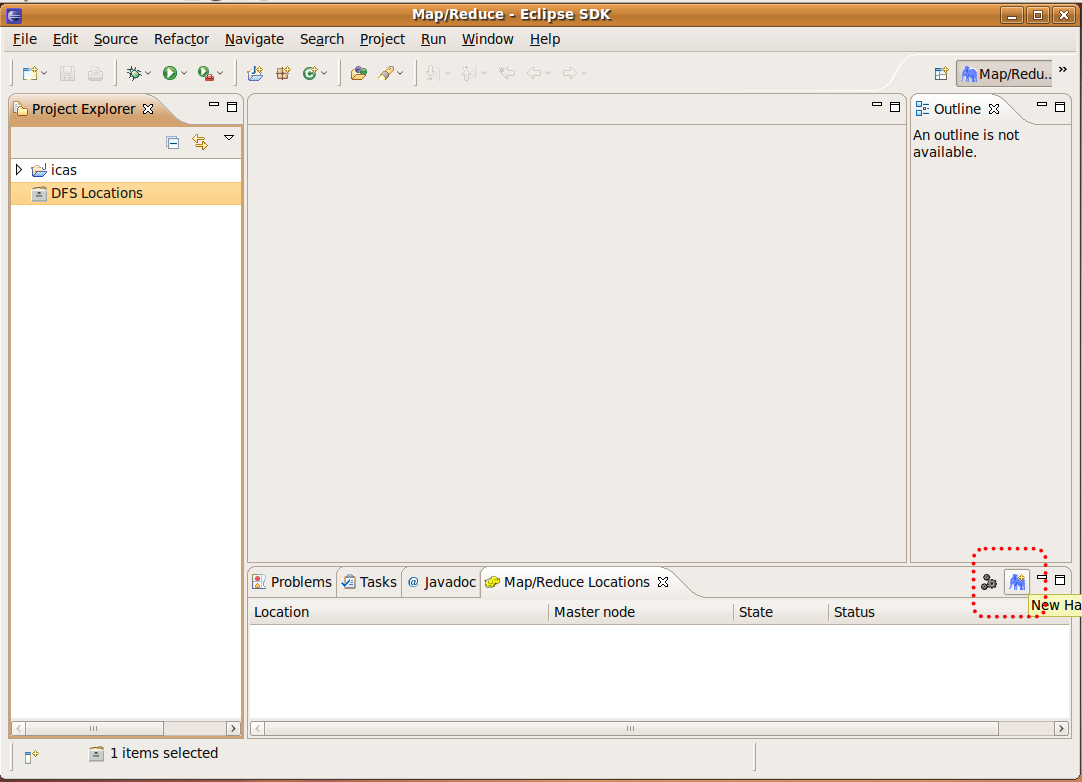

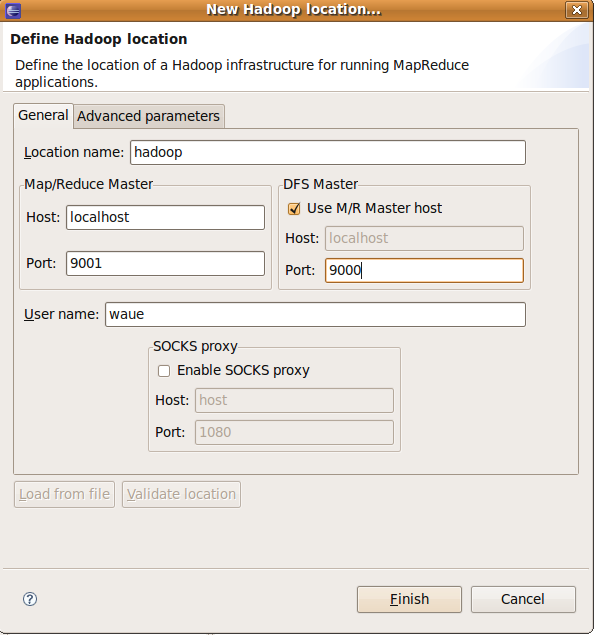

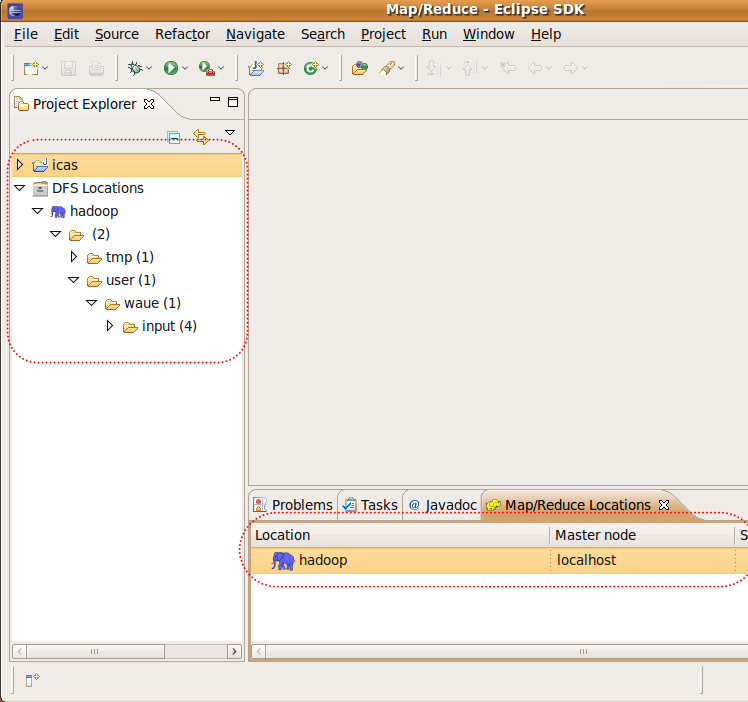

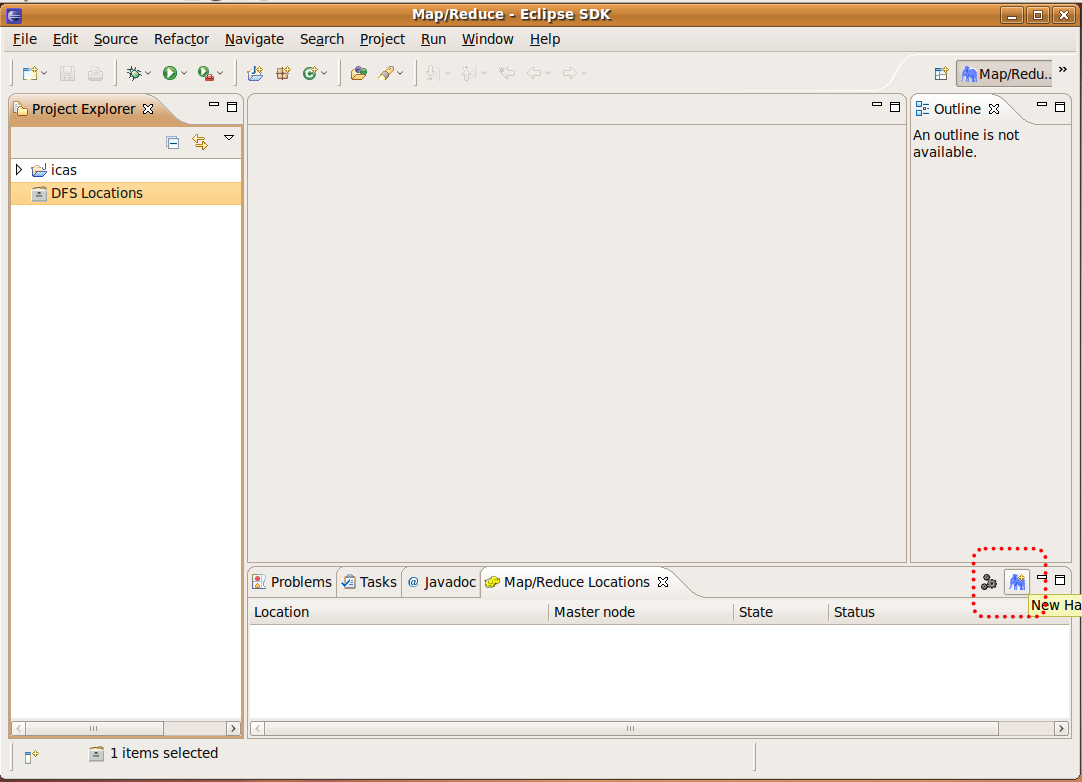

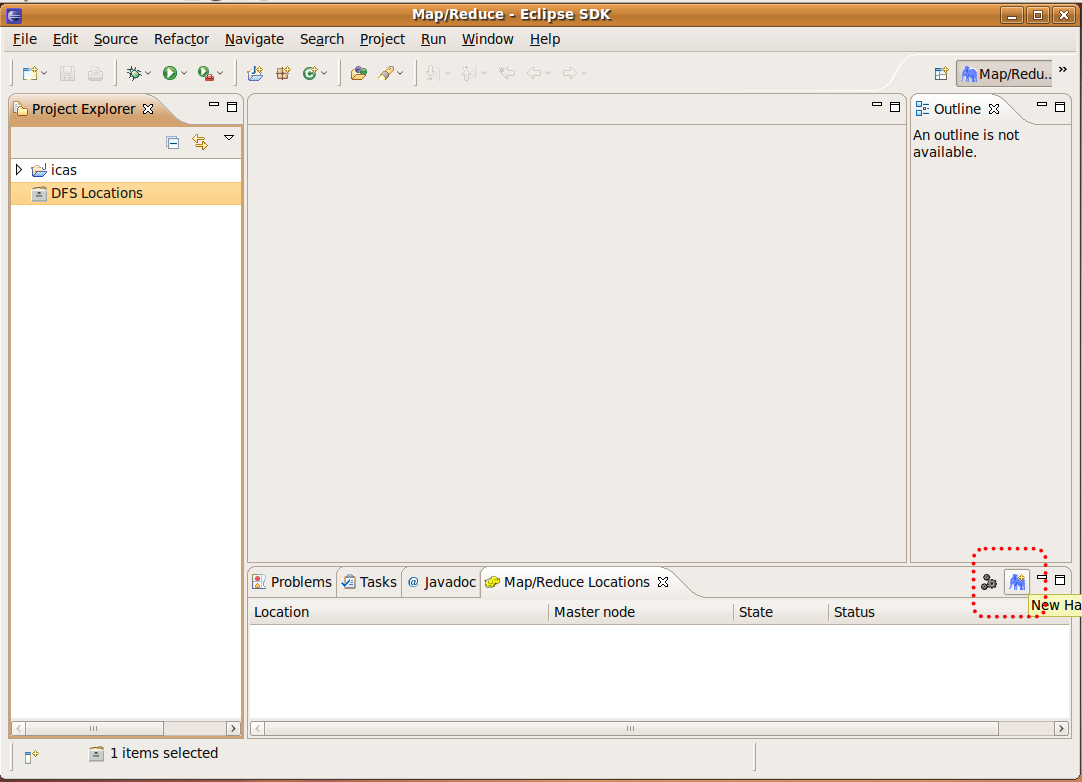

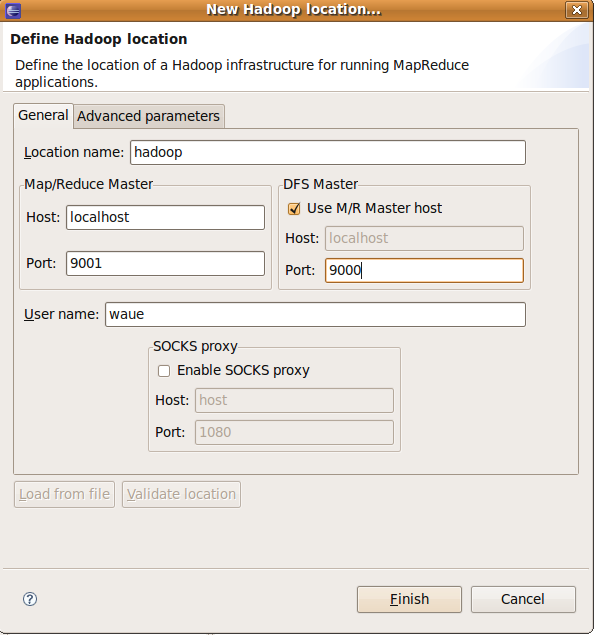

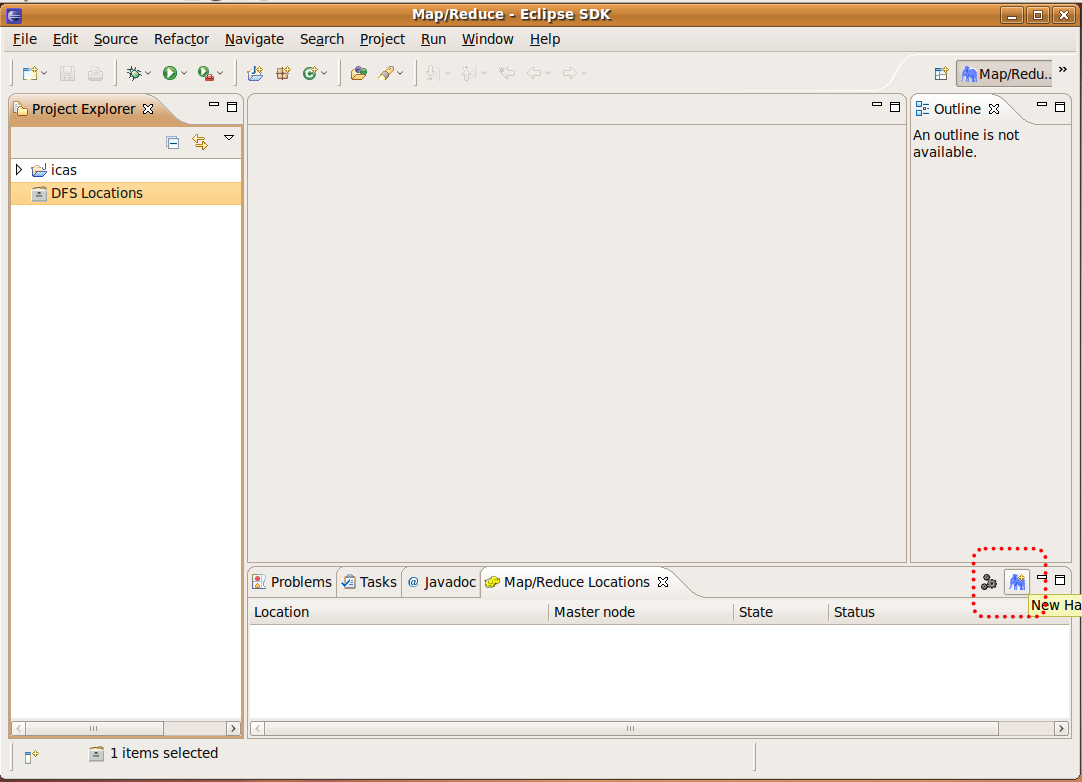

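2.6 Connect to the Hadoop server

Step1. Click the elephant icon "Map/Reduce Locations tag" in the lower-right corner of the window, then click the elephant icon on the right: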

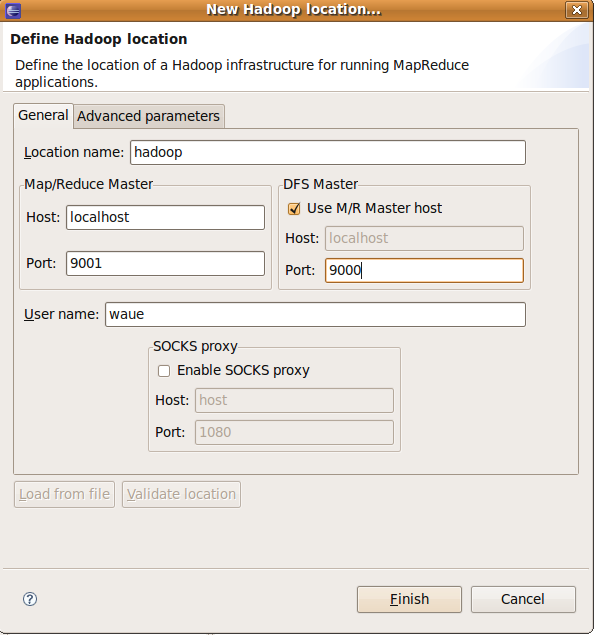

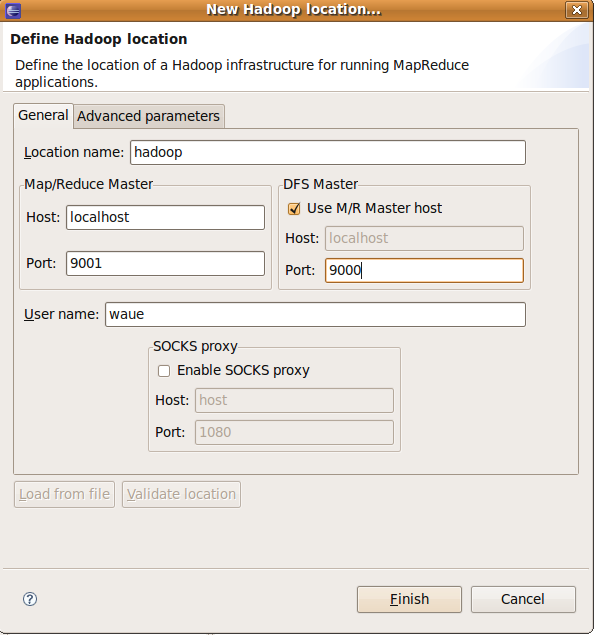

Step2. Configure the Hadoop location in Eclipse (2)

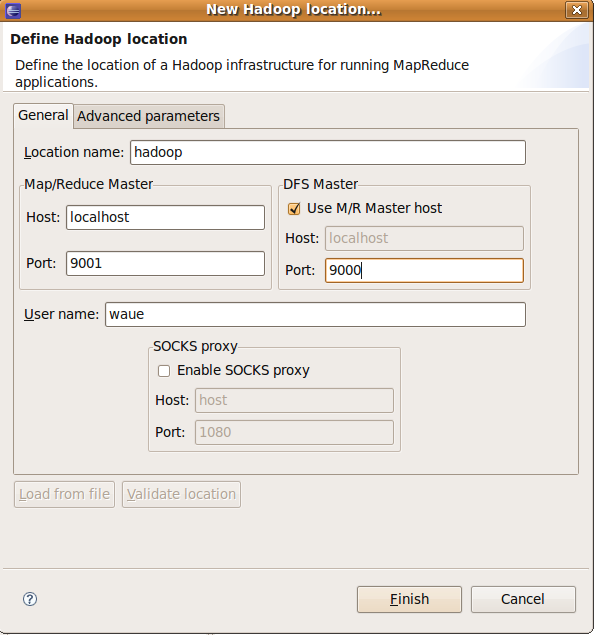

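- Location Name -> enter: hadoop (any name will do)

- Map/Reduce Master -> Host -> enter: localhost

- Map/Reduce Master -> Port -> enter: 9001

- DFS Master -> Port -> enter: 9000

- Finish

These ports must match mapred.job.tracker (localhost:9001) in mapred-site.xml and fs.default.name (hdfs://localhost:9000) in core-site.xml.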

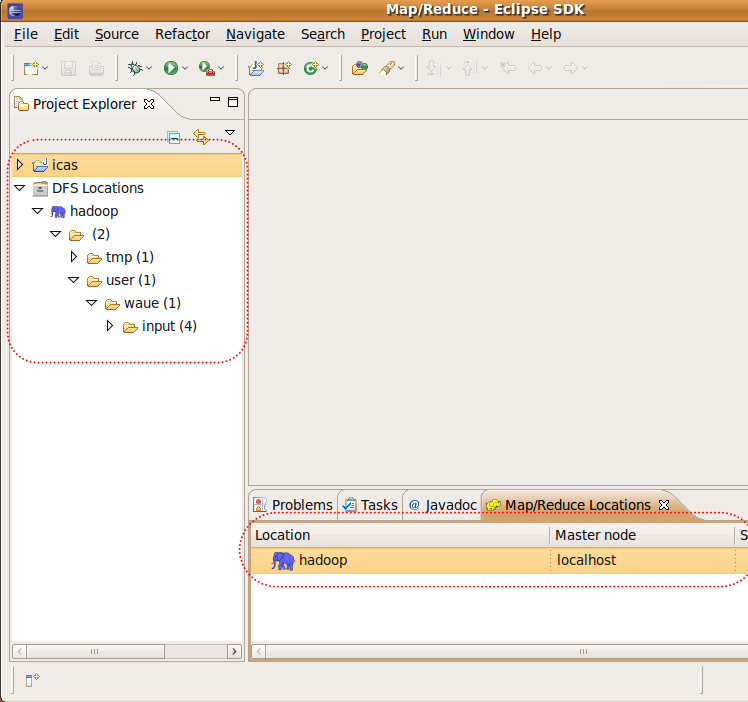

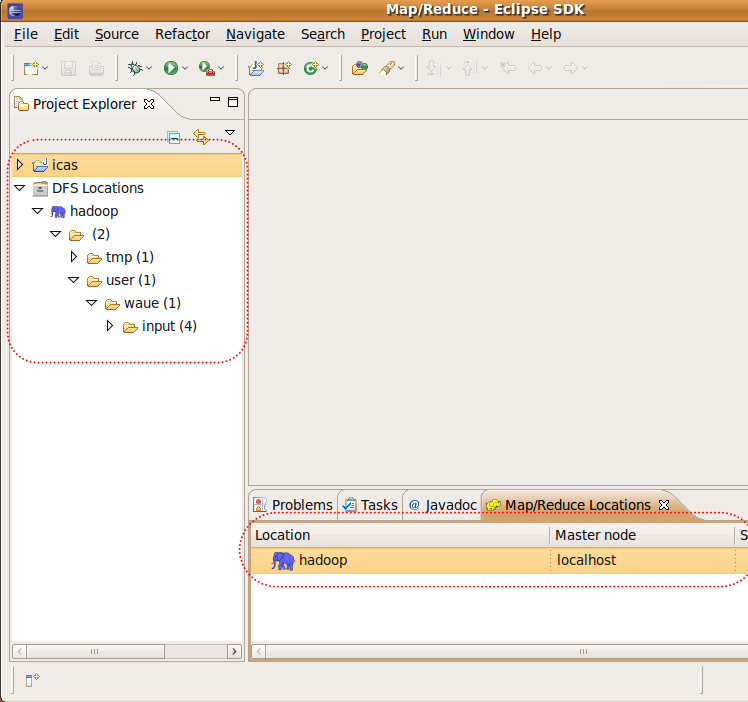

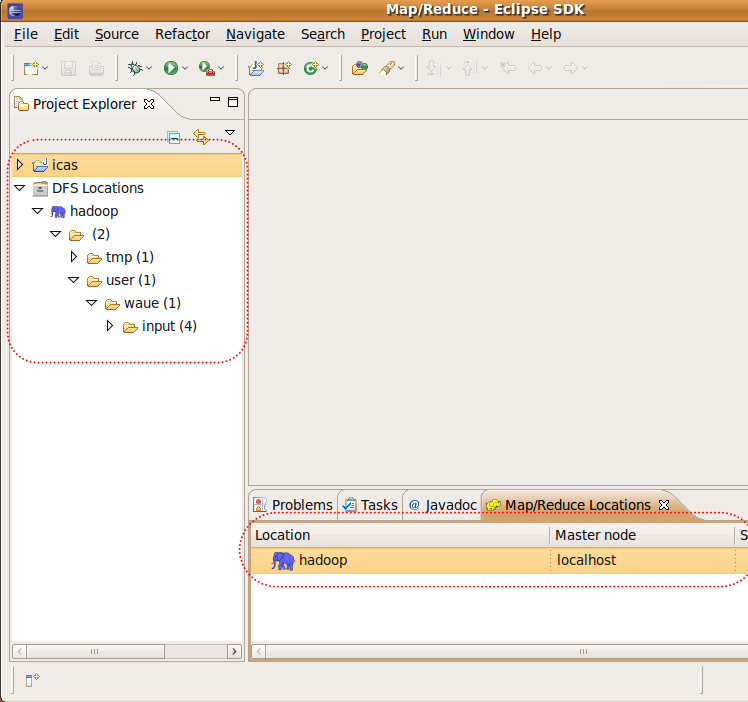

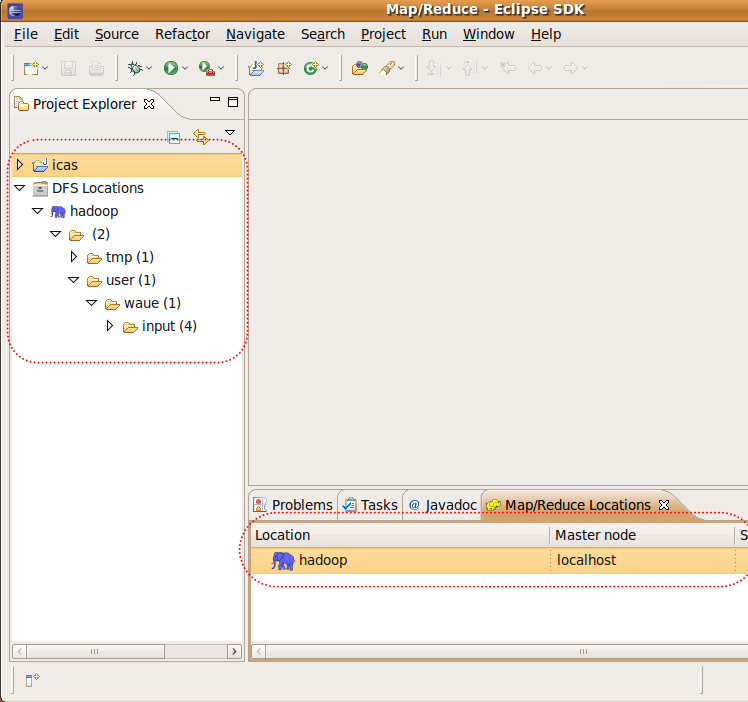

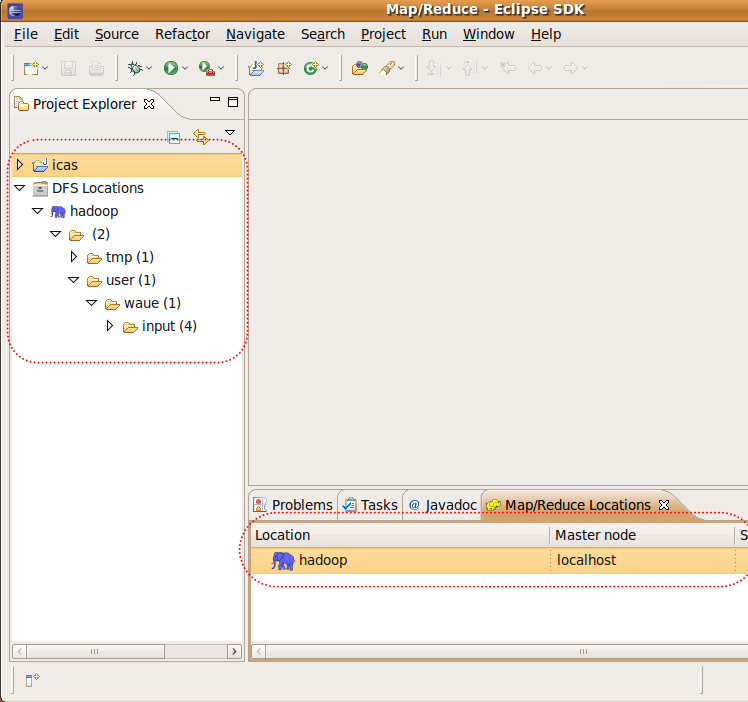

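Once this is set, a new elephant icon appears in the lower pane, and expanding the folder tree on the left shows the files on HDFS.

3. Writing the example program

- We already created the icas project in Eclipse, so the project directory is:

- /home/waue/workspace/icas

- This directory contains two folders:

- src: the program source code

- bin: the compiled class files

- Keeping sources and compiled classes apart makes it easier to build the jar later.

- Now let's write an example program: WordCount.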

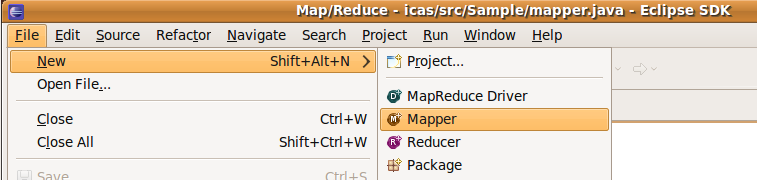

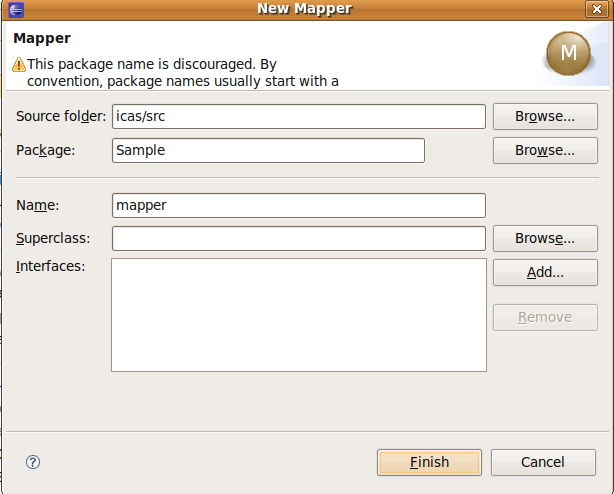

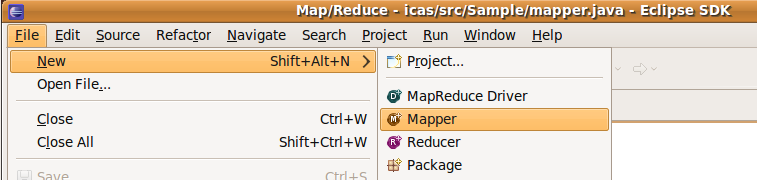

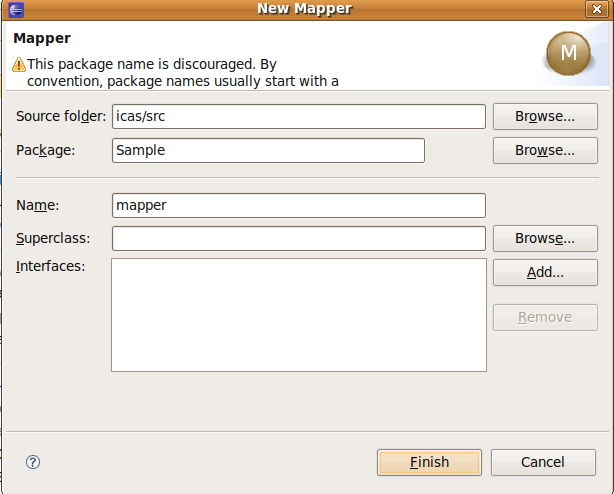

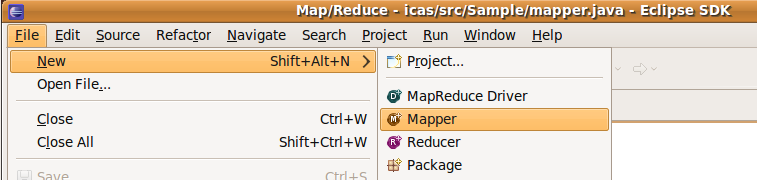

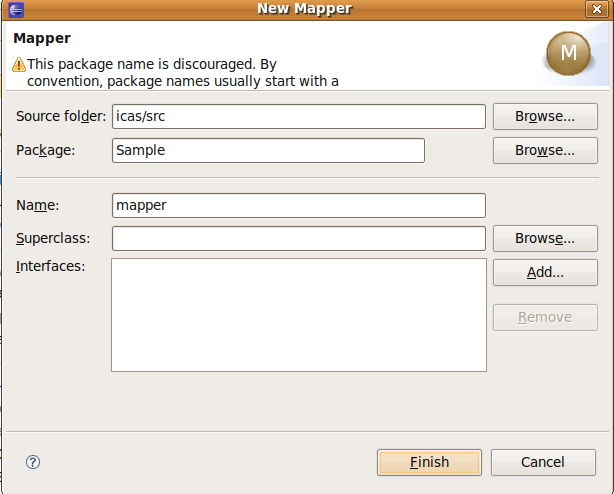

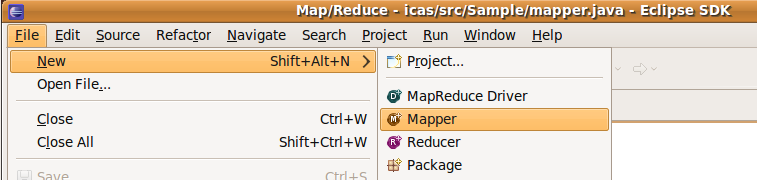

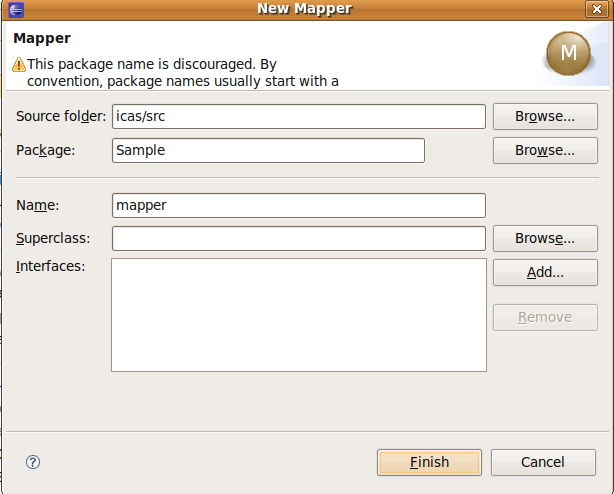

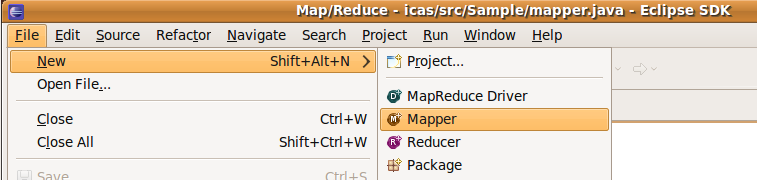

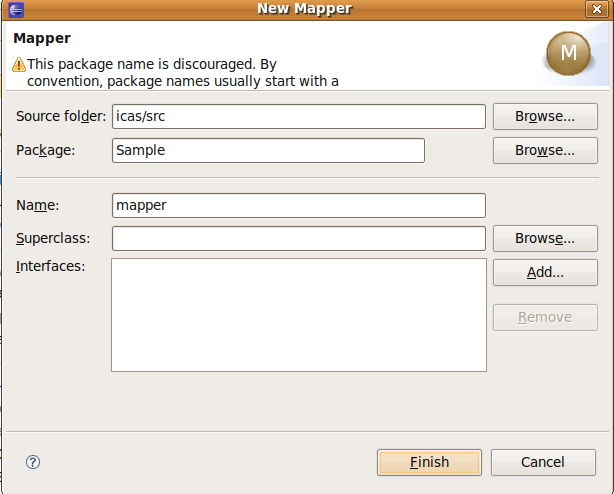

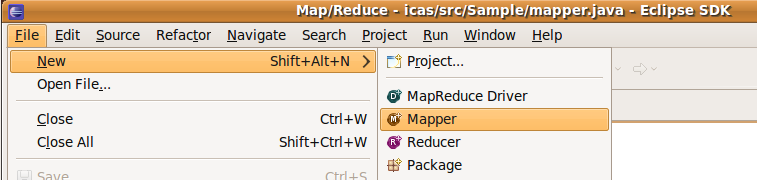

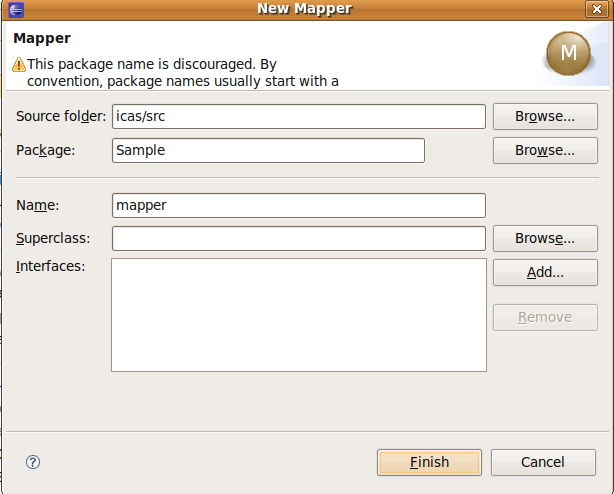

3.1 mapper.java

- new

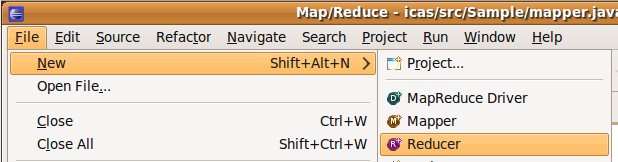

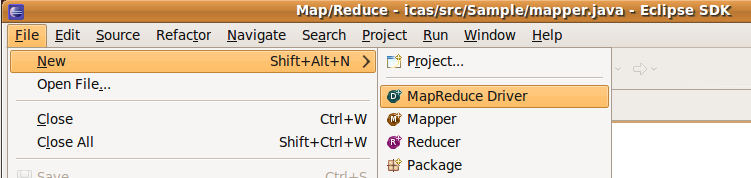

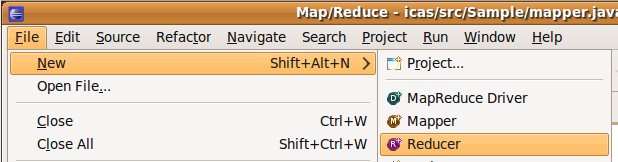

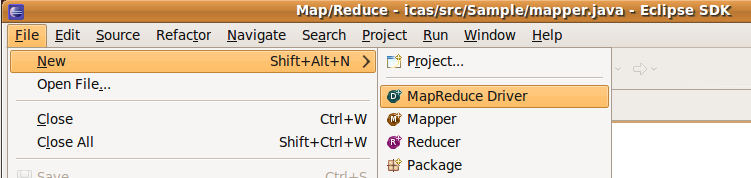

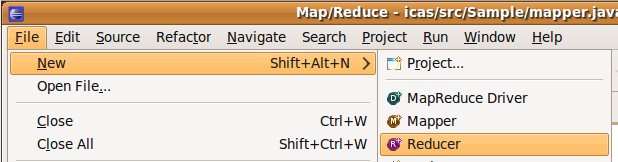

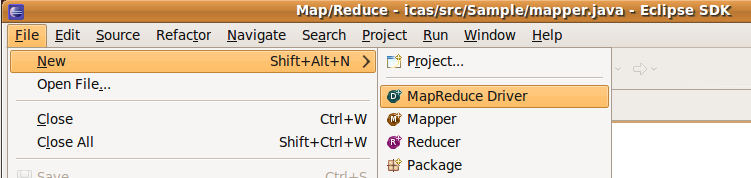

File ->new ->mapper

- create

source folder -> enter: icas/src; Package: Sample; Name -> enter: mapper

- modify

      package Sample;

      import java.io.IOException;
      import java.util.StringTokenizer;

      import org.apache.hadoop.io.IntWritable;
      import org.apache.hadoop.io.Text;
      import org.apache.hadoop.mapreduce.Mapper;

      // Input: (byte offset, line of text). Output: (word, 1) for every token in the line.
      public class mapper extends Mapper<Object, Text, Text, IntWritable> {
          // Writable objects are reused instead of being allocated per record.
          private final static IntWritable one = new IntWritable(1);
          private Text word = new Text();

          public void map(Object key, Text value, Context context)
                  throws IOException, InterruptedException {
              // Split the line on whitespace.
              StringTokenizer itr = new StringTokenizer(value.toString());
              while (itr.hasMoreTokens()) {
                  word.set(itr.nextToken());
                  context.write(word, one);
              }
          }
      }

After creating mapper.java, enter the program above.
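To see exactly what `map()` passes to `context.write`, here is a Hadoop-free sketch. It applies the same `StringTokenizer` logic to a single line and collects the (word, 1) pairs in a list instead of a `Context`. `MapStep` is a hypothetical class used only for illustration.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;

// Hypothetical, Hadoop-free imitation of mapper.map(): one (word, 1) pair per token.
class MapStep {

    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> out = new ArrayList<Map.Entry<String, Integer>>();
        // Same tokenizer as mapper.java: splits on space, tab, newline, carriage return, form feed.
        StringTokenizer itr = new StringTokenizer(line);
        while (itr.hasMoreTokens()) {
            out.add(new SimpleEntry<String, Integer>(itr.nextToken(), 1));
        }
        return out;
    }

    public static void main(String[] args) {
        System.out.println(map("hello hadoop hello")); // [hello=1, hadoop=1, hello=1]
    }
}
```

Punctuation and case are left untouched, so `hello,` and `Hello` are counted as words distinct from `hello`.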

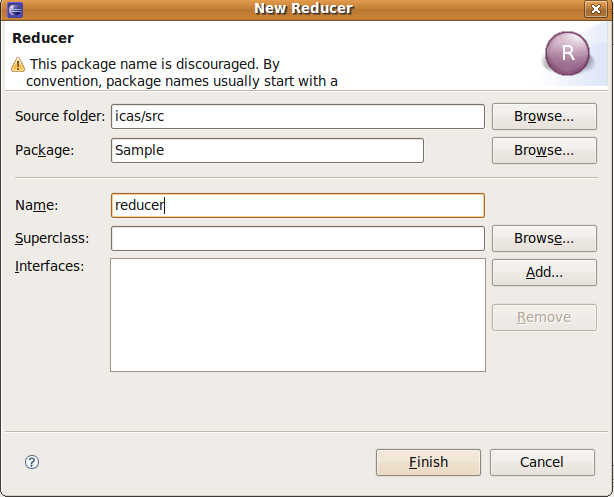

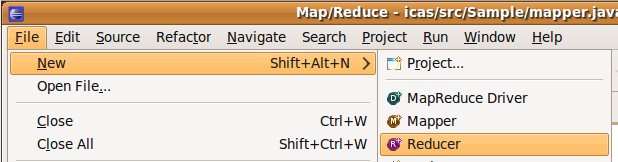

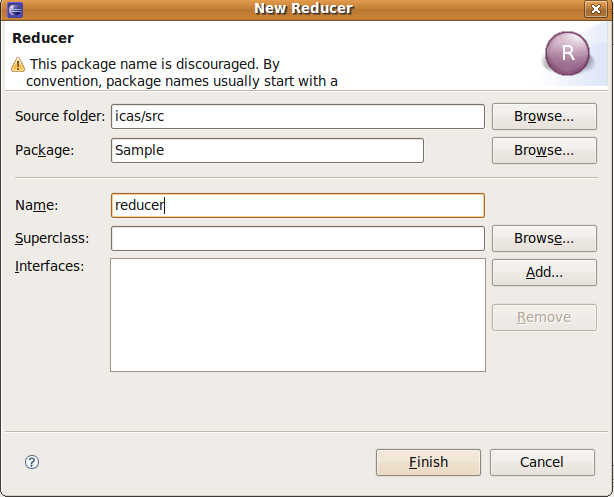

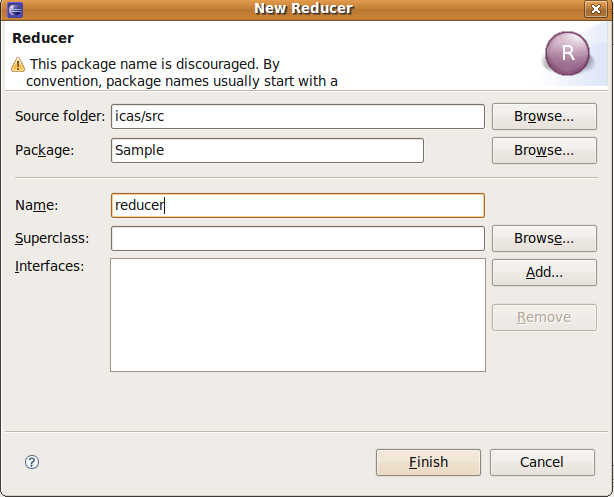

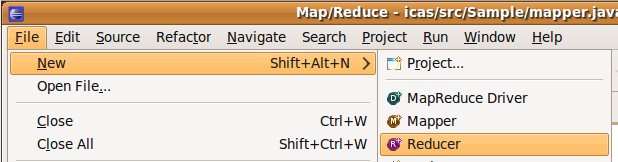

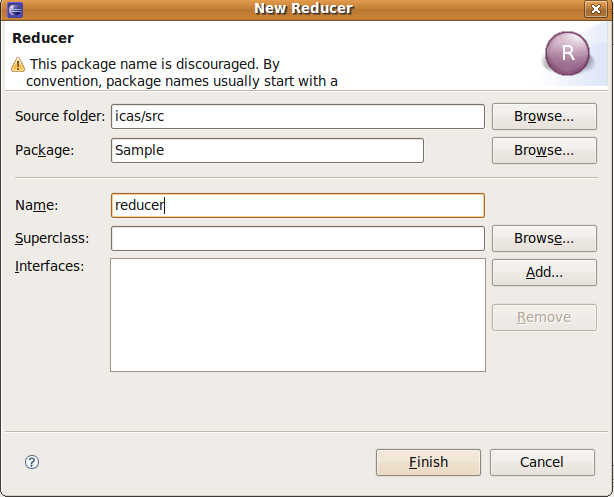

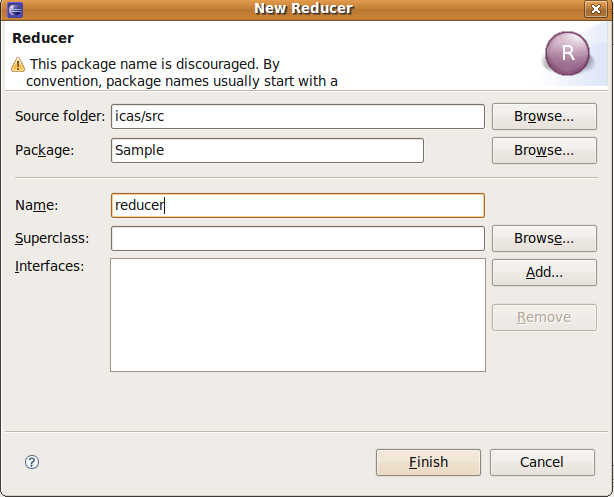

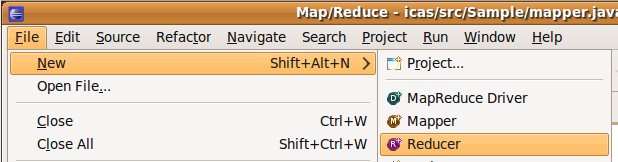

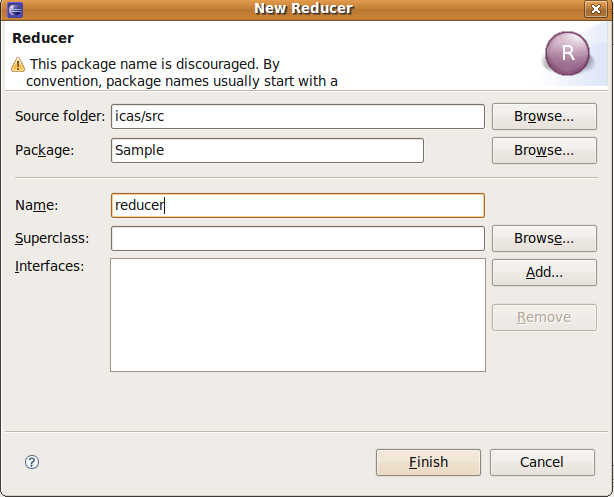

3.2 reducer.java

- new

- File -> new -> reducer

- create

source folder -> enter: icas/src; Package: Sample; Name -> enter: reducer

- modify


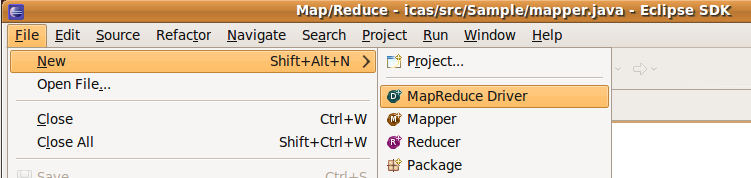

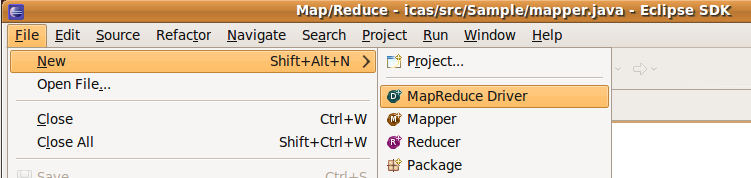

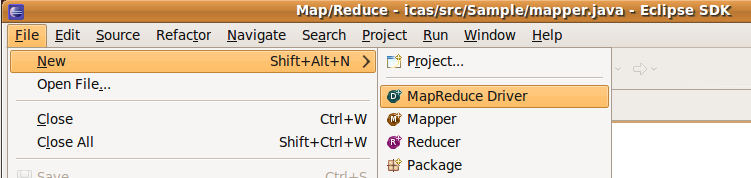

    package Sample;

    import java.io.IOException;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    // Input: (word, [count, count, ...]). Output: (word, total count).
    public class reducer extends Reducer<Text, IntWritable, Text, IntWritable> {
        private IntWritable result = new IntWritable();

        public void reduce(Text key, Iterable<IntWritable> values, Context context)
                throws IOException, InterruptedException {
            // Add up every count collected for this word.
            int sum = 0;
            for (IntWritable val : values) {
                sum += val.get();
            }
            result.set(sum);
            context.write(key, result);
        }
    }
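In WordCount.java, this class is registered both as the combiner and as the reducer. That is safe because addition is associative and commutative: summing partial sums gives the same total as summing all the 1s at once. The Hadoop-free sketch below shows the reduce logic and this property. `ReduceStep` is a hypothetical class, and it uses plain `Integer`s instead of `IntWritable`.

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical, Hadoop-free imitation of reducer.reduce(): add up all counts for one key.
class ReduceStep {

    // The key is unused, as in reducer.java; it is kept to mirror the reduce() signature.
    static int reduce(String key, Iterable<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    public static void main(String[] args) {
        List<Integer> ones = Arrays.asList(1, 1, 1);
        // Reducer only: sum the three 1s directly.
        int direct = reduce("hello", ones);
        // Combiner first: a map task pre-sums two 1s, then the reducer adds the remaining 1.
        int partial = reduce("hello", Arrays.asList(1, 1));
        int combined = reduce("hello", Arrays.asList(partial, 1));
        System.out.println(direct + " " + combined); // 3 3
    }
}
```

A reducer that computes something non-associative, such as an average, could not be reused as a combiner this way.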

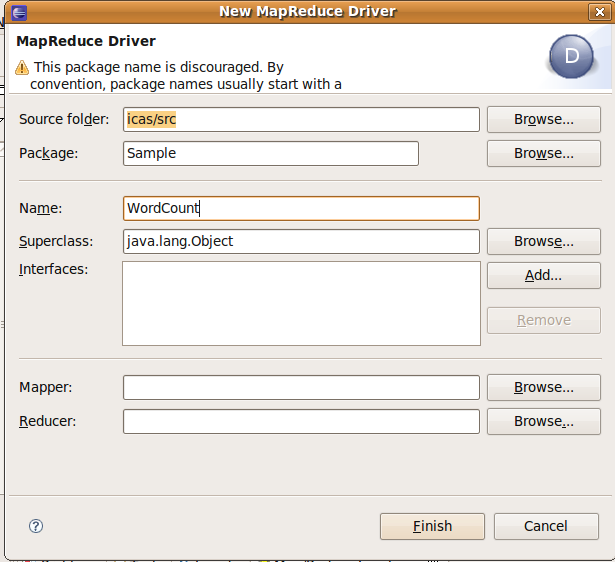

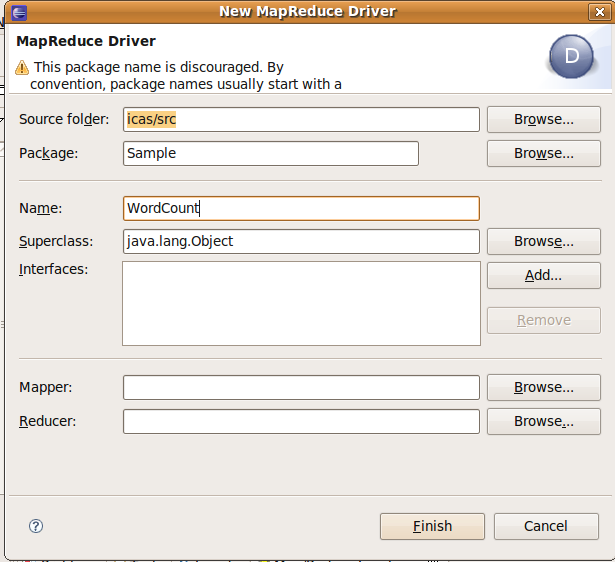

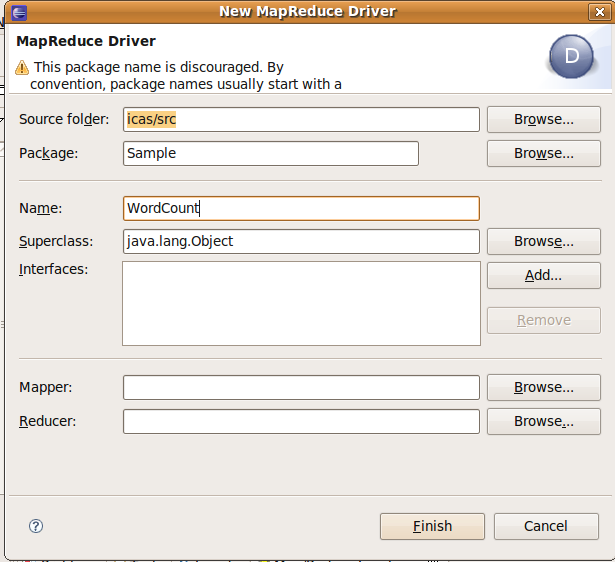

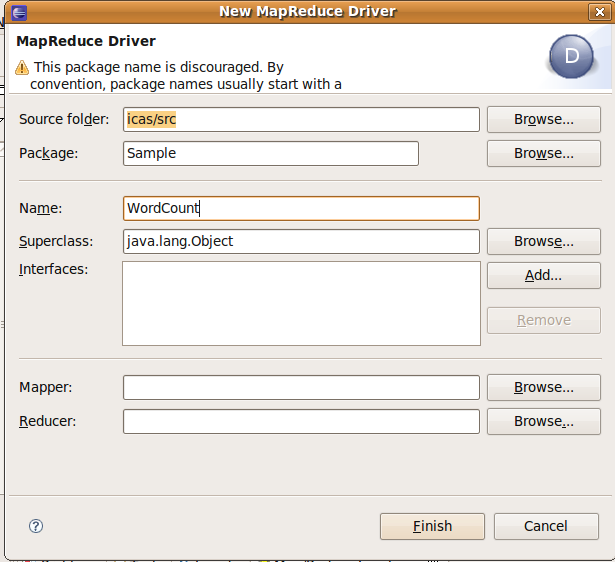

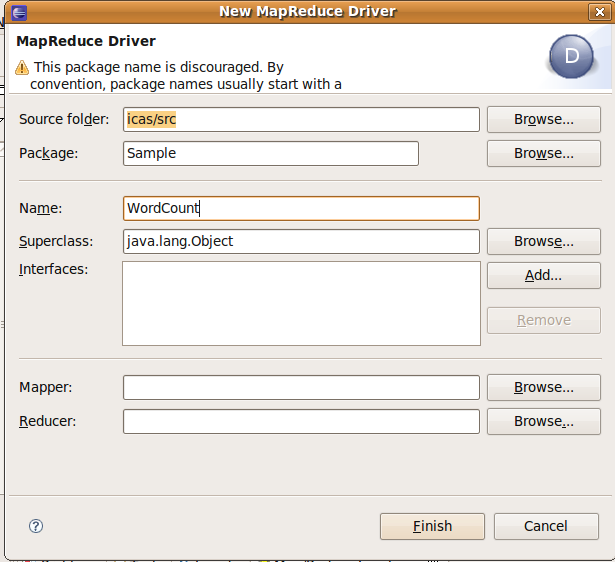

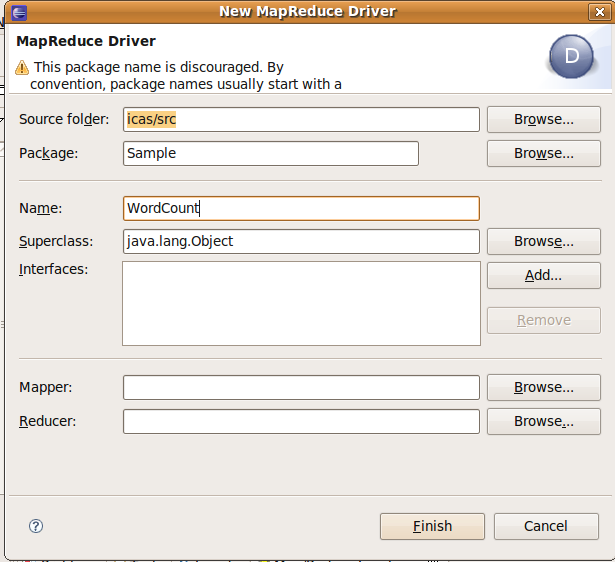

3.3 WordCount.java (main function)

- new

Create WordCount.java. Because this class drives the mapper and reducer, create it as a Map/Reduce Driver (File -> new -> Map/Reduce Driver).

- create

source folder -> enter: icas/src; Package: Sample; Name -> enter: WordCount.java

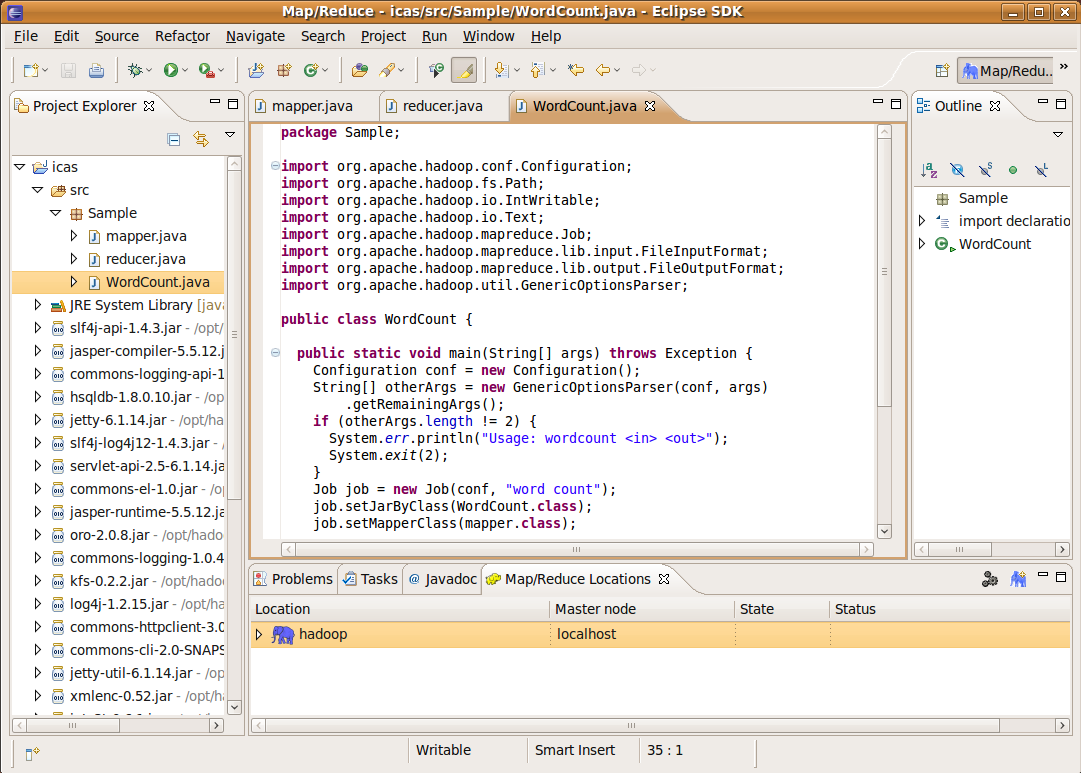

- modify

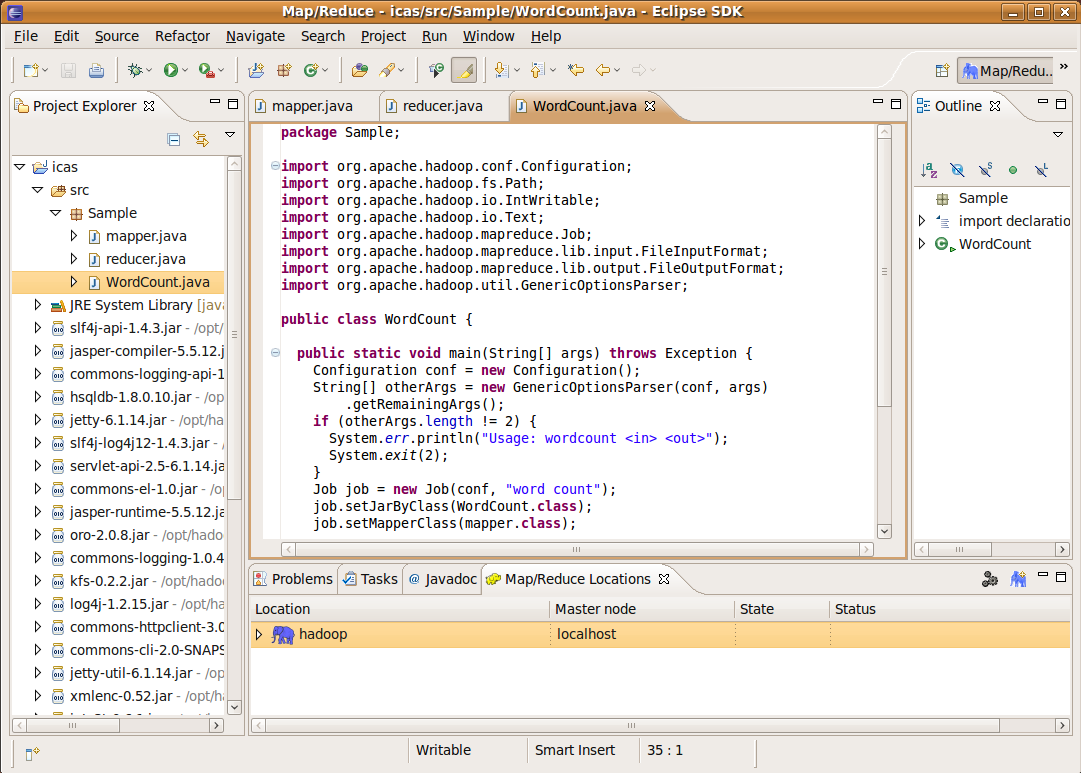

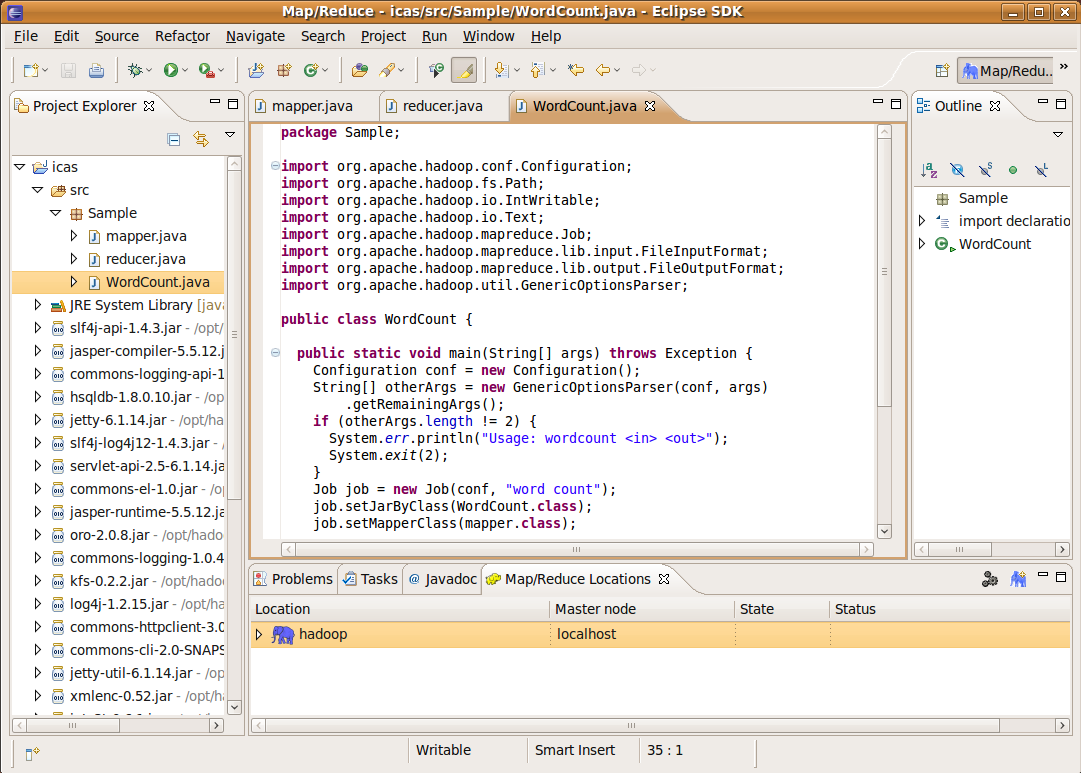

    package Sample;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.util.GenericOptionsParser;

    public class WordCount {
        public static void main(String[] args) throws Exception {
            Configuration conf = new Configuration();
            // Remove generic Hadoop options (e.g. -D, -fs, -jt) and keep <in> <out>.
            String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();
            if (otherArgs.length != 2) {
                System.err.println("Usage: wordcount <in> <out>");
                System.exit(2);
            }
            Job job = new Job(conf, "word count");
            job.setJarByClass(WordCount.class);
            job.setMapperClass(mapper.class);
            job.setCombinerClass(reducer.class); // pre-aggregate counts on the map side
            job.setReducerClass(reducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
            FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
            // Exit code 0 on success, 1 on failure.
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }

Once all three files are written and saved, the program is complete.
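Put together, the job runs map, then shuffle/sort (group the values by key, with keys sorted), then reduce. Below is a single-JVM sketch of that flow. It can help you predict what part-r-00000 will contain before you run anything on Hadoop. It is an illustration only and does not reflect how Hadoop actually schedules the work: `LocalWordCount` is a hypothetical class, and a `TreeMap` stands in for the shuffle. For plain ASCII words, its key order should match Hadoop's byte-wise ordering of `Text` keys.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Hypothetical single-JVM walk-through of the WordCount job: map -> shuffle/sort -> reduce.
class LocalWordCount {

    static Map<String, Integer> run(List<String> lines) {
        // Shuffle/sort stand-in: TreeMap groups values by key and keeps keys sorted.
        TreeMap<String, List<Integer>> grouped = new TreeMap<String, List<Integer>>();
        for (String line : lines) {
            // Map phase, as in mapper.java: emit (word, 1) per whitespace-separated token.
            StringTokenizer itr = new StringTokenizer(line);
            while (itr.hasMoreTokens()) {
                String word = itr.nextToken();
                List<Integer> vals = grouped.get(word);
                if (vals == null) {
                    vals = new ArrayList<Integer>();
                    grouped.put(word, vals);
                }
                vals.add(1);
            }
        }
        // Reduce phase, as in reducer.java: sum the values for each word.
        TreeMap<String, Integer> result = new TreeMap<String, Integer>();
        for (Map.Entry<String, List<Integer>> e : grouped.entrySet()) {
            int sum = 0;
            for (int v : e.getValue()) {
                sum += v;
            }
            result.put(e.getKey(), sum);
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> input = Arrays.asList("hello hadoop", "hello eclipse");
        for (Map.Entry<String, Integer> e : run(input).entrySet()) {
            // Tab-separated "word<TAB>count", the same layout as part-r-00000.
            System.out.println(e.getKey() + "\t" + e.getValue());
        }
    }
}
```

With the two sample lines in `main`, it prints `eclipse 1`, `hadoop 1` and `hello 2`, tab-separated and one per line.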

- After all three files are saved, new files appear under both src and bin in the icas project. Let's check from the command line:

      $ cd ~/workspace/icas
      $ ls src/Sample/
      mapper.java  reducer.java  WordCount.java
      $ ls bin/Sample/
      mapper.class  reducer.class  WordCount.class

4. Running the example program

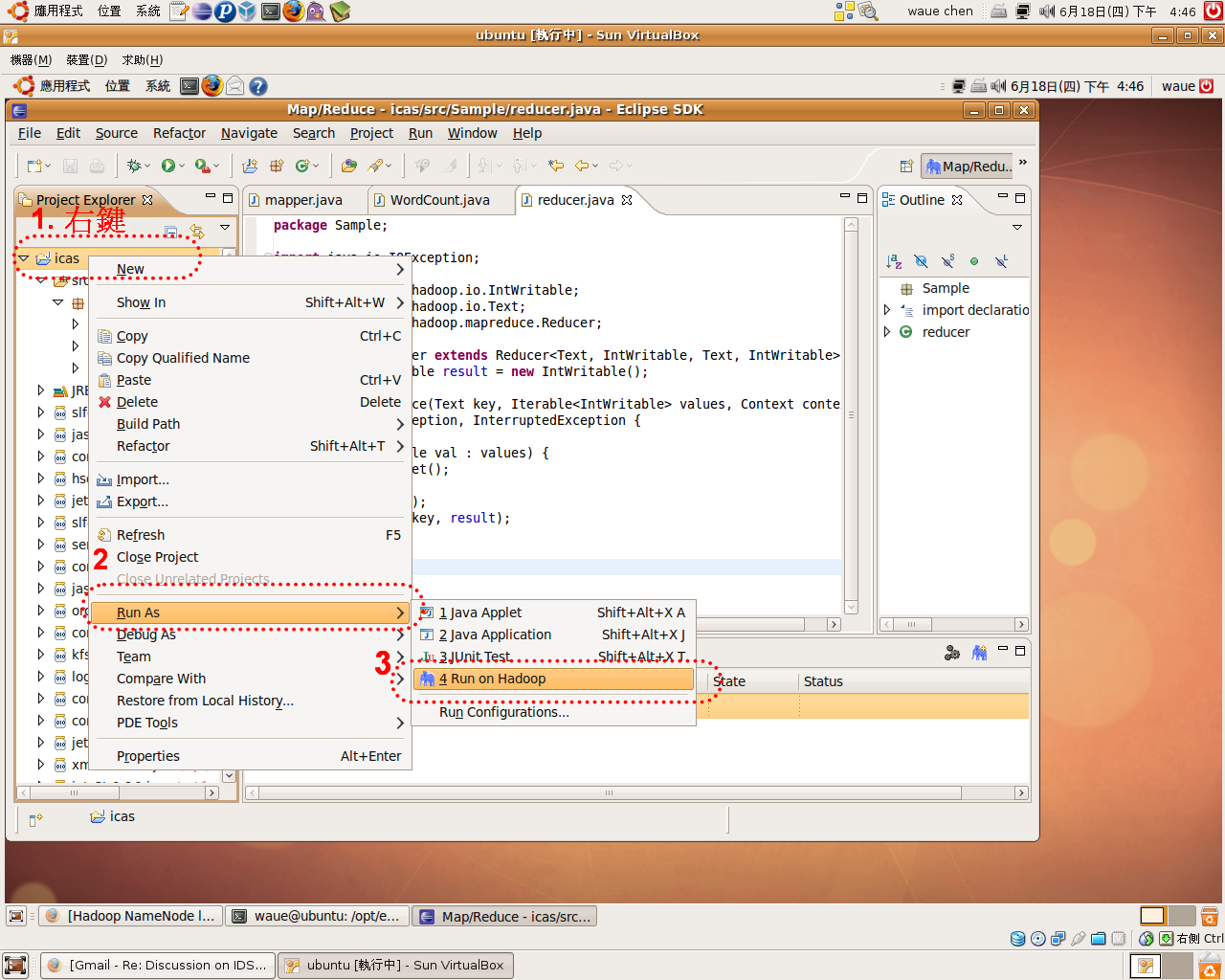

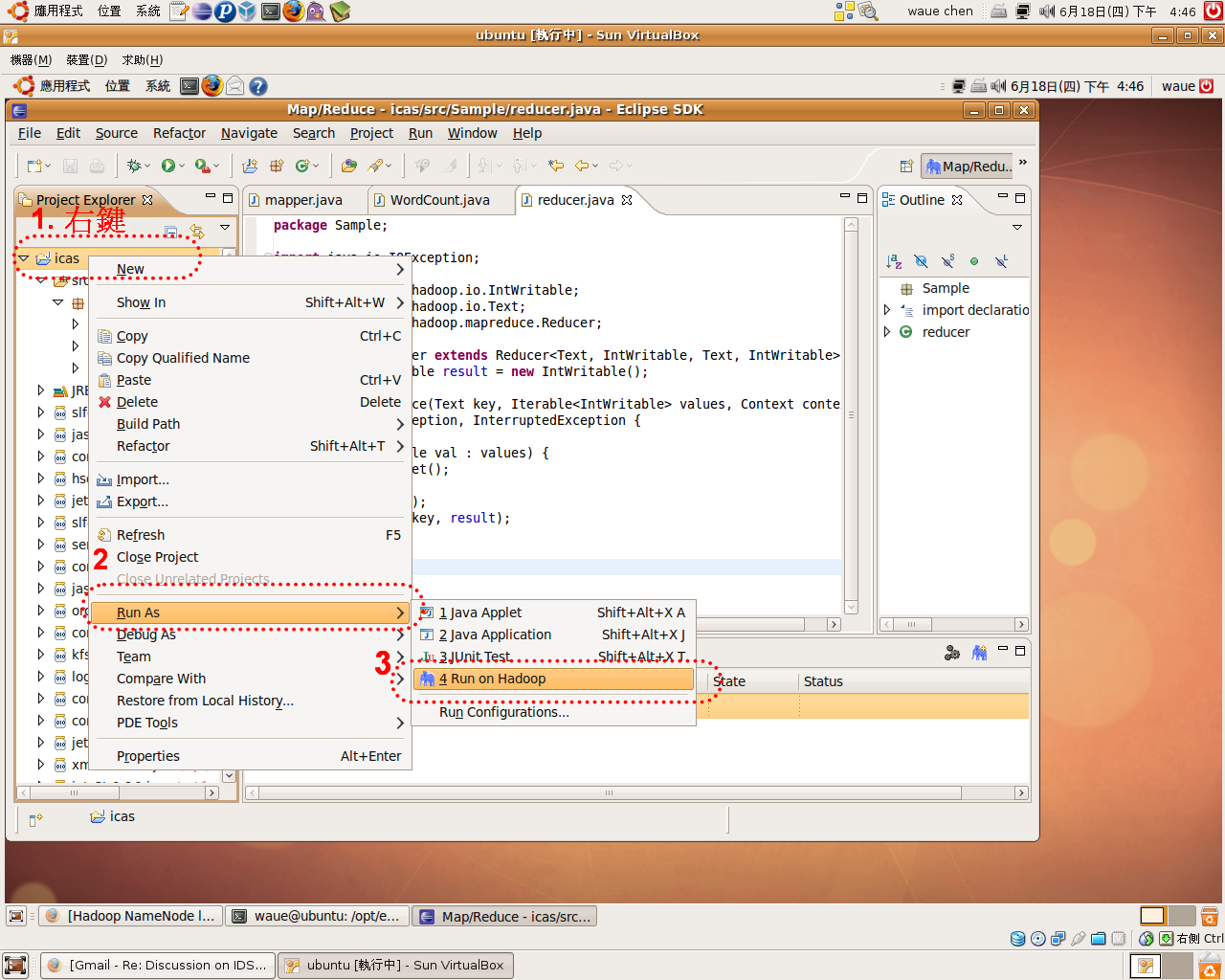

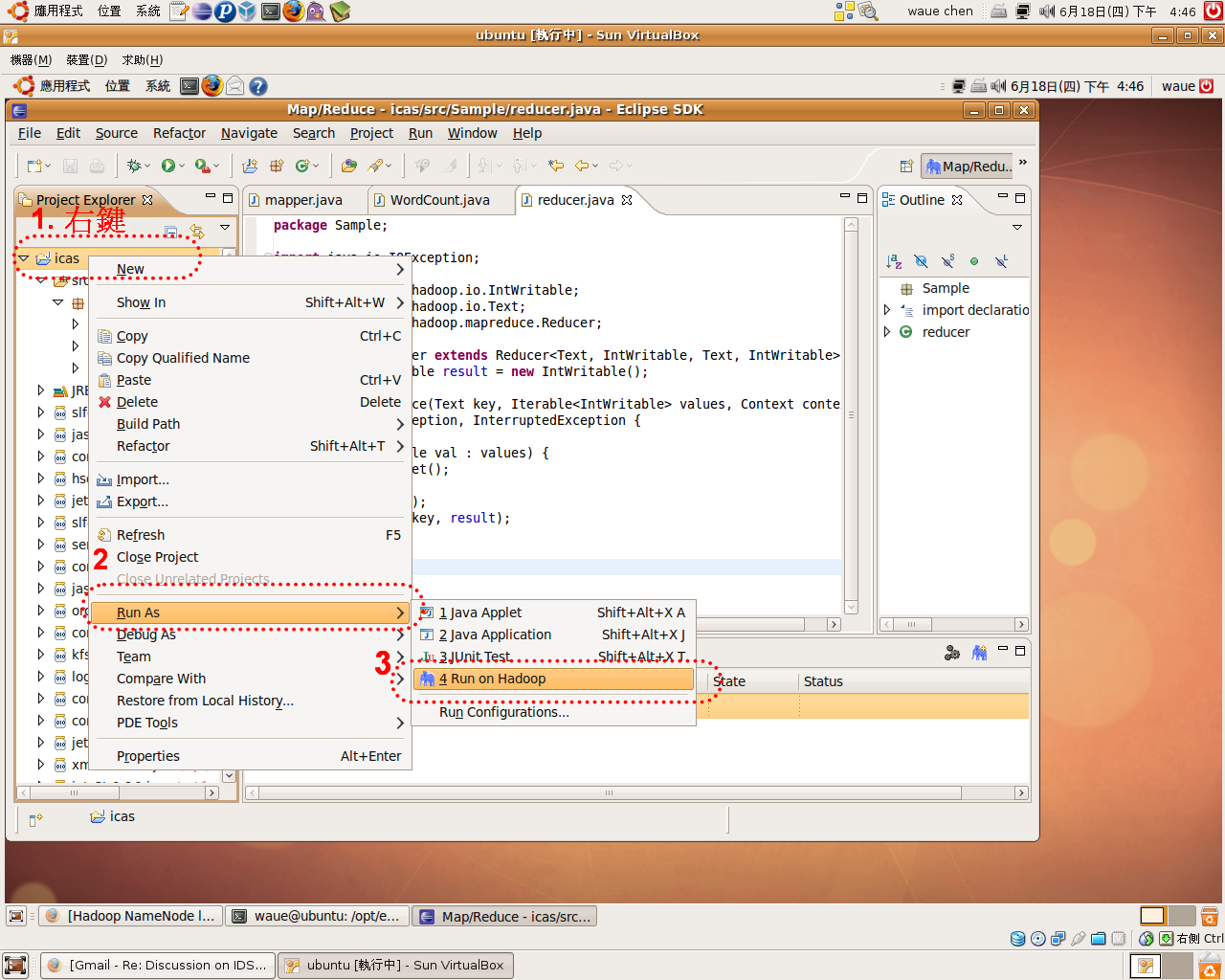

- The Eclipse plugin that ships with Hadoop 0.20 is still incomplete. For example:

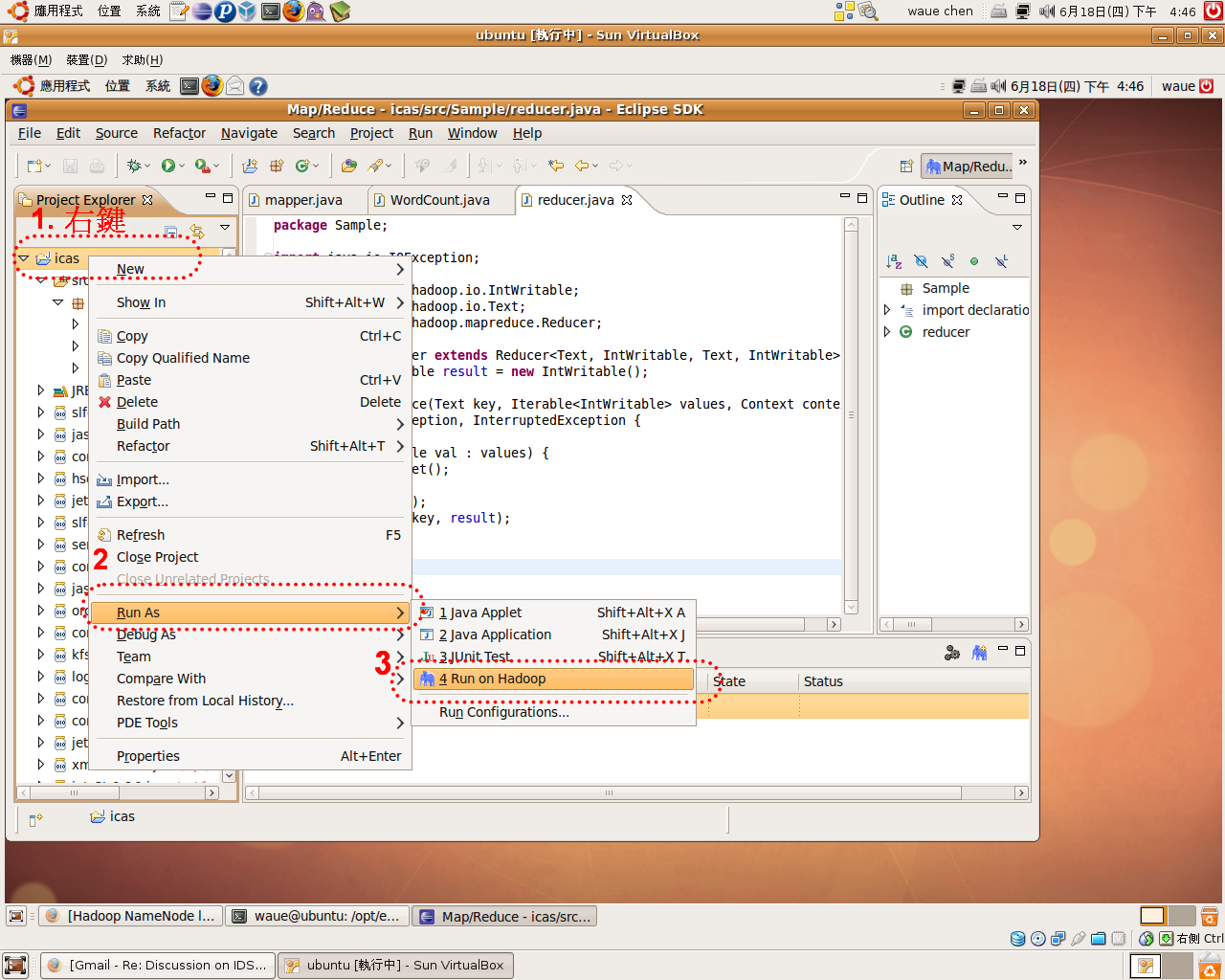

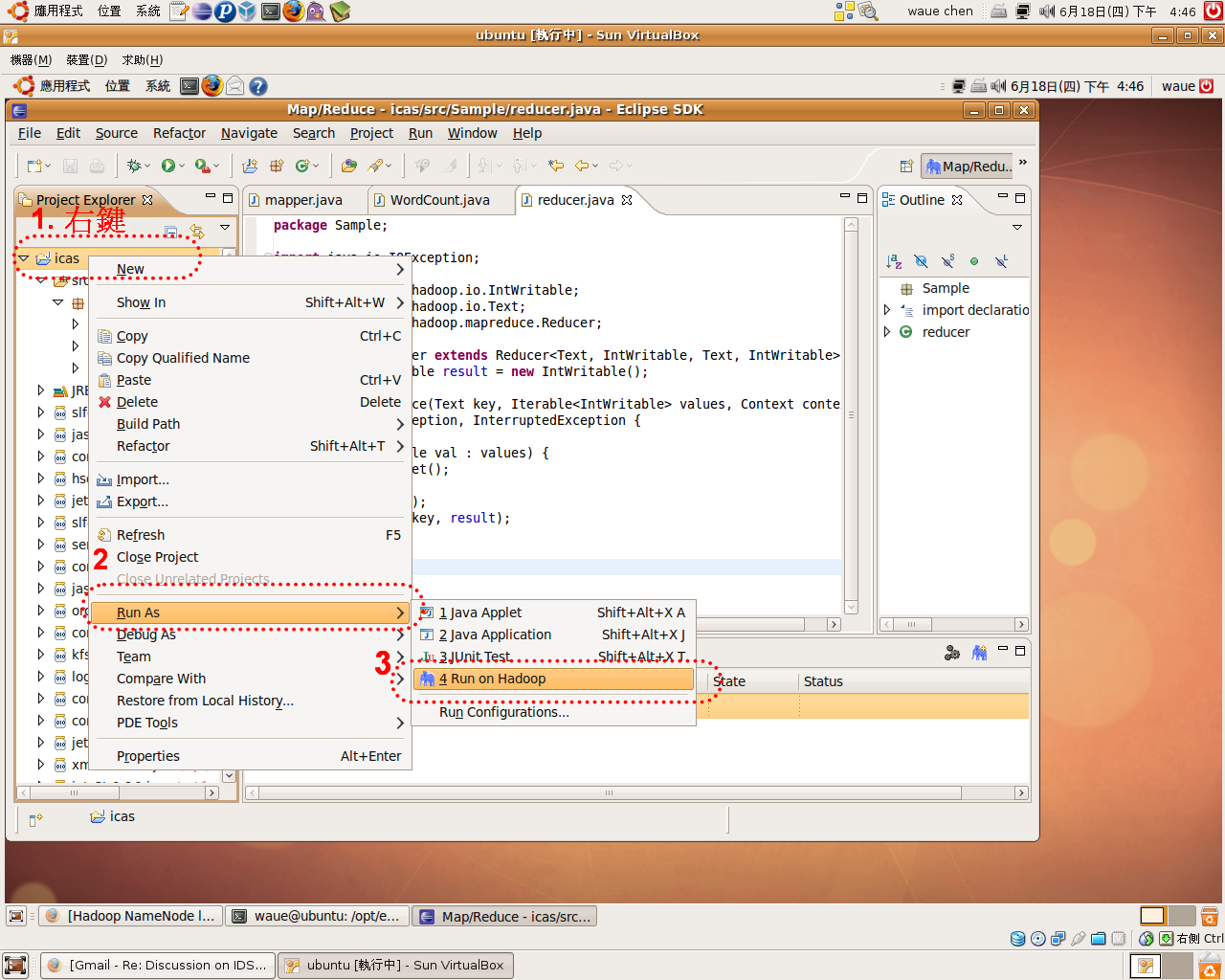

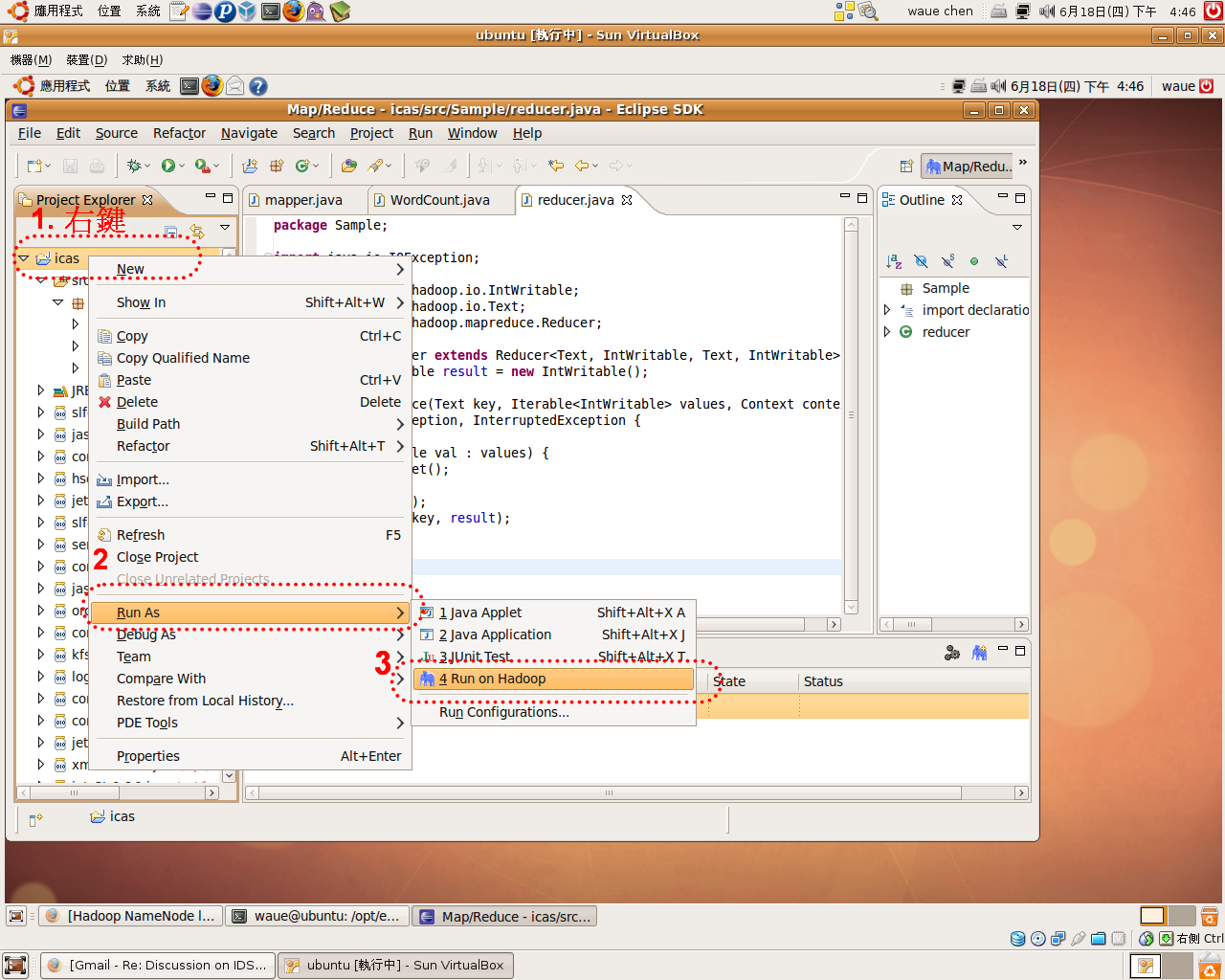

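- Right-click WordCount.java -> run as -> run on Hadoop: nothing happens.

- So section 4.1 gives a way to "unseal" run-on-hadoop in Eclipse, while section 4.2 bypasses run-on-hadoop and runs the job from the terminal in command mode.

4.1 Unsealing run-on-hadoop

A helpful Hadoop user shared a way to restore the run-on-hadoop feature.

The root cause is that the Hadoop eclipse-plugin was developed against Eclipse Europa (Eclipse 3.3), and Eclipse versions 3.2, 3.3 and 3.4 all differ from one another to some degree.

So if you first create a new project with Eclipse Europa, then replace Europa with Eclipse 3.4, the project will be able to use the run-on-hadoop feature!

If you are interested, give it a try! (Thanks to a fellow student at Feng Chia University's graduate institute.)

4.2 Using terminal commands

4.2.1 Create a Makefile

    $ cd /home/waue/workspace/icas/
    $ gedit Makefile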

Enter the following Makefile contents. Each command line under a target must start with a TAB character.

    JarFile="sample-0.1.jar"
    MainFunc="Sample.WordCount"
    LocalOutDir="/tmp/output"

    all: help

    jar:
    	jar -cvf ${JarFile} -C bin/ .

    run:
    	hadoop jar ${JarFile} ${MainFunc} input output

    clean:
    	hadoop fs -rmr output

    output:
    	rm -rf ${LocalOutDir}
    	hadoop fs -get output ${LocalOutDir}
    	gedit ${LocalOutDir}/part-r-00000 &

    help:
    	@echo "Usage:"
    	@echo " make jar - Build Jar File."
    	@echo " make clean - Clean up Output directory on HDFS."
    	@echo " make run - Run your MapReduce code on Hadoop."
    	@echo " make output - Download and show output file"
    	@echo " make help - Show Makefile options."
    	@echo " "
    	@echo "Example:"
    	@echo " make jar; make run; make output; make clean"

4.2.2 Running it

- To use the Makefile, go to the project directory and run make [target]. If you are not sure which targets exist, type make or make help.

- make usage:

      $ cd /home/waue/workspace/icas/
      $ make
      Usage:
       make jar - Build Jar File.
       make clean - Clean up Output directory on HDFS.
       make run - Run your MapReduce code on Hadoop.
       make output - Download and show output file
       make help - Show Makefile options.

      Example:
       make jar; make run; make output; make clean

- Each make target is described below:

make jar

- 1. Build the jar file

$ make jar

make run

- 2. Run our wordcount on Hadoop

$ make run

- make run should run through to completion. This shows that the program written in Eclipse runs smoothly on the Hadoop 0.20 platform.

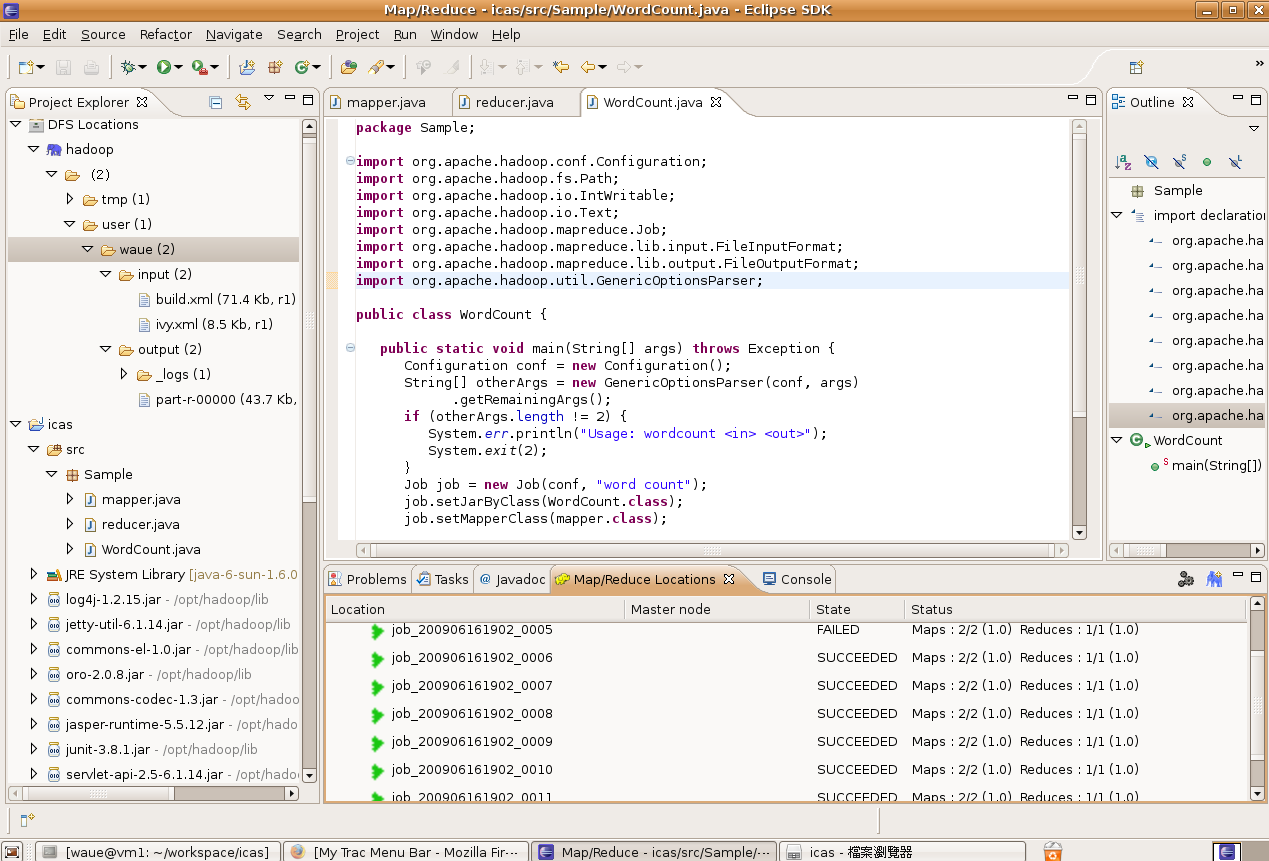

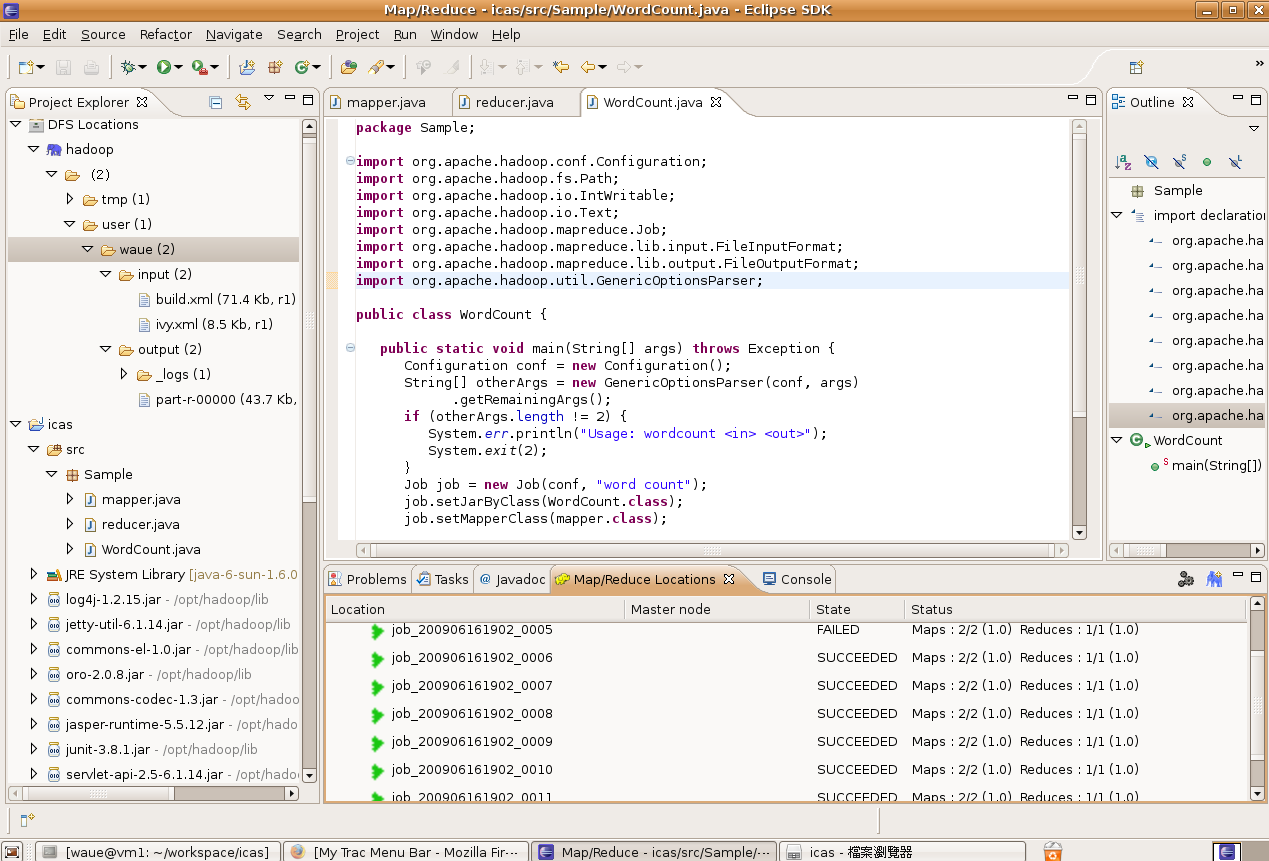

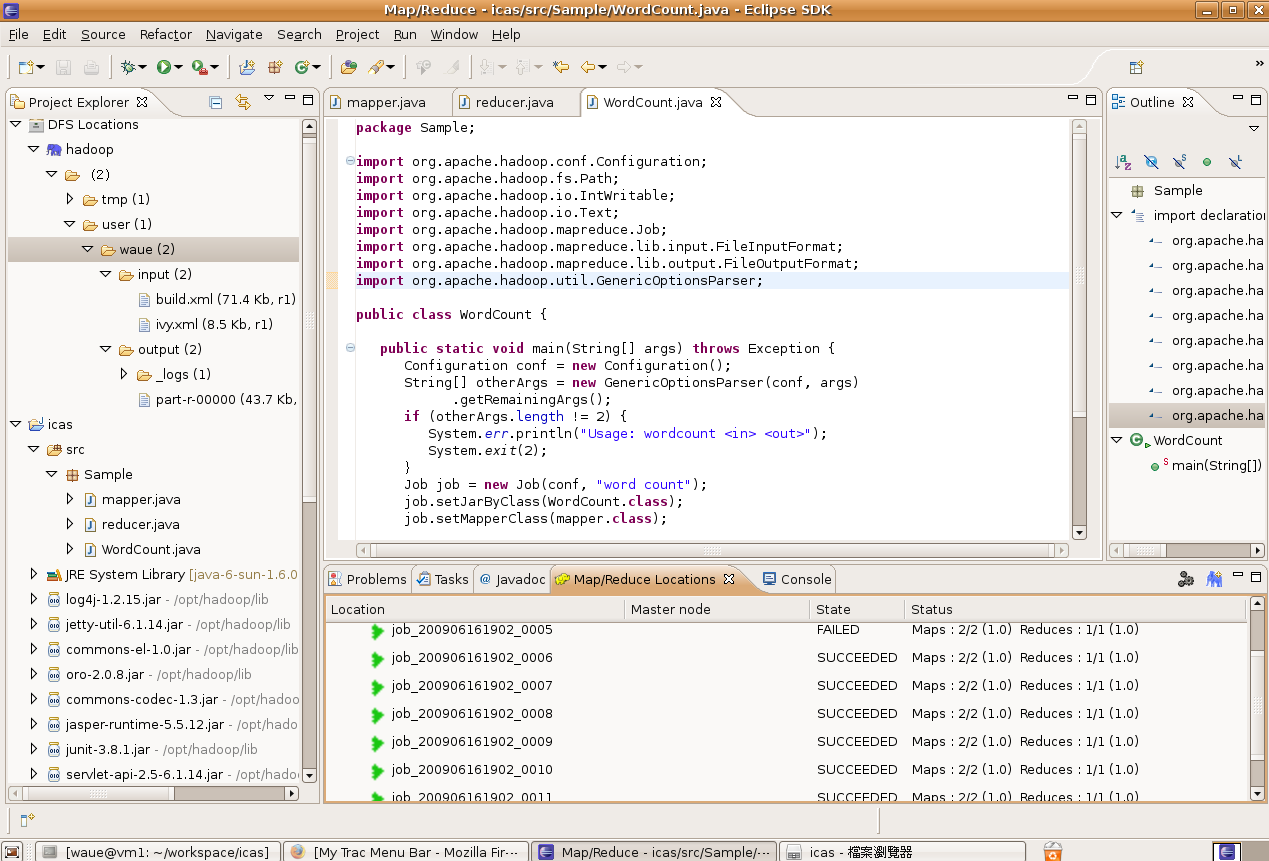

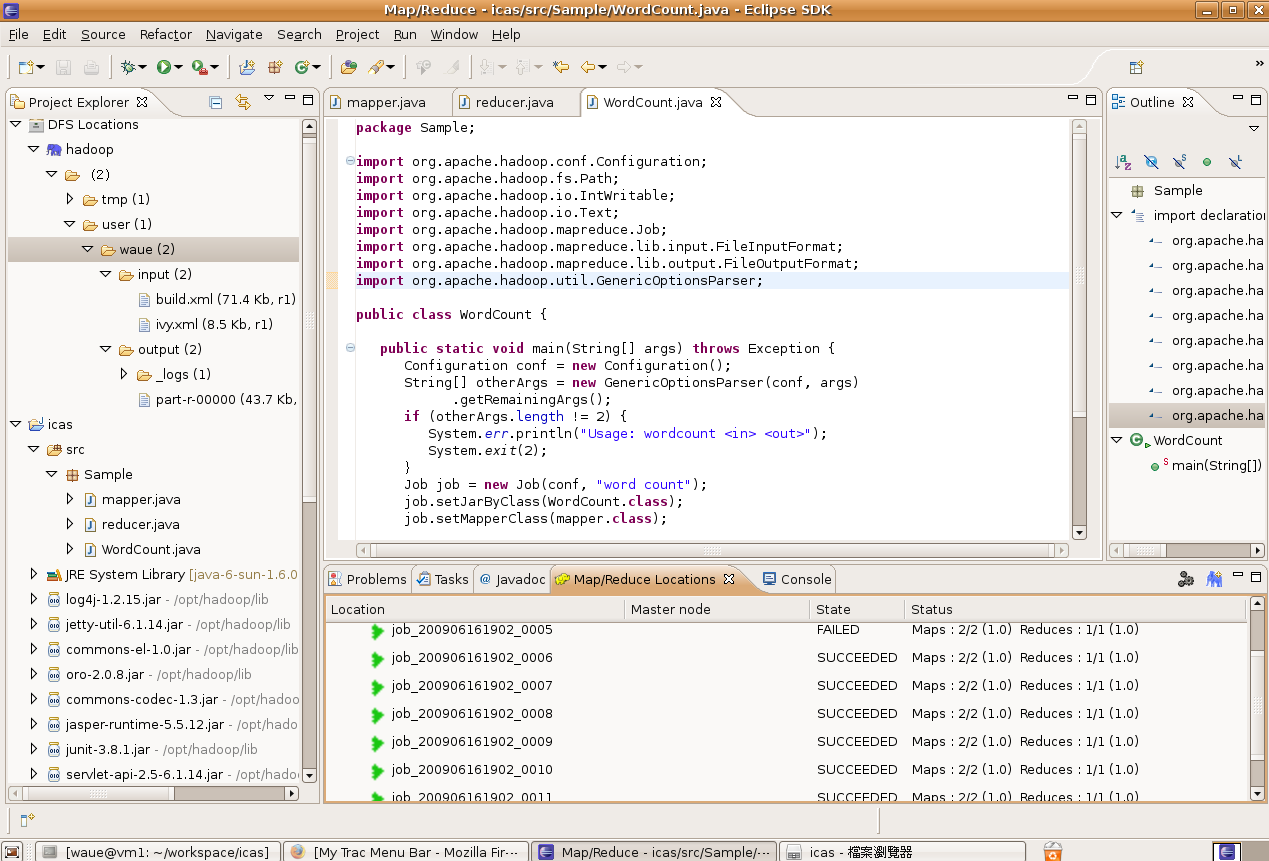

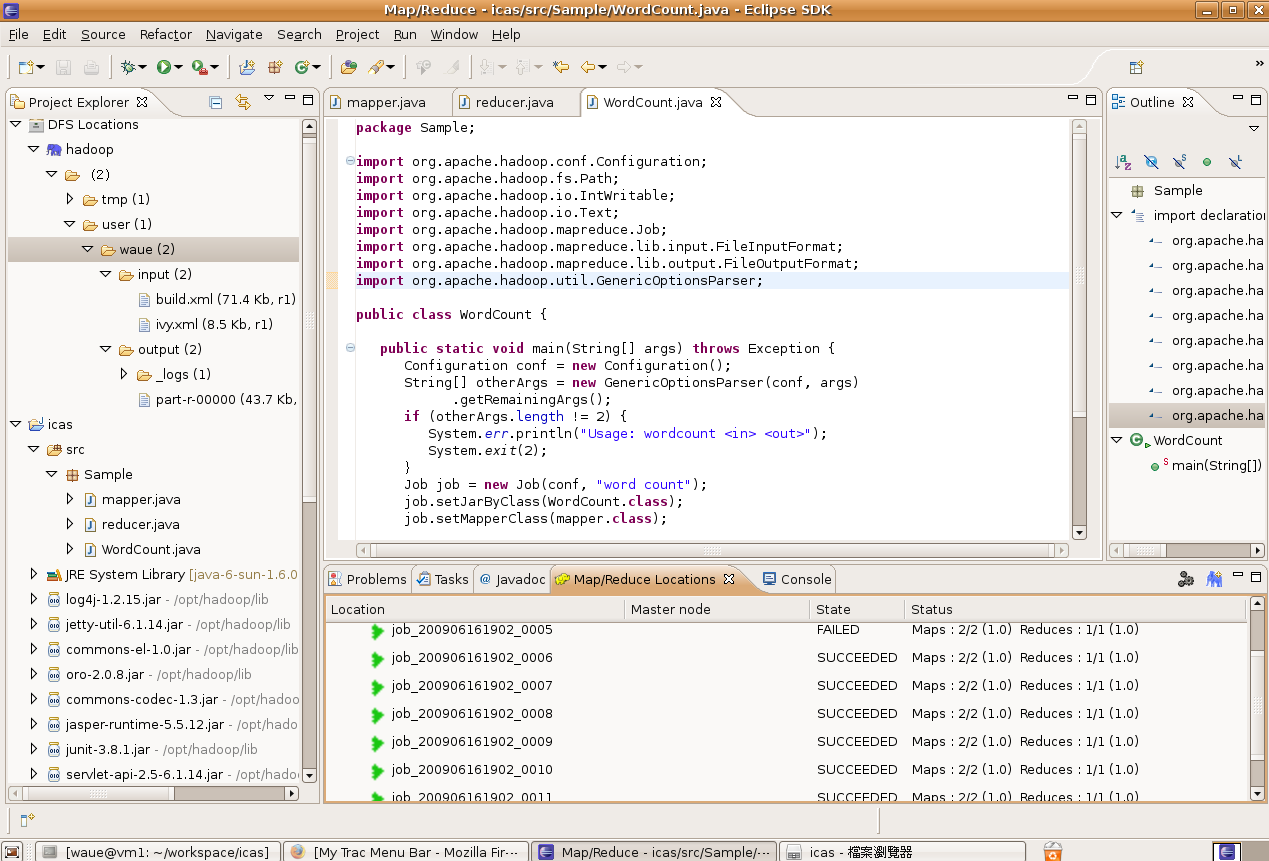

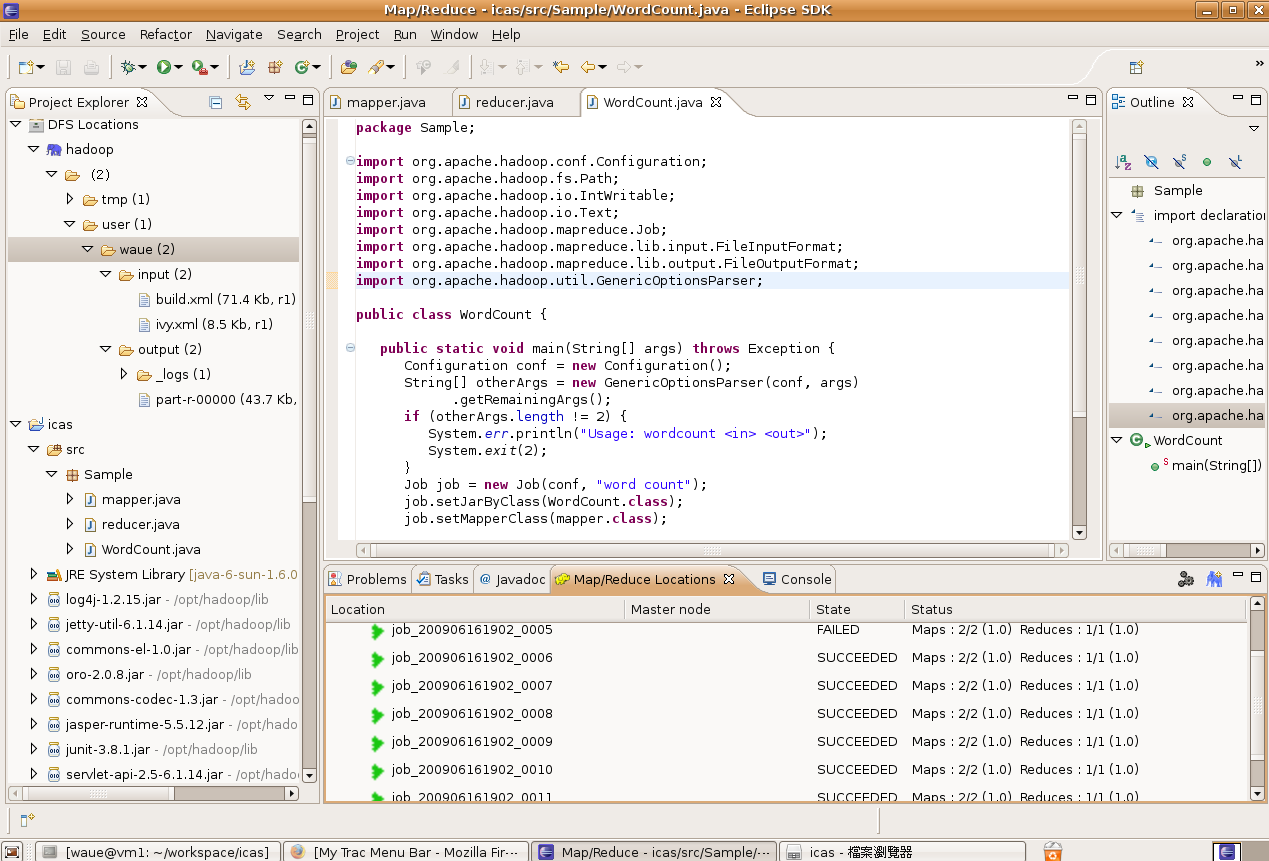

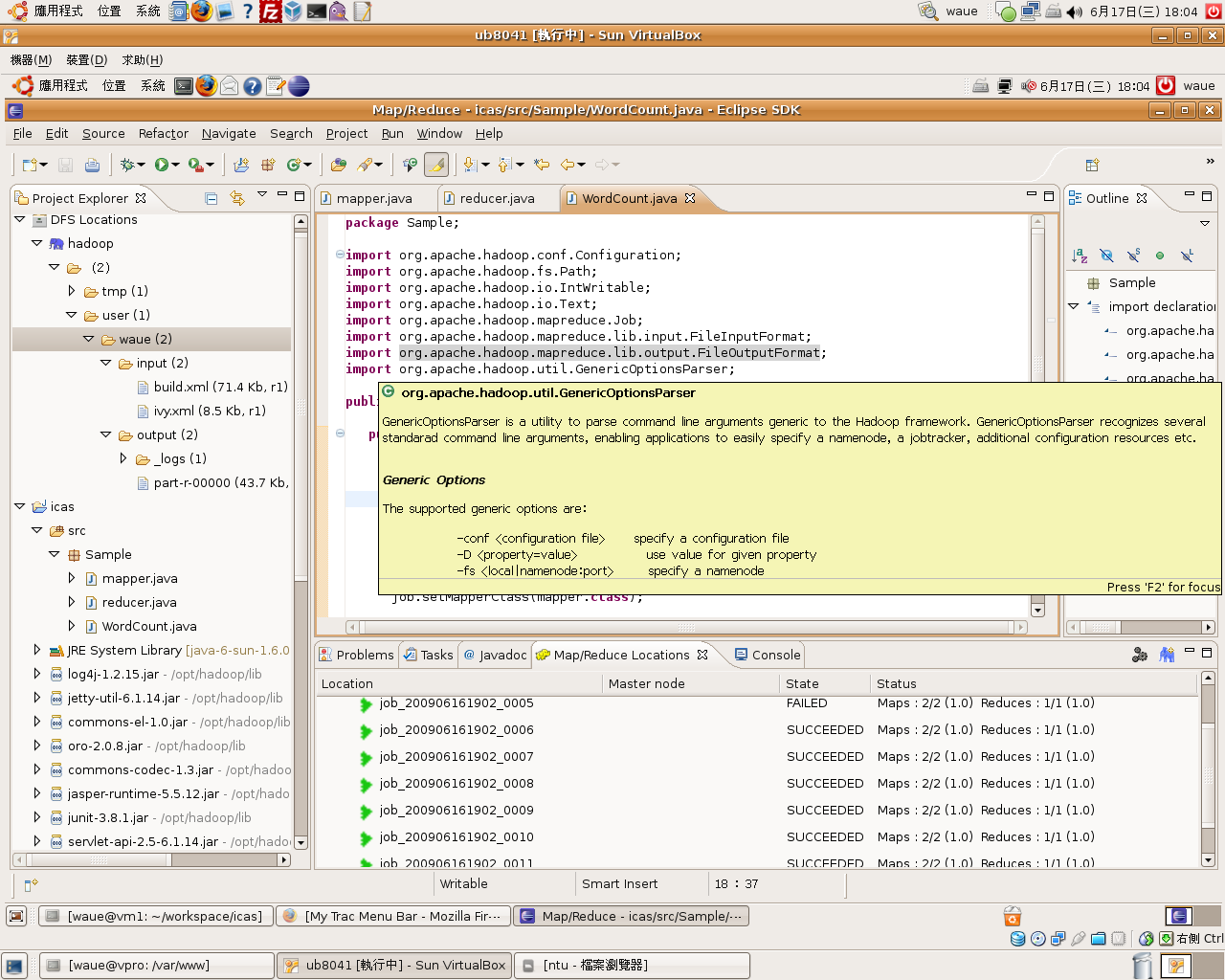

- Back in the Eclipse window, the finished job appears in the lower pane, and a new output folder appears in the left pane. The file part-r-00000 holds our result.

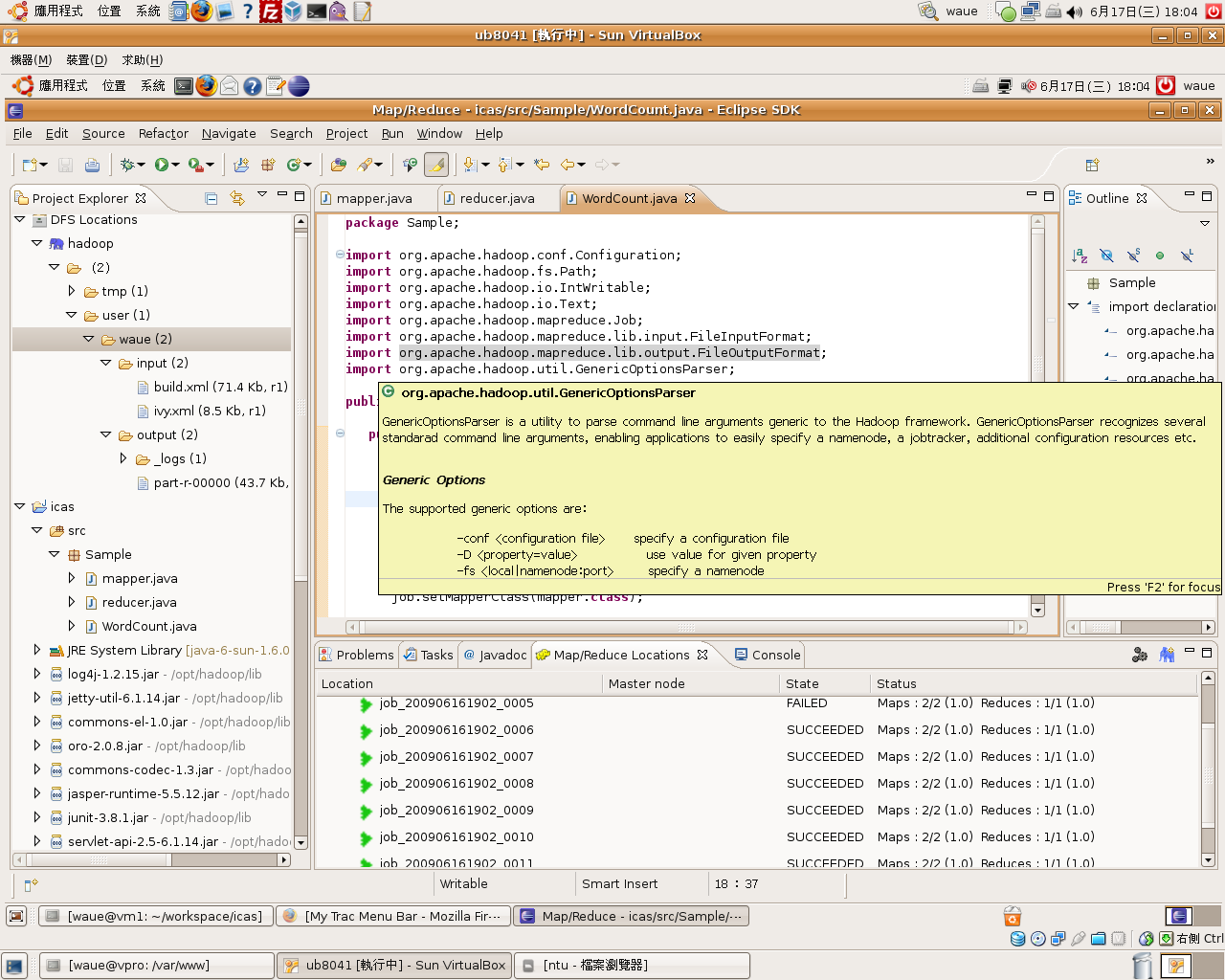

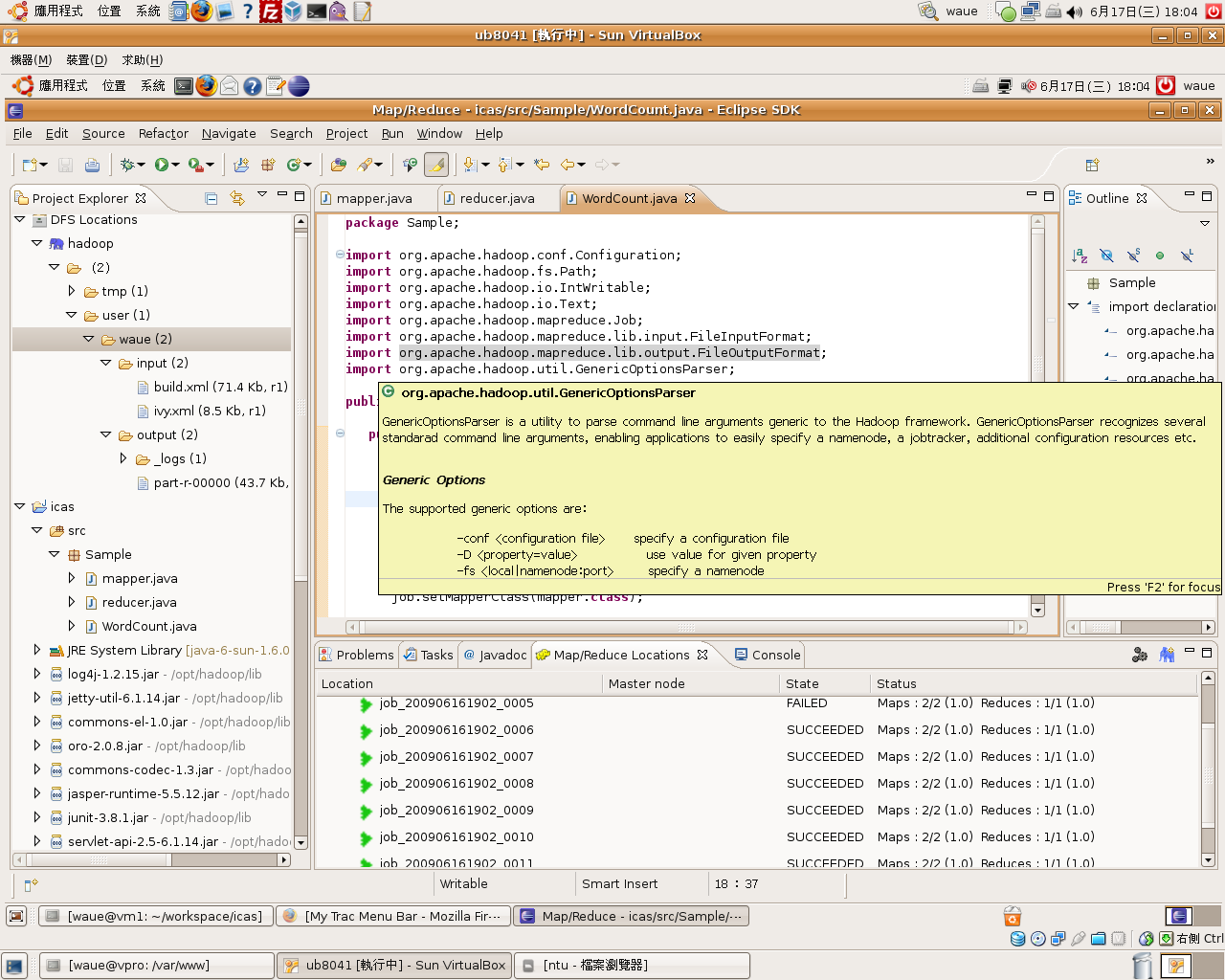

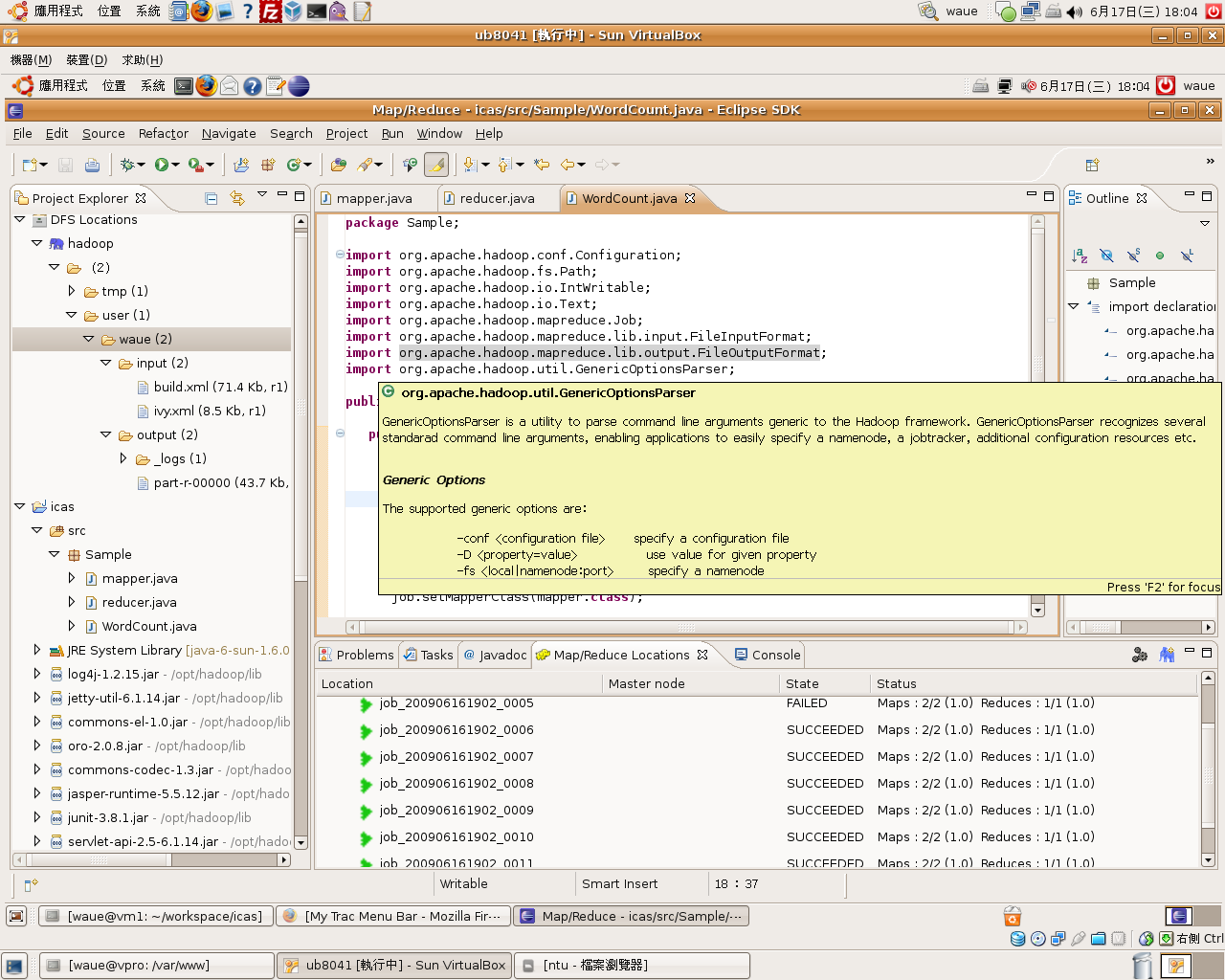

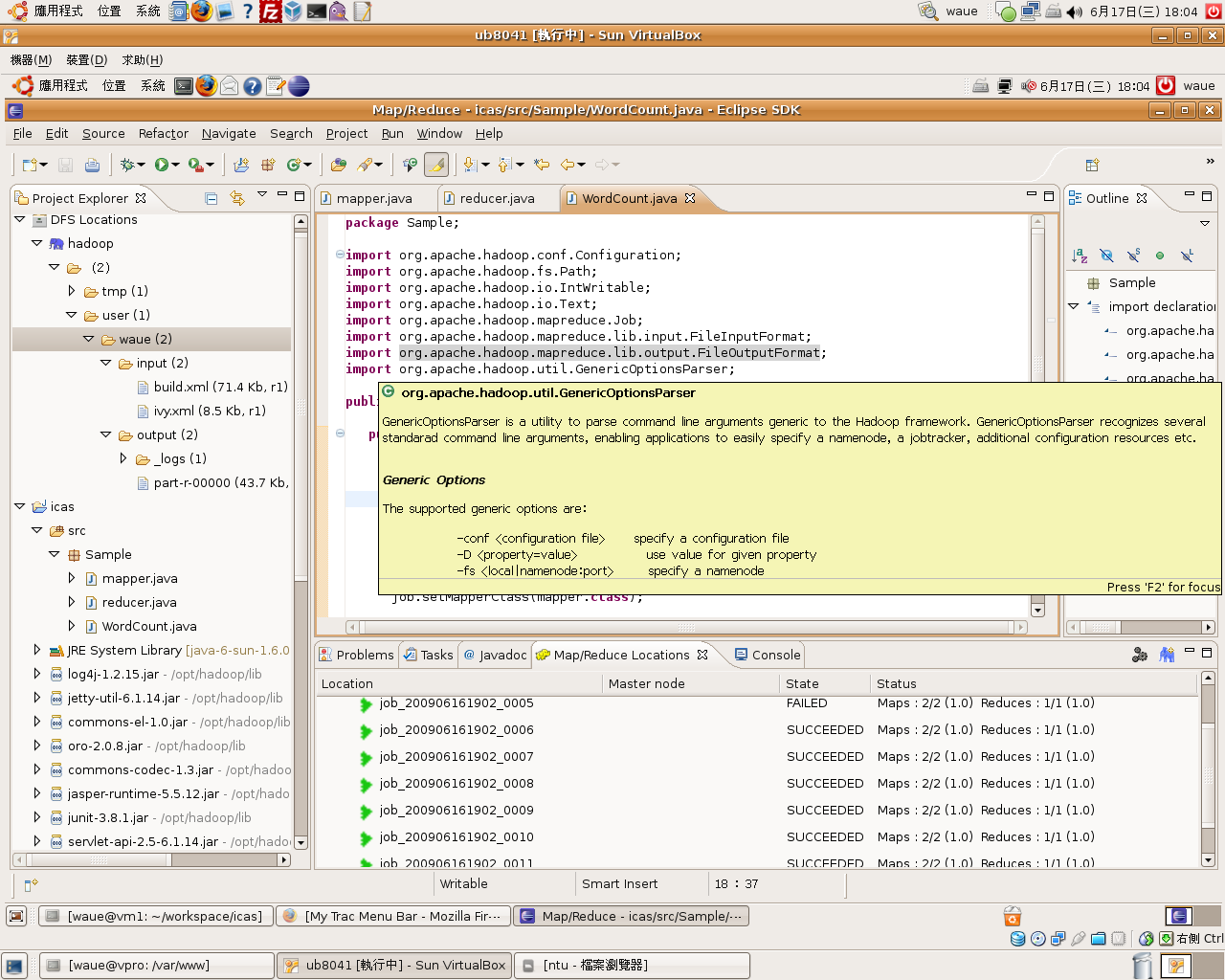

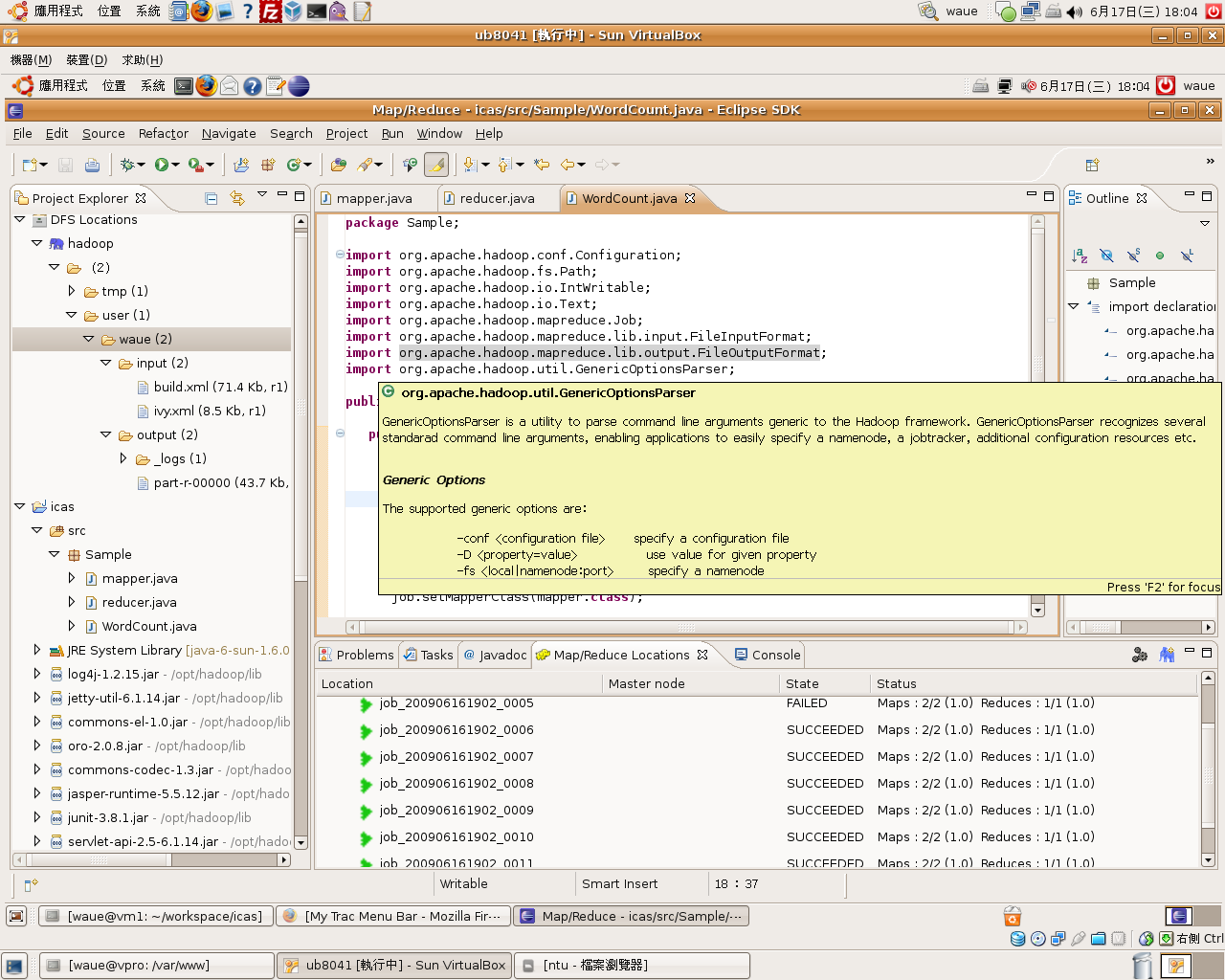

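- Because the complete javadoc is configured, Eclipse can also show full API documentation while you code.

make output

- 3. This command downloads the results from HDFS to the local machine and opens them in gedit.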

$ make output
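Each line of part-r-00000 holds a word, a TAB and its count, sorted by word. To see the most frequent words instead, you can post-process the downloaded file locally. The sketch below does this. `TopWords` is a hypothetical class, and it assumes the local copy is at /tmp/output/part-r-00000, the `LocalOutDir` set in the Makefile.

```java
import java.io.BufferedReader;
import java.io.FileReader;
import java.io.IOException;
import java.io.Reader;
import java.util.ArrayList;
import java.util.Collections;
import java.util.Comparator;
import java.util.List;

// Hypothetical helper: reads "word<TAB>count" lines and returns the n most frequent words.
class TopWords {

    static List<String[]> top(Reader in, int n) throws IOException {
        List<String[]> rows = new ArrayList<String[]>();
        BufferedReader br = new BufferedReader(in);
        String line;
        while ((line = br.readLine()) != null) {
            int tab = line.lastIndexOf('\t');
            if (tab < 0) {
                continue; // skip lines without a TAB separator
            }
            rows.add(new String[] {line.substring(0, tab), line.substring(tab + 1)});
        }
        // Sort by count, highest first; the sort is stable, so ties keep the file's word order.
        Collections.sort(rows, new Comparator<String[]>() {
            public int compare(String[] a, String[] b) {
                return Integer.valueOf(b[1]).compareTo(Integer.valueOf(a[1]));
            }
        });
        return rows.subList(0, Math.min(n, rows.size()));
    }

    public static void main(String[] args) throws IOException {
        Reader in = new FileReader("/tmp/output/part-r-00000"); // LocalOutDir from the Makefile
        try {
            for (String[] r : top(in, 10)) {
                System.out.println(r[1] + "\t" + r[0]);
            }
        } finally {
            in.close();
        }
    }
}
```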

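make clean

- 4. This command deletes the output folder on HDFS. If you want to run make run again, run make clean first. Otherwise Hadoop will report that the output folder already exists and refuse to run the job!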

$ make clean

五、??<ul style=

位作者Mail家高速路中心-格技Wei-Yu Chenwaue @ nchc.org.tw

?

0.0 Info Update??- Last Update: 2010/01/22

最新版本的 Eclipse 3.5 搭配 Ubuntu 9.04 + hadoop-eclipse-plugin 0.20.1 ,初步功能皆可正常作

但 Ubuntu 9.10 的 各版本 Eclipse , 似乎有 gtk 形介面的bug ,有此一增加 GDK_NATIVE_WINDOWS=1 就可以解,但初步似乎用

0.1 境明??- ubuntu 8.10

- sun-java-6

- eclipse 3.4.2

- hadoop 0.20.0

0.2 目明??- 使用者:waue

- 使用者家目: /home/waue

- 案目 : /home/waue/workspace

- hadoop目: /opt/hadoop

一、安??

安的部份必要都一模一,提供考,反正只要安好java , hadoop , eclipse,清楚自己的路就可以了

1.1. 安java??

首先安java 基本套件

$ sudo apt-get install java-common sun-java6-bin sun-java6-jdk sun-java6-jre

1.1.1. 安sun-java6-doc??

?

1 javadoc (jdk-6u10-docs.zip) 下下?下

2 下完後案放在 /tmp/ 下

3 行

?

$ sudo apt-get install sun-java6-doc

1.2. ssh 安定??$ apt-get install ssh $ ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa $ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys $ ssh localhost

行ssh localhost 有出密的息

1.3. 安hadoop??

安hadoop0.20到/opt/取目名hadoop

$ cd ~ $ wget http://apache.ntu.edu.tw/hadoop/core/hadoop-0.20.0/hadoop-0.20.0.tar.gz $ tar zxvf hadoop-0.20.0.tar.gz $ sudo mv hadoop-0.20.0 /opt/ $ sudo chown -R waue:waue /opt/hadoop-0.20.0 $ sudo ln -sf /opt/hadoop-0.20.0 /opt/hadoop

- /opt/hadoop/conf/hadoop-env.sh

export?JAVA_HOME=/usr/lib/jvm/java-6-sun?export?HADOOP_HOME=/opt/hadoop?exportPATH=$PATH:/opt/hadoop/bin

- /opt/hadoop/conf/core-site.xml

<configuration> <property> <name>fs.default.name</name> <value>hdfs://localhost:9000</value> </property> <property> <name>hadoop.tmp.dir</name> <value>/tmp/hadoop/hadoop-${user.name}</value> </property> </configuration>- /opt/hadoop/conf/hdfs-site.xml

<configuration> <property> <name>dfs.replication</name> <value>1</value> </property> </configuration>

- /opt/hadoop/conf/mapred-site.xml

<configuration> <property> <name>mapred.job.tracker</name> <value>localhost:9001</value> </property> </configuration>

$ cd /opt/hadoop $ source /opt/hadoop/conf/hadoop-env.sh $ hadoop namenode -format $ start-all.sh $ hadoop fs -put conf input $ hadoop fs -ls

- 有息代表

1.4. 安eclipse??

?

- 在此提供方法下案

- 方法一:下?eclipse SDK 3.4.2 Classic,且放案到家目

- 方法二:上指令

$ cd ~ $ wget http://ftp.cs.pu.edu.tw/pub/eclipse/eclipse/downloads/drops/R-3.4.2-200902111700/eclipse-SDK-3.4.2-linux-gtk.tar.gz

- eclipse 已下到家目後,行下面指令:

?

$ cd ~ $ tar -zxvf eclipse-SDK-3.4.2-linux-gtk.tar.gz $ sudo mv eclipse /opt $ sudo ln -sf /opt/eclipse/eclipse /usr/local/bin/

二、 建立案??2.1 安hadoop 的 eclipse plugin??- 入hadoop 0.20.0 eclipse plugin

?

$ cd /opt/hadoop $ sudo cp /opt/hadoop/contrib/eclipse-plugin/hadoop-0.20.0-eclipse-plugin.jar /opt/eclipse/plugins

$ sudo vim /opt/eclipse/eclipse.ini

- 可斟酌考eclipse.ini容(非必要)

?

-startup plugins/org.eclipse.equinox.launcher_1.0.101.R34x_v20081125.jar --launcher.library plugins/org.eclipse.equinox.launcher.gtk.linux.x86_1.0.101.R34x_v20080805 -showsplash org.eclipse.platform --launcher.XXMaxPermSize 512m -vmargs -Xms40m -Xmx512m

2.2 eclipse??- 打eclipse

?

$ eclipse &

一始出你要工作目放在哪:在我用值

PS: 之後的明是在eclipse 上的介面操作

2.3 野??window ->open pers.. ->other.. ->map/reduce

定要用 Map/Reduce 的野

使用 Map/Reduce 的野後的介面呈

2.4 建立案??file ->new ->project ->Map/Reduce ->Map/Reduce Project ->next

建立mapreduce案(1)

建立mapreduce案的(2)

project name-> 入 : icas?(意)?use default hadoop -> Configur Hadoop install... -> 入:"/opt/hadoop"?-> ok Finish

2.5 定案??

由於建立了icas案,因此eclipse已建立了新的案,出在左窗,右料,properties

Step1. 右project的properties做部定

Step2. 入案的部定

hadoop的javadoc的定(1)

- java Build Path -> Libraries -> hadoop-0.20.0-ant.jar

- java Build Path -> Libraries -> hadoop-0.20.0-core.jar

- java Build Path -> Libraries -> hadoop-0.20.0-tools.jar

- 以 hadoop-0.20.0-core.jar 的定容如下,其他依此推

?

source?...-> 入:/opt/opt/hadoop-0.20.0/src javadoc ...-> 入:file:/opt/hadoop/docs/api/

Step3. hadoop的javadoc的定完後(2)

Step4. java本身的javadoc的定(3)

?

- javadoc location -> 入:file:/usr/lib/jvm/java-6-sun/docs/api/

定完後回到eclipse 主窗

2.6 接hadoop server??Step1. 窗右下角色大象示"Map/Reduce Locations tag" -> 右的色大象示:

Step2. 行eclipse hadoop 的定(2)

Location Name -> 入:hadoop?(意)?Map/Reduce Master -> Host-> 入:localhost Map/Reduce Master -> Port-> 入:9001 DFS Master -> Host-> 入:9000 Finish

定完後,可以看到下方多了一色大象,左方展料也可以秀出在hdfs的案

三、 撰例程式??- 之前在eclipse上已了案icas,因此目在:

- /home/waue/workspace/icas

- 在目有料:

- src : 用程式原始

- bin : 用後的class

- 如此一原始和就不混在一起,之後生jar很有助

- 在我一例程式 :?WordCount

3.1 mapper.java??

?

- new

?

File ->new ->mapper

- create

source?folder-> 入: icas/src Package : Sample Name -> : mapper

- modify

?

package?Sample;?import?java.io.IOException;?import?java.util.StringTokenizer;?importorg.apache.hadoop.io.IntWritable;?import?org.apache.hadoop.io.Text;?importorg.apache.hadoop.mapreduce.Mapper;?public?class?mapper?extends?Mapper<Object,?Text,?Text,IntWritable>?{?private?final?static?IntWritable one?=?new?IntWritable(1);?private?Text word?=?newText();?public?void?map(Object key,?Text value,?Context context)?throws?IOException,InterruptedException?{?StringTokenizer itr?=?new?StringTokenizer(value.toString());?while(itr.hasMoreTokens())?{?word.set(itr.nextToken());?context.write(word,?one);?}?}?}建立mapper.java後,入程式

3.2 reducer.java??- new

- File -> new -> reducer

- create

source?folder-> 入: icas/src Package : Sample Name -> : reducer

- modify

?

package?Sample;?import?java.io.IOException;?import?org.apache.hadoop.io.IntWritable;?importorg.apache.hadoop.io.Text;?import?org.apache.hadoop.mapreduce.Reducer;?public?class?reducerextends?Reducer<Text,?IntWritable,?Text,?IntWritable>?{?private?IntWritable result?=?newIntWritable();?public?void?reduce(Text key,?Iterable<IntWritable>?values,?Context context)?throwsIOException,?InterruptedException?{?int?sum?=?0;?for?(IntWritable val?:?values)?{?sum?+=val.get();?}?result.set(sum);?context.write(key,?result);?}?}- File -> new -> Map/Reduce Driver

3.3?WordCount.java (main function)??- new

建立WordCount.java,此用mapper reducer,因此 Map/Reduce Driver

- create

source?folder-> 入: icas/src Package : Sample Name -> : WordCount.java

- modify

package?Sample;?import?org.apache.hadoop.conf.Configuration;?import?org.apache.hadoop.fs.Path;import?org.apache.hadoop.io.IntWritable;?import?org.apache.hadoop.io.Text;?importorg.apache.hadoop.mapreduce.Job;?import?org.apache.hadoop.mapreduce.lib.input.FileInputFormat;import?org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;?importorg.apache.hadoop.util.GenericOptionsParser;?public?class?WordCount?{?public?static?voidmain(String[]?args)?throws?Exception?{?Configuration conf?=?new?Configuration();?String[]otherArgs?=?new?GenericOptionsParser(conf,?args)?.getRemainingArgs();?if?(otherArgs.length?!=?2)?{System.err.println("Usage: wordcount <in> <out>");?System.exit(2);?}?Job job?=?new?Job(conf,?"word count");?job.setJarByClass(WordCount.class);?job.setMapperClass(mapper.class);job.setCombinerClass(reducer.class);?job.setReducerClass(reducer.class);job.setOutputKeyClass(Text.class);?job.setOutputValueClass(IntWritable.class);FileInputFormat.addInputPath(job,?new?Path(otherArgs[0]));?FileOutputFormat.setOutputPath(job,?newPath(otherArgs[1]));?System.exit(job.waitForCompletion(true)???0?:?1);?}?}三完成後存後,整程式建立完成

- 三都存後,可以看到icas案下的src,bin都有案生,我用指令check

?

$ cd workspace/icas $ ls src/Sample/ mapper.java reducer.java WordCount.java $ ls bin/Sample/ mapper.class reducer.class WordCount.class

四、例程式??

?

- 由於hadoop 0.20 此版本的eclipse-plugin依不完整 ,如:

- 右WordCount.java -> run as -> run on Hadoop :有效果

- 因此,4.1 提供一eclipse 上解除 run-on-hadoop 封印的方法。而4.2 是避run-on-hadoop 功能,用command mode端指令的方法行。

4.1 解除run-on-hadoop封印??

有一心的hadoop使用者提供一能 run-on-hadoop 功能恢的方法。

原因是hadoop 的 eclipse-plugin 也是用eclipse europa 版本的,而eclipse 的各版本 3.2 , 3.3, 3.4 也都有或多或少的差性存在。

因此如果先用eclipse europa 建立一新案,之後把europa的eclipse版本掉,用eclipse 3.4,之後案就能用run-on-mapreduce 功能!

有趣的可以!(感逢甲工所同)

4.2 用端指令??4.2.1 生Makefile ??$ cd /home/waue/workspace/icas/ $ gedit Makefile

- 入以下Makefile的容

JarFile="sample-0.1.jar"?MainFunc="Sample.WordCount"?LocalOutDir="/tmp/output"?all:help jar: jar -cvf?${JarFile}?-C bin/ . run: hadoop jar?${JarFile}?${MainFunc}?input output clean: hadoop fs -rmr output output: rm -rf?${LocalOutDir}?hadoop fs -get output?${LocalOutDir}?gedit${LocalOutDir}/part-r-00000 &?help: @echo?"Usage:"?@echo?" make jar - Build Jar File."?@echo?" make clean - Clean up Output directory on HDFS."?@echo?" make run - Run your MapReduce code on Hadoop."?@echo?" make output - Download and show output file"?@echo?" make help - Show Makefile options."?@echo?" "?@echo?"Example:"?@echo?" make jar; make run; make output; make clean"4.2.2 行??

?

- 行Makefile,可以到目下,行make [],若不知道何,可以打make 或 make help

- make 的用法明

$ cd /home/waue/workspace/icas/ $ make Usage: make jar - Build Jar File. make clean - Clean up Output directory on HDFS. make run - Run your MapReduce code on Hadoop. make output - Download and show output file make help - Show Makefile options. Example: make jar; make run; make output; make clean

- 下面提供各make 的

?

make jar??- 1. 生jar

?

$ make jar

make run??- 2. 跑我的wordcount 於hadoop上

$ make run

- make run基本上能正的作到束,因此代表我在eclipse的程式可以利在hadoop0.20的平台上行。

- 而回到eclipse窗,我可以看到下方窗run完的job呈出;左方窗也多出output料,part-r-00000就是我的果

- 因有定完整的javadoc, 因此可以得到的解助

make output??- 3. 指令是助使用者果hdfs下到local端,且用gedit你的果

$ make output

make clean??- 4. 指令用把hdfs上的output料清除。如果你想要在跑一次make run,先行make clean,否hadoop告你,output料已存在,而拒工作喔!

?

$ make clean

五、??<ul style=

最新版本的 Eclipse 3.5 搭配 Ubuntu 9.04 + hadoop-eclipse-plugin 0.20.1 ,初步功能皆可正常作

但 Ubuntu 9.10 的 各版本 Eclipse , 似乎有 gtk 形介面的bug ,有此一增加 GDK_NATIVE_WINDOWS=1 就可以解,但初步似乎用

0.1 境明??- ubuntu 8.10

- sun-java-6

- eclipse 3.4.2

- hadoop 0.20.0

0.2 目明??- 使用者:waue

- 使用者家目: /home/waue

- 案目 : /home/waue/workspace

- hadoop目: /opt/hadoop

一、安??

安的部份必要都一模一,提供考,反正只要安好java , hadoop , eclipse,清楚自己的路就可以了

1.1. 安java??

首先安java 基本套件

$ sudo apt-get install java-common sun-java6-bin sun-java6-jdk sun-java6-jre

1.1.1. 安sun-java6-doc??

?

1 javadoc (jdk-6u10-docs.zip) 下下?下

2 下完後案放在 /tmp/ 下

3 行

?

$ sudo apt-get install sun-java6-doc

1.2. ssh 安定??$ apt-get install ssh $ ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa $ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys $ ssh localhost

行ssh localhost 有出密的息

1.3. 安hadoop??

安hadoop0.20到/opt/取目名hadoop

$ cd ~ $ wget http://apache.ntu.edu.tw/hadoop/core/hadoop-0.20.0/hadoop-0.20.0.tar.gz $ tar zxvf hadoop-0.20.0.tar.gz $ sudo mv hadoop-0.20.0 /opt/ $ sudo chown -R waue:waue /opt/hadoop-0.20.0 $ sudo ln -sf /opt/hadoop-0.20.0 /opt/hadoop

- /opt/hadoop/conf/hadoop-env.sh

export?JAVA_HOME=/usr/lib/jvm/java-6-sun?export?HADOOP_HOME=/opt/hadoop?exportPATH=$PATH:/opt/hadoop/bin

- /opt/hadoop/conf/core-site.xml

<configuration> <property> <name>fs.default.name</name> <value>hdfs://localhost:9000</value> </property> <property> <name>hadoop.tmp.dir</name> <value>/tmp/hadoop/hadoop-${user.name}</value> </property> </configuration>- /opt/hadoop/conf/hdfs-site.xml

<configuration> <property> <name>dfs.replication</name> <value>1</value> </property> </configuration>

- /opt/hadoop/conf/mapred-site.xml

<configuration> <property> <name>mapred.job.tracker</name> <value>localhost:9001</value> </property> </configuration>

$ cd /opt/hadoop $ source /opt/hadoop/conf/hadoop-env.sh $ hadoop namenode -format $ start-all.sh $ hadoop fs -put conf input $ hadoop fs -ls

- 有息代表

1.4. 安eclipse??

?

- 在此提供方法下案

- 方法一:下?eclipse SDK 3.4.2 Classic,且放案到家目

- 方法二:上指令

$ cd ~ $ wget http://ftp.cs.pu.edu.tw/pub/eclipse/eclipse/downloads/drops/R-3.4.2-200902111700/eclipse-SDK-3.4.2-linux-gtk.tar.gz

- eclipse 已下到家目後,行下面指令:

?

$ cd ~ $ tar -zxvf eclipse-SDK-3.4.2-linux-gtk.tar.gz $ sudo mv eclipse /opt $ sudo ln -sf /opt/eclipse/eclipse /usr/local/bin/

二、 建立案??2.1 安hadoop 的 eclipse plugin??- 入hadoop 0.20.0 eclipse plugin

?

$ cd /opt/hadoop $ sudo cp /opt/hadoop/contrib/eclipse-plugin/hadoop-0.20.0-eclipse-plugin.jar /opt/eclipse/plugins

$ sudo vim /opt/eclipse/eclipse.ini

- 可斟酌考eclipse.ini容(非必要)

?

-startup plugins/org.eclipse.equinox.launcher_1.0.101.R34x_v20081125.jar --launcher.library plugins/org.eclipse.equinox.launcher.gtk.linux.x86_1.0.101.R34x_v20080805 -showsplash org.eclipse.platform --launcher.XXMaxPermSize 512m -vmargs -Xms40m -Xmx512m

2.2 eclipse??- 打eclipse

?

$ eclipse &

一始出你要工作目放在哪:在我用值

PS: 之後的明是在eclipse 上的介面操作

2.3 野??window ->open pers.. ->other.. ->map/reduce

定要用 Map/Reduce 的野

使用 Map/Reduce 的野後的介面呈

2.4 建立案??file ->new ->project ->Map/Reduce ->Map/Reduce Project ->next

建立mapreduce案(1)

建立mapreduce案的(2)

project name-> 入 : icas?(意)?use default hadoop -> Configur Hadoop install... -> 入:"/opt/hadoop"?-> ok Finish

2.5 定案??

由於建立了icas案,因此eclipse已建立了新的案,出在左窗,右料,properties

Step1. 右project的properties做部定

Step2. 入案的部定

hadoop的javadoc的定(1)

- java Build Path -> Libraries -> hadoop-0.20.0-ant.jar

- java Build Path -> Libraries -> hadoop-0.20.0-core.jar

- java Build Path -> Libraries -> hadoop-0.20.0-tools.jar

- 以 hadoop-0.20.0-core.jar 的定容如下,其他依此推

?

source?...-> 入:/opt/opt/hadoop-0.20.0/src javadoc ...-> 入:file:/opt/hadoop/docs/api/

Step3. hadoop的javadoc的定完後(2)

Step4. java本身的javadoc的定(3)

?

- javadoc location -> 入:file:/usr/lib/jvm/java-6-sun/docs/api/

定完後回到eclipse 主窗

2.6 接hadoop server??Step1. 窗右下角色大象示"Map/Reduce Locations tag" -> 右的色大象示:

Step2. 行eclipse hadoop 的定(2)

Location Name -> 入:hadoop?(意)?Map/Reduce Master -> Host-> 入:localhost Map/Reduce Master -> Port-> 入:9001 DFS Master -> Host-> 入:9000 Finish

定完後,可以看到下方多了一色大象,左方展料也可以秀出在hdfs的案

三、 撰例程式??- 之前在eclipse上已了案icas,因此目在:

- /home/waue/workspace/icas

- 在目有料:

- src : 用程式原始

- bin : 用後的class

- 如此一原始和就不混在一起,之後生jar很有助

- 在我一例程式 :?WordCount

3.1 mapper.java??

?

- new

?

File ->new ->mapper

- create

source?folder-> 入: icas/src Package : Sample Name -> : mapper

- modify

?

package?Sample;?import?java.io.IOException;?import?java.util.StringTokenizer;?importorg.apache.hadoop.io.IntWritable;?import?org.apache.hadoop.io.Text;?importorg.apache.hadoop.mapreduce.Mapper;?public?class?mapper?extends?Mapper<Object,?Text,?Text,IntWritable>?{?private?final?static?IntWritable one?=?new?IntWritable(1);?private?Text word?=?newText();?public?void?map(Object key,?Text value,?Context context)?throws?IOException,InterruptedException?{?StringTokenizer itr?=?new?StringTokenizer(value.toString());?while(itr.hasMoreTokens())?{?word.set(itr.nextToken());?context.write(word,?one);?}?}?}建立mapper.java後,入程式

3.2 reducer.java??- new

- File -> new -> reducer

- create

source?folder-> 入: icas/src Package : Sample Name -> : reducer

- modify

?

package?Sample;?import?java.io.IOException;?import?org.apache.hadoop.io.IntWritable;?importorg.apache.hadoop.io.Text;?import?org.apache.hadoop.mapreduce.Reducer;?public?class?reducerextends?Reducer<Text,?IntWritable,?Text,?IntWritable>?{?private?IntWritable result?=?newIntWritable();?public?void?reduce(Text key,?Iterable<IntWritable>?values,?Context context)?throwsIOException,?InterruptedException?{?int?sum?=?0;?for?(IntWritable val?:?values)?{?sum?+=val.get();?}?result.set(sum);?context.write(key,?result);?}?}- File -> new -> Map/Reduce Driver

3.3?WordCount.java (main function)??- new

建立WordCount.java,此用mapper reducer,因此 Map/Reduce Driver

- create

source?folder-> 入: icas/src Package : Sample Name -> : WordCount.java

- modify

package?Sample;?import?org.apache.hadoop.conf.Configuration;?import?org.apache.hadoop.fs.Path;import?org.apache.hadoop.io.IntWritable;?import?org.apache.hadoop.io.Text;?importorg.apache.hadoop.mapreduce.Job;?import?org.apache.hadoop.mapreduce.lib.input.FileInputFormat;import?org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;?importorg.apache.hadoop.util.GenericOptionsParser;?public?class?WordCount?{?public?static?voidmain(String[]?args)?throws?Exception?{?Configuration conf?=?new?Configuration();?String[]otherArgs?=?new?GenericOptionsParser(conf,?args)?.getRemainingArgs();?if?(otherArgs.length?!=?2)?{System.err.println("Usage: wordcount <in> <out>");?System.exit(2);?}?Job job?=?new?Job(conf,?"word count");?job.setJarByClass(WordCount.class);?job.setMapperClass(mapper.class);job.setCombinerClass(reducer.class);?job.setReducerClass(reducer.class);job.setOutputKeyClass(Text.class);?job.setOutputValueClass(IntWritable.class);FileInputFormat.addInputPath(job,?new?Path(otherArgs[0]));?FileOutputFormat.setOutputPath(job,?newPath(otherArgs[1]));?System.exit(job.waitForCompletion(true)???0?:?1);?}?}三完成後存後,整程式建立完成

- 三都存後,可以看到icas案下的src,bin都有案生,我用指令check

?

$ cd workspace/icas $ ls src/Sample/ mapper.java reducer.java WordCount.java $ ls bin/Sample/ mapper.class reducer.class WordCount.class

四、例程式??

?

- 由於hadoop 0.20 此版本的eclipse-plugin依不完整 ,如:

- 右WordCount.java -> run as -> run on Hadoop :有效果

- 因此,4.1 提供一eclipse 上解除 run-on-hadoop 封印的方法。而4.2 是避run-on-hadoop 功能,用command mode端指令的方法行。

4.1 解除run-on-hadoop封印??

有一心的hadoop使用者提供一能 run-on-hadoop 功能恢的方法。

原因是hadoop 的 eclipse-plugin 也是用eclipse europa 版本的,而eclipse 的各版本 3.2 , 3.3, 3.4 也都有或多或少的差性存在。

因此如果先用eclipse europa 建立一新案,之後把europa的eclipse版本掉,用eclipse 3.4,之後案就能用run-on-mapreduce 功能!

有趣的可以!(感逢甲工所同)

4.2 用端指令??4.2.1 生Makefile ??$ cd /home/waue/workspace/icas/ $ gedit Makefile

- 入以下Makefile的容

JarFile="sample-0.1.jar"?MainFunc="Sample.WordCount"?LocalOutDir="/tmp/output"?all:help jar: jar -cvf?${JarFile}?-C bin/ . run: hadoop jar?${JarFile}?${MainFunc}?input output clean: hadoop fs -rmr output output: rm -rf?${LocalOutDir}?hadoop fs -get output?${LocalOutDir}?gedit${LocalOutDir}/part-r-00000 &?help: @echo?"Usage:"?@echo?" make jar - Build Jar File."?@echo?" make clean - Clean up Output directory on HDFS."?@echo?" make run - Run your MapReduce code on Hadoop."?@echo?" make output - Download and show output file"?@echo?" make help - Show Makefile options."?@echo?" "?@echo?"Example:"?@echo?" make jar; make run; make output; make clean"4.2.2 行??

?

- To use the Makefile, go to the project directory and run make [target]. If you are not sure which targets exist, type make or make help.

- make usage:

```
$ cd /home/waue/workspace/icas/
$ make
Usage:
 make jar - Build Jar File.
 make clean - Clean up Output directory on HDFS.
 make run - Run your MapReduce code on Hadoop.
 make output - Download and show output file
 make help - Show Makefile options.

Example:
 make jar; make run; make output; make clean
```

- Each make target is described below.

make jar

- 1. Build the jar file.

$ make jar
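To show what the jar target does, here is a rough plain-Java equivalent of `jar -cvf sample-0.1.jar -C bin/ .` built on `java.util.jar`. Entry names are taken relative to bin/, so the driver lands at `Sample/WordCount.class`. That path matches the class name `Sample.WordCount` that the run target passes to `hadoop jar`. The manifest names no Main-Class, which is why the Makefile passes the class name explicitly. `JarSketch` and `jarDirectory` are hypothetical names. Unlike the real jar tool, this sketch writes no directory entries and prints nothing. It assumes JDK 11 or later.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.List;
import java.util.jar.JarEntry;
import java.util.jar.JarOutputStream;
import java.util.jar.Manifest;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical helper: packs every file under classesDir into jarFile.
public class JarSketch {

    static void jarDirectory(Path classesDir, Path jarFile) throws IOException {
        // Minimal manifest; no Main-Class attribute is set.
        Manifest manifest = new Manifest();
        manifest.getMainAttributes().putValue("Manifest-Version", "1.0");

        List<Path> files;
        try (Stream<Path> walk = Files.walk(classesDir)) {
            files = walk.filter(Files::isRegularFile).sorted().collect(Collectors.toList());
        }
        try (OutputStream os = Files.newOutputStream(jarFile);
             JarOutputStream jar = new JarOutputStream(os, manifest)) {
            for (Path f : files) {
                // Entry names are relative to classesDir and always use '/'.
                String name = classesDir.relativize(f).toString().replace('\\', '/');
                jar.putNextEntry(new JarEntry(name));
                Files.copy(f, jar);
                jar.closeEntry();
            }
        }
    }

    public static void main(String[] args) throws IOException {
        // Run from the project directory, like "make jar".
        jarDirectory(Path.of("bin"), Path.of("sample-0.1.jar"));
    }
}
```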

make run

- 2. Run our wordcount program on Hadoop.

$ make run

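- make run should run through to completion normally. This shows that the program we wrote in Eclipse runs successfully on the Hadoop 0.20 platform.

- Back in the Eclipse window, the bottom pane shows the finished job, and the left pane now has an extra output folder. The file part-r-00000 inside it is our result.

- Because the full javadoc was configured, the editor also shows detailed API documentation as you work.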

make output

- 3. This command downloads the results from HDFS to the local machine and opens them in gedit.

$ make output
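After make output, the result is an ordinary local directory, so you can also post-process it in Java. The sketch below assumes the default TextOutputFormat layout of one `word<TAB>count` pair per line. It reads every `part-r-*` file (one per reducer) and ignores anything else Hadoop may leave in the directory, such as `_logs`. `OutputReader`, `readCounts` and `top` are hypothetical names. It assumes JDK 11 or later.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical helper: loads WordCount results copied to a local directory.
public class OutputReader {

    static Map<String, Integer> readCounts(Path outputDir) throws IOException {
        Map<String, Integer> counts = new TreeMap<>();
        // Only reducer output files; skips _logs and similar entries.
        try (DirectoryStream<Path> parts = Files.newDirectoryStream(outputDir, "part-r-*")) {
            for (Path part : parts) {
                for (String line : Files.readAllLines(part, StandardCharsets.UTF_8)) {
                    int tab = line.lastIndexOf('\t');
                    if (tab < 0) continue; // not in word<TAB>count form
                    int n = Integer.parseInt(line.substring(tab + 1).trim());
                    counts.merge(line.substring(0, tab), n, Integer::sum);
                }
            }
        }
        return counts;
    }

    // The n most frequent words, highest count first (ties stay alphabetical).
    static List<Map.Entry<String, Integer>> top(Map<String, Integer> counts, int n) {
        List<Map.Entry<String, Integer>> entries = new ArrayList<>(counts.entrySet());
        entries.sort(Map.Entry.<String, Integer>comparingByValue().reversed());
        return entries.subList(0, Math.min(n, entries.size()));
    }

    public static void main(String[] args) throws IOException {
        Map<String, Integer> counts = readCounts(Path.of("/tmp/output"));
        for (Map.Entry<String, Integer> e : top(counts, 10)) {
            System.out.println(e.getKey() + "\t" + e.getValue());
        }
    }
}
```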

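make clean

- 4. This command deletes the output folder on HDFS. If you want to run make run again, run make clean first. Otherwise Hadoop will complain that the output folder already exists and refuse to run the job!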

$ make clean
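On HDFS, the refusal described above comes from the job's output format. At submission it checks that the output path does not exist yet. The sketch below is a local-filesystem analogue only and never touches HDFS. It shows the same "fail if the output exists" guard. It also shows a recursive delete comparable to `hadoop fs -rmr output` on HDFS, or to `rm -rf ${LocalOutDir}` in the output target. `OutputGuard`, `checkOutputAbsent` and `deleteRecursively` are hypothetical names. It assumes JDK 11 or later. Note that `main` deletes /tmp/output by default, just as the output target does.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Comparator;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical local analogue of the HDFS output-directory handling.
public class OutputGuard {

    // Fail fast, as the job does, if the output directory already exists.
    static void checkOutputAbsent(Path dir) {
        if (Files.exists(dir)) {
            throw new IllegalStateException(
                "Output directory " + dir + " already exists; run make clean first");
        }
    }

    // Delete a whole directory tree, children before parents.
    static void deleteRecursively(Path dir) throws IOException {
        if (!Files.exists(dir)) {
            return; // nothing to clean
        }
        List<Path> paths;
        try (Stream<Path> walk = Files.walk(dir)) {
            // A descendant's path sorts after its ancestor's, so reverse order puts children first.
            paths = walk.sorted(Comparator.reverseOrder()).collect(Collectors.toList());
        }
        for (Path p : paths) {
            Files.delete(p);
        }
    }

    public static void main(String[] args) throws IOException {
        Path out = Path.of(args.length > 0 ? args[0] : "/tmp/output");
        deleteRecursively(out);
        checkOutputAbsent(out); // passes now that the directory is gone
        System.out.println("Ready for a new run: " + out);
    }
}
```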

5.
